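Deploying k8s 1.23.8

Initial setup

Installing ssh

    sudo apt install openssh-server
    sudo systemctl start ssh
    sudo systemctl status sshd
    sudo ps -e | grep ssh

Once it is installed, you can connect over ssh with Xshell or MobaXterm. The cluster will eventually be deployed on physical machines, so I drive the deployment remotely over ssh to simulate that.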

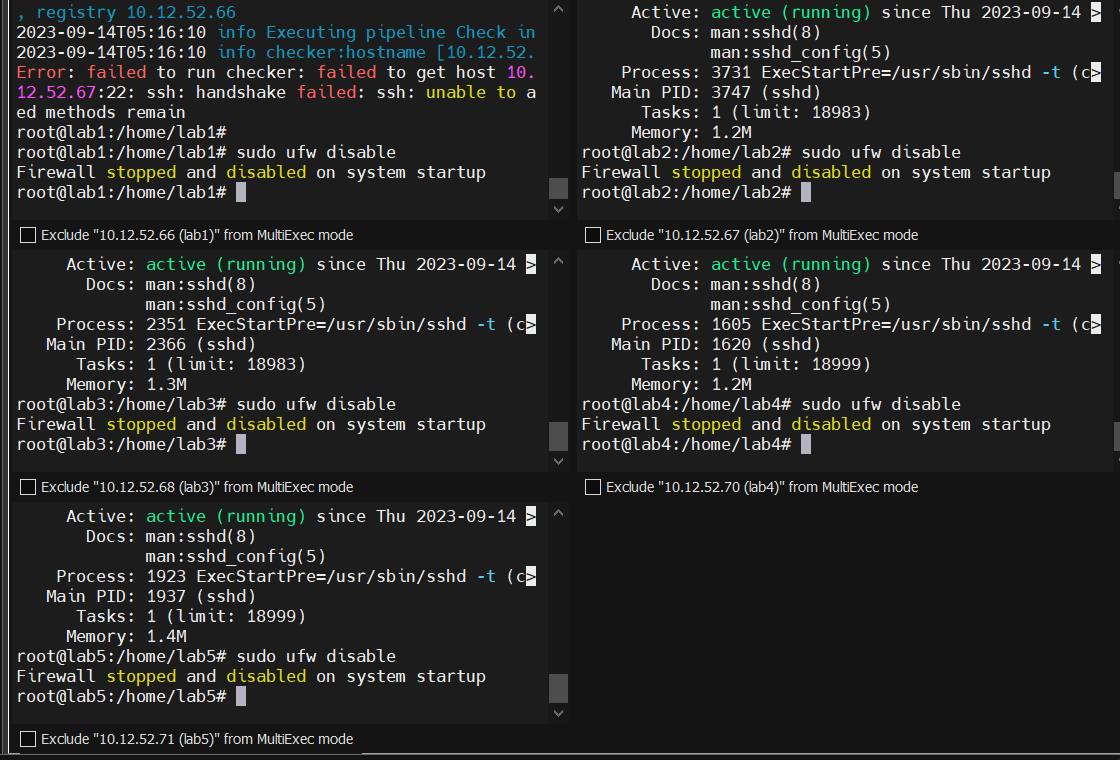

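1. First, disable the firewall

Disable swap

    sudo sed -ri 's/.*swap.*/#&/' /etc/fstab

This only comments out the swap entries in /etc/fstab, so it takes effect at the next reboot; `sudo swapoff -a` turns swap off immediately.

To restore swap later, use the following command:

    sudo sed -ri 's/#(.*swap.*)/\1/' /etc/fstab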
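The two `sed` commands match every line that contains the word swap, including ordinary comments such as the "swap was on ..." line that Ubuntu installers often leave in /etc/fstab. A minimal sketch of a stricter pair, assuming GNU sed and the usual fstab layout where the third field is the filesystem type; the function names are hypothetical:

```shell
# Comment out active swap entries (third field is "swap") in an fstab-style file.
# Lines that are already comments are left alone, so repeated runs are harmless.
disable_swap_entries() {
    sed -E -i '/^[[:space:]]*[^#[:space:]][^[:space:]]*[[:space:]]+[^[:space:]]+[[:space:]]+swap([[:space:]]|$)/ s/^/#/' "$1"
}

# Reverse of the above: strip the leading "#" from commented-out swap entries only.
enable_swap_entries() {
    sed -E -i 's/^#([[:space:]]*[^#[:space:]][^[:space:]]*[[:space:]]+[^[:space:]]+[[:space:]]+swap([[:space:]]|$))/\1/' "$1"
}
```

Run as root, `disable_swap_entries /etc/fstab` followed by `swapoff -a` would cover both the persistent and the running state.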

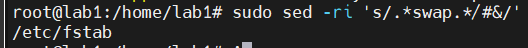

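Add the host entries on the master. Appending with a here-document requires switching to the root user, because the `>>` redirection is performed by the current shell rather than by sudo; if you edit the file with vim instead, sudo is enough.

The three blocks below belong to different environments (10.12.52.x are the physical machines, 192.168.52.51-53 are the virtual machines, and 192.168.52.134 is a single node), so run only the one that matches yours.

    cat >> /etc/hosts << EOF
    10.12.52.66 lab1
    10.12.52.67 lab2
    10.12.52.68 lab3
    10.12.52.70 lab4
    10.12.52.71 lab5
    EOF

    cat >> /etc/hosts << EOF
    192.168.52.51 lab1
    192.168.52.52 lab2
    192.168.52.53 lab3
    EOF

    cat >> /etc/hosts << EOF
    192.168.52.134 lab1
    EOF

After adding the entries, check the file.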
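Because `cat >>` appends blindly, running the step twice leaves duplicate lines in /etc/hosts. A small sketch of an idempotent variant; `add_host_entry` is a hypothetical helper, not part of any tool used in this post:

```shell
# Append "ip name" to a hosts-style file unless that exact mapping is already there.
add_host_entry() {
    local file=$1 ip=$2 name=$3
    if awk -v ip="$ip" -v name="$name" '
            $1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
            END { exit !found }' "$file"; then
        return 0    # mapping already present, nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}
```

As root, `add_host_entry /etc/hosts 10.12.52.66 lab1` could then be repeated safely for each node.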

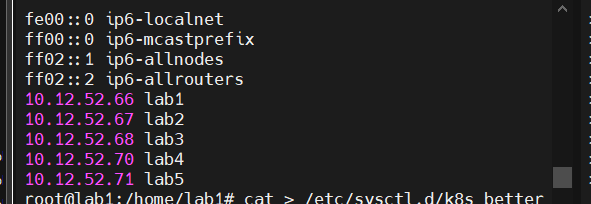

Pass bridged IPv4 traffic to the iptables chains

    cat > /etc/sysctl.d/k8s_better.conf << EOF
    net.bridge.bridge-nf-call-iptables=1
    net.bridge.bridge-nf-call-ip6tables=1
    net.ipv4.ip_forward=1
    vm.swappiness=0
    vm.overcommit_memory=1
    vm.panic_on_oom=0
    fs.inotify.max_user_instances=8192
    fs.inotify.max_user_watches=1048576
    fs.file-max=52706963
    fs.nr_open=52706963
    net.ipv6.conf.all.disable_ipv6=1
    net.netfilter.nf_conntrack_max=2310720
    EOF

Files under /etc/sysctl.d are read at boot; `sudo sysctl --system` applies them right away.

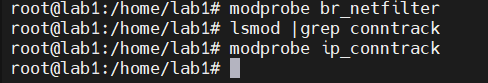

    modprobe br_netfilter
    lsmod | grep conntrack
    modprobe ip_conntrack
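To confirm that the kernel actually picked up the settings, each key in the file can be compared with its live value under /proc/sys. A minimal sketch, assuming simple `key=value` lines as in the file above; `check_sysctl_file` and its optional second argument (an alternative root directory, handy for testing) are hypothetical:

```shell
# Compare every key=value line of a sysctl file with the live value under /proc/sys.
# Prints one line per problem and returns non-zero if anything differs.
check_sysctl_file() {
    local conf=$1 root=${2:-/proc/sys} rc=0 key want path have
    while IFS='=' read -r key want; do
        key=$(printf '%s' "$key" | tr -d '[:space:]')
        want=$(printf '%s' "$want" | tr -d '[:space:]')
        case $key in ''|'#'*) continue ;; esac          # skip blanks and comments
        path=$root/$(printf '%s' "$key" | tr . /)       # net.ipv4.ip_forward -> net/ipv4/ip_forward
        if [ ! -r "$path" ]; then
            echo "missing: $key"
            rc=1
            continue
        fi
        have=$(tr -d '[:space:]' < "$path")
        if [ "$have" != "$want" ]; then
            echo "mismatch: $key want=$want have=$have"
            rc=1
        fi
    done < "$conf"
    return "$rc"
}
```

A "missing" line for the `net.bridge.*` keys usually means `br_netfilter` is not loaded yet.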

Synchronize time

    sudo apt-get update
    sudo apt-get install ntp                     # install the ntp service
    sudo systemctl enable ntp                    # start the service at boot
    sudo systemctl start ntp                     # start the service now
    sudo timedatectl set-timezone Asia/Shanghai  # change the time zone
    ntpq -p                                      # list NTP peers to confirm synchronization

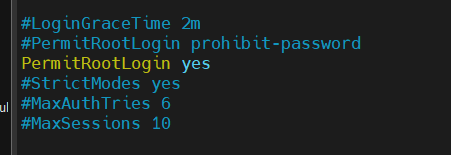

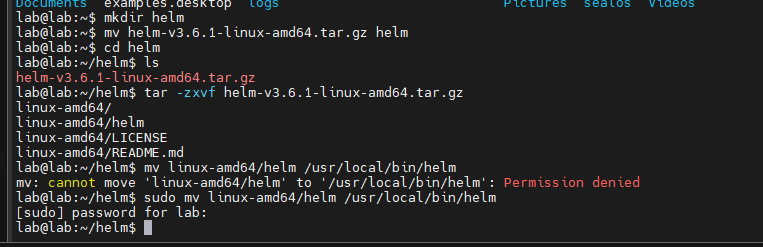

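Installing k8s with sealos

First, change the sshd configuration on every node other than the master, because by default ssh does not let the root user log in with a password. Open the file and set `PermitRootLogin` to `yes`; the possible values are:

    vim /etc/ssh/sshd_config

    PermitRootLogin yes                # root may log in, including with a password
    PermitRootLogin prohibit-password  # root may log in with keys only
    PermitRootLogin no                 # root may not log in

Then restart ssh.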
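When several nodes need the same change, editing by hand gets tedious. A minimal sketch of a scripted edit, assuming GNU sed; `set_permit_root_login` is a hypothetical helper and it ignores drop-in files that newer sshd packages may include:

```shell
# Set PermitRootLogin in an sshd_config-style file.
# The first existing directive (commented or not) is rewritten; if none exists, one is appended.
set_permit_root_login() {
    local file=$1 value=$2
    if grep -qE '^[[:space:]]*#?[[:space:]]*PermitRootLogin[[:space:]]' "$file"; then
        sed -E -i "0,/^[[:space:]]*#?[[:space:]]*PermitRootLogin[[:space:]].*/ s//PermitRootLogin ${value}/" "$file"
    else
        printf 'PermitRootLogin %s\n' "$value" >> "$file"
    fi
}
```

On a node this would be something like `set_permit_root_login /etc/ssh/sshd_config yes`, then `sshd -t` to validate the file and `systemctl restart ssh` to apply it.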

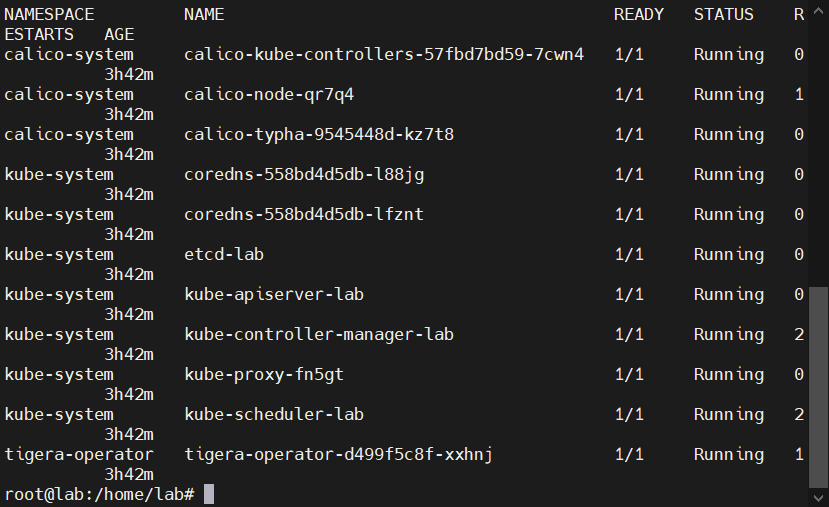

Then run one of the commands below to install k8s. The network plugin installed here is Calico, which is one of the images passed to `sealos run`.

    sealos run labring/kubernetes-docker:v1.23.8 labring/helm:v3.8.2 labring/calico:v3.24.1 --masters 10.12.52.66 --nodes 10.12.52.67,10.12.52.68 -p 123456

    sealos run registry.cn-shanghai.aliyuncs.com/labring/kubernetes:v1.23.8 registry.cn-shanghai.aliyuncs.com/labring/helm:v3.8.2 registry.cn-shanghai.aliyuncs.com/labring/calico:v3.24.1 \
        --masters 192.168.52.134

    sealos run registry.cn-shanghai.aliyuncs.com/labring/kubernetes:v1.21.2 registry.cn-shanghai.aliyuncs.com/labring/helm:v3.6.2 registry.cn-shanghai.aliyuncs.com/labring/calico:v3.20.1 --masters 192.168.52.134

Virtual machines

    sealos run labring/kubernetes-docker:v1.23.8 labring/helm:v3.8.2 labring/calico:v3.24.1 --masters 192.168.52.51 --nodes 192.168.52.52,192.168.52.53 -p 123456

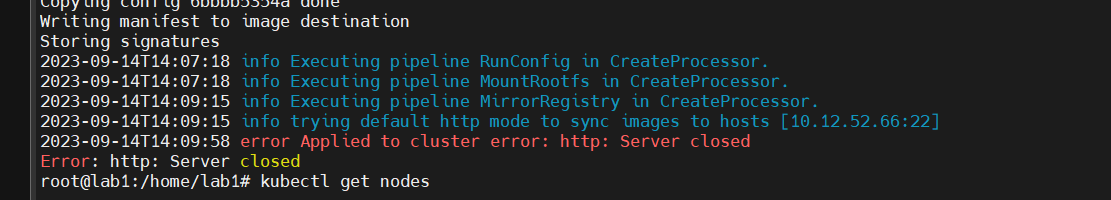

You may run into an error like this one; I simply kept retrying until it succeeded.
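Retrying by hand can be wrapped in a small loop. This is only a sketch of the "keep retrying" approach described above; `retry` is a hypothetical helper, and whether rerunning a given command is safe depends on the command itself:

```shell
# Run a command up to <attempts> times, sleeping <delay> seconds between failures.
retry() {
    local attempts=$1 delay=$2 n=1
    shift 2
    until "$@"; do
        if [ "$n" -ge "$attempts" ]; then
            echo "giving up after $n attempts: $*" >&2
            return 1
        fi
        n=$((n + 1))
        sleep "$delay"
    done
}
```

For example, `retry 5 10 sealos run ...` would try the install up to five times with ten seconds between attempts.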

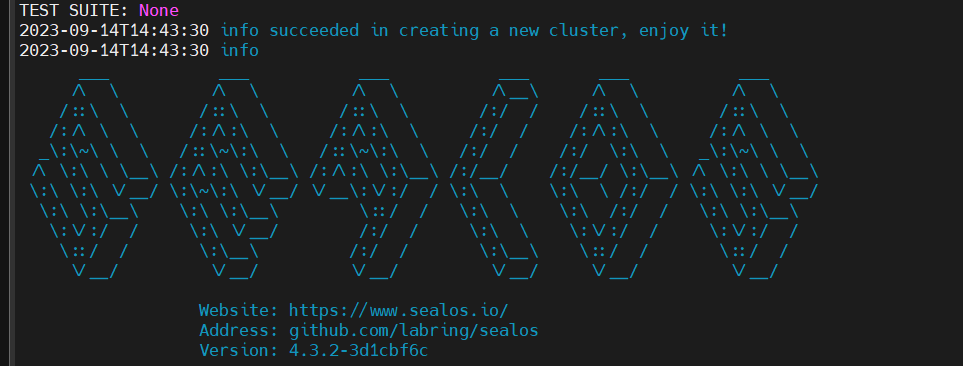

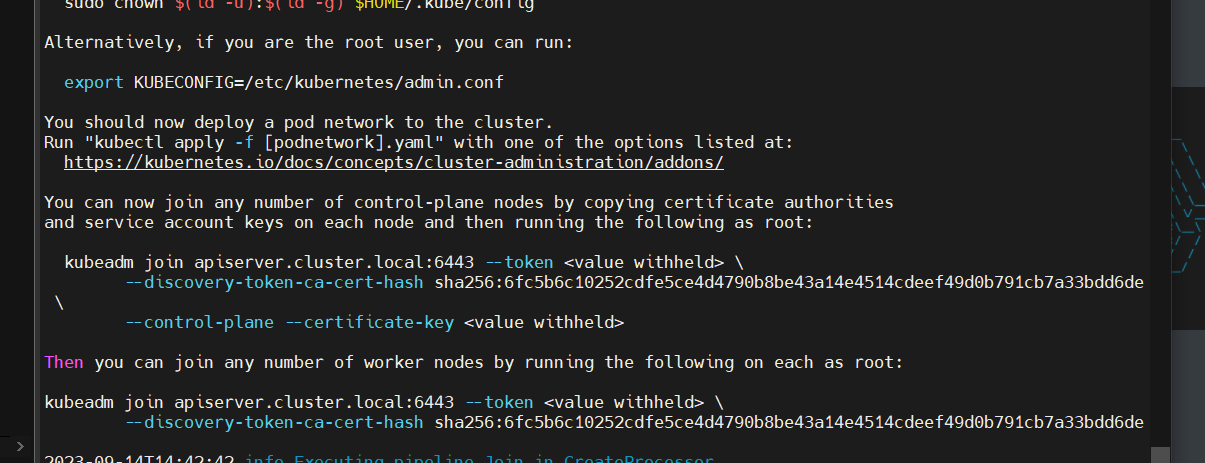

This is what a successful installation looks like.

Scrolling back through the installation log, you can see:

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    Alternatively, if you are the root user, you can run:

      export KUBECONFIG=/etc/kubernetes/admin.conf

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    You can now join any number of control-plane nodes by copying certificate authorities
    and service account keys on each node and then running the following as root:

      kubeadm join apiserver.cluster.local:6443 --token <value withheld> \
        --discovery-token-ca-cert-hash sha256:6fc5b6c10252cdfe5ce4d4790b8be43a14e4514cdeef49d0b791cb7a33bdd6de \
        --control-plane --certificate-key <value withheld>

    Then you can join any number of worker nodes by running the following on each as root:

    kubeadm join apiserver.cluster.local:6443 --token <value withheld> \
        --discovery-token-ca-cert-hash sha256:6fc5b6c10252cdfe5ce4d4790b8be43a14e4514cdeef49d0b791cb7a33bdd6de

With sealos there is no need to run kubeadm by hand, but I am recording the output anyway.

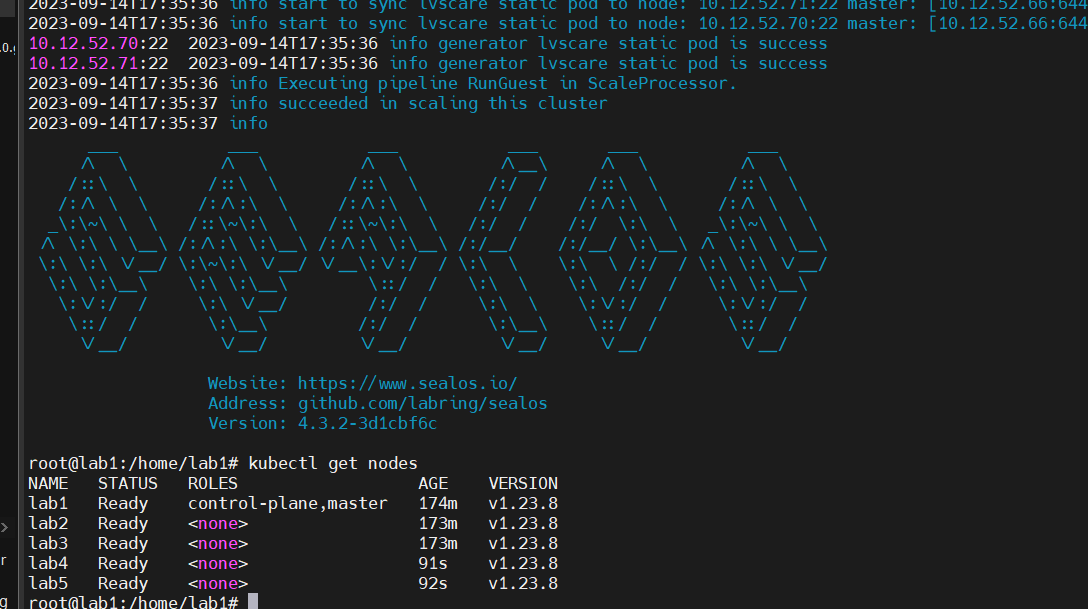

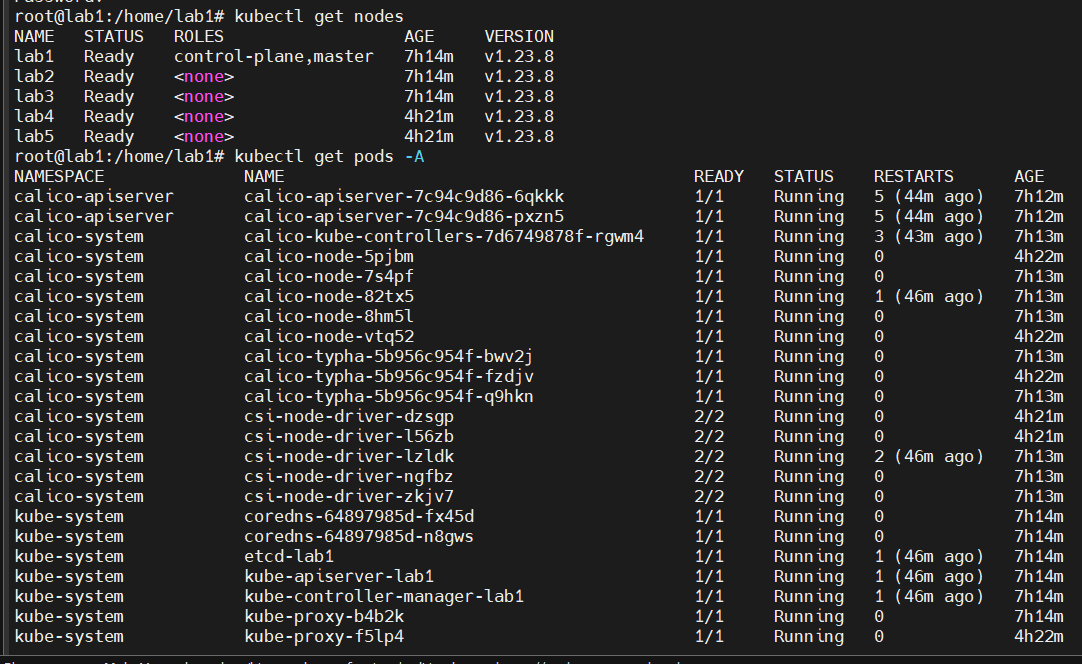

Adding nodes

    sealos add --nodes 10.12.52.70,10.12.52.71 -p 123456

If you get an error complaining about the port, specify port 22 explicitly, like this:

    sealos add --nodes 10.12.52.70:22,10.12.52.71:22 -p 123456
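For a longer node list, the `:22` suffix can be added mechanically. A minimal sketch for IPv4 addresses or hostnames; `with_ssh_port` is a hypothetical helper that leaves entries alone when they already carry a port:

```shell
# Append ":<port>" (default 22) to every entry of a comma-separated node list
# that does not already have a port.
with_ssh_port() {
    local nodes=$1 port=${2:-22}
    printf '%s\n' "$nodes" | awk -F, -v OFS=, -v port="$port" '{
        for (i = 1; i <= NF; i++) if ($i !~ /:/) $i = $i ":" port
        print
    }'
}
```

It could be used as `sealos add --nodes "$(with_ssh_port 10.12.52.70,10.12.52.71)" -p 123456`.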

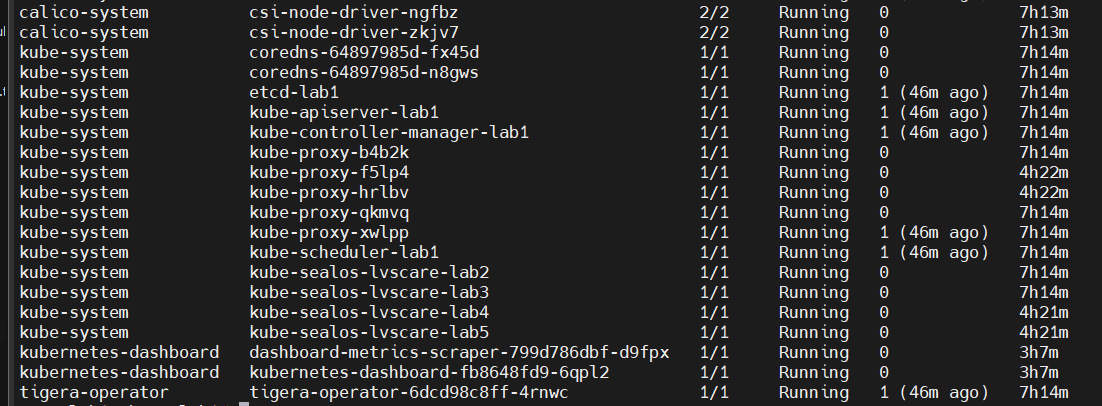

That completes the installation.

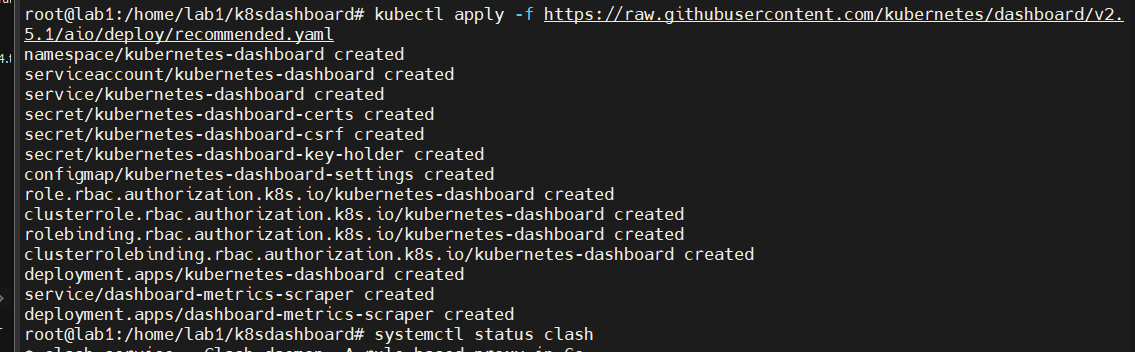

Deploying the dashboard

Download the dashboard release that matches your k8s version; here it is 2.5.1.

    kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.5.1/aio/deploy/recommended.yaml

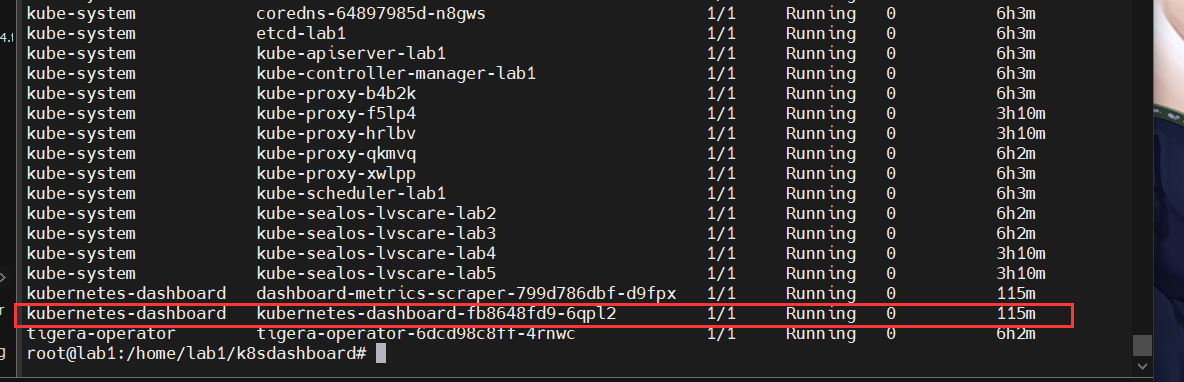

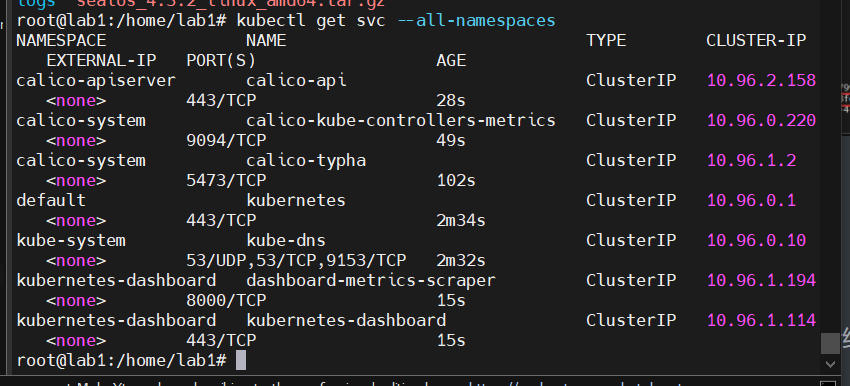

Check the result:

    kubectl get svc --all-namespaces

Virtual machines

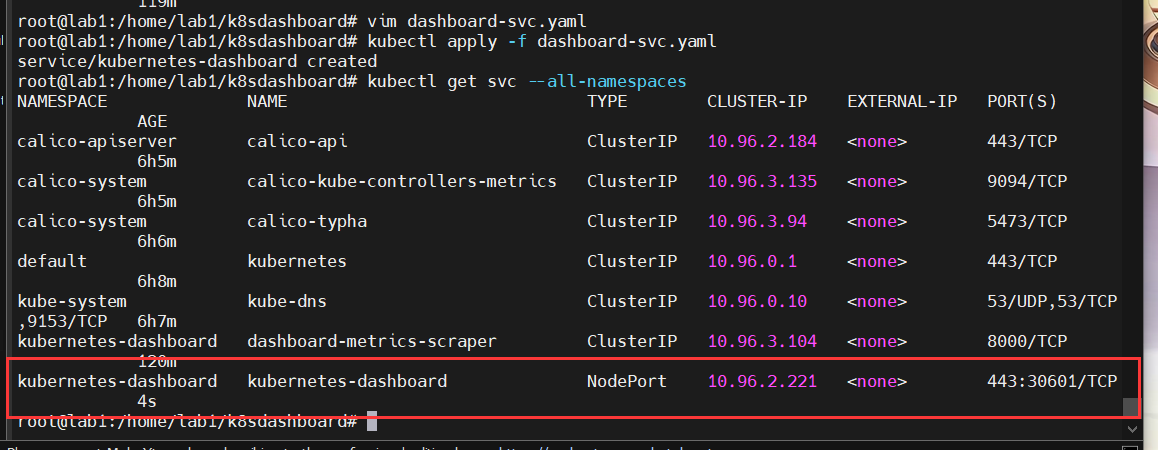

Delete the existing service, because it will be recreated as a NodePort service.

    kubectl delete service kubernetes-dashboard --namespace=kubernetes-dashboard

Create the configuration file dashboard-svc.yaml:

    kind: Service
    apiVersion: v1
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kubernetes-dashboard
    spec:
      type: NodePort
      ports:
        - port: 443
          targetPort: 8443
      selector:
        k8s-app: kubernetes-dashboard

After applying the file and listing the services again, the dashboard shows up with type NodePort:

    root@lab1:/home/lab1/k8sdashboard
    service/kubernetes-dashboard created
    root@lab1:/home/lab1/k8sdashboard
    NAMESPACE              NAME                              TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)                  AGE
    calico-apiserver       calico-api                        ClusterIP   10.96.2.184   <none>        443/TCP                  6h5m
    calico-system          calico-kube-controllers-metrics   ClusterIP   10.96.3.135   <none>        9094/TCP                 6h5m
    calico-system          calico-typha                      ClusterIP   10.96.3.94    <none>        5473/TCP                 6h6m
    default                kubernetes                        ClusterIP   10.96.0.1     <none>        443/TCP                  6h8m
    kube-system            kube-dns                          ClusterIP   10.96.0.10    <none>        53/UDP,53/TCP,9153/TCP   6h7m
    kubernetes-dashboard   dashboard-metrics-scraper         ClusterIP   10.96.3.104   <none>        8000/TCP                 120m
    kubernetes-dashboard   kubernetes-dashboard              NodePort    10.96.2.221   <none>        443:30601/TCP            4s
    root@lab1:/home/lab1/k8sdashboard
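In the `PORT(S)` column, `443:30601/TCP` means service port 443 is exposed on port 30601 of every node, so the dashboard is served over HTTPS at any node IP on that port. A small sketch that turns the column value into a URL; `dashboard_url` is a hypothetical helper:

```shell
# Build the dashboard URL from a node IP and a PORT(S) value such as "443:30601/TCP".
dashboard_url() {
    local node_ip=$1 ports=$2 node_port
    node_port=$(printf '%s\n' "$ports" | sed -n -E 's#^[0-9]+:([0-9]+)/[A-Za-z]+$#\1#p')
    if [ -z "$node_port" ]; then
        echo "no NodePort found in '$ports'" >&2
        return 1
    fi
    printf 'https://%s:%s\n' "$node_ip" "$node_port"
}
```

On the cluster, the port can also be read directly with `kubectl -n kubernetes-dashboard get svc kubernetes-dashboard -o jsonpath='{.spec.ports[0].nodePort}'`.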

Create an administrator account for kubernetes-dashboard. The contents of dashboard-svc-account.yaml are:

    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: admin-user
      namespace: kubernetes-dashboard
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: admin-user
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: cluster-admin
    subjects:
      - kind: ServiceAccount
        name: admin-user
        namespace: kubernetes-dashboard

Apply it:

    root@lab1:/home/lab1/k8sdashboard# vim dashboard-svc-account.yaml
    root@lab1:/home/lab1/k8sdashboard# kubectl apply -f dashboard-svc-account.yaml
    serviceaccount/admin-user created
    clusterrolebinding.rbac.authorization.k8s.io/admin-user created

Get the token

    root@lab1:/home/lab1/k8sdashboard
    admin-user-token-hcr5p
    [root@k8s-master01 dashboard]
    <value withheld>

Virtual machines

    root@lab1:/home/lab1/dashboard
    admin-user-token-2x5fq
    root@lab1:/home/lab1/dashboard
    <value withheld>

    root@lab1:/home/lab1/dashboard
    admin-user-token-vlpnx
    root@lab1:/home/lab1/dashboard
    <value withheld>
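The output above is a secret name followed by a bearer token, which is a JWT. A minimal sketch for inspecting such a token before pasting it into the login page; `jwt_payload` is a hypothetical helper, and the commented usage assumes the behaviour of k8s versions before 1.24, where a ServiceAccount automatically gets a token secret:

```shell
# Decode the payload (second dot-separated segment) of a JWT.
jwt_payload() {
    local seg
    seg=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
    # base64url omits padding; add "=" until the length is a multiple of 4
    while [ $(( ${#seg} % 4 )) -ne 0 ]; do
        seg="$seg="
    done
    printf '%s' "$seg" | base64 -d
}

# Assumed usage on the cluster (not executed here):
#   secret=$(kubectl -n kubernetes-dashboard get sa admin-user -o jsonpath='{.secrets[0].name}')
#   token=$(kubectl -n kubernetes-dashboard get secret "$secret" -o jsonpath='{.data.token}' | base64 -d)
#   jwt_payload "$token"
```

The decoded JSON should name the `kubernetes-dashboard` namespace and the `admin-user` service account; the token grants cluster-admin rights, so it should not be published.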

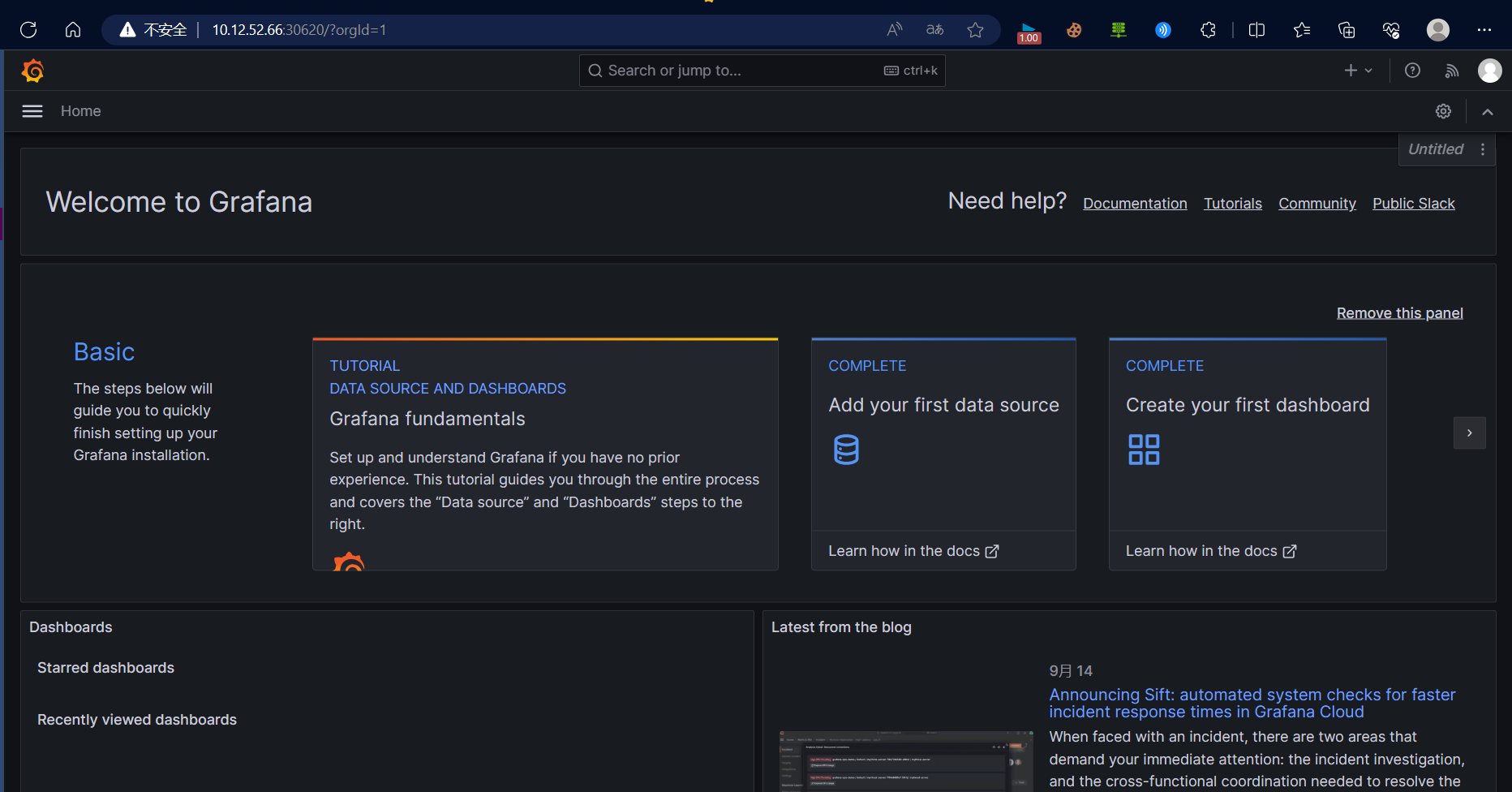

The dashboard can now be opened in a browser:

Kubernetes Dashboard

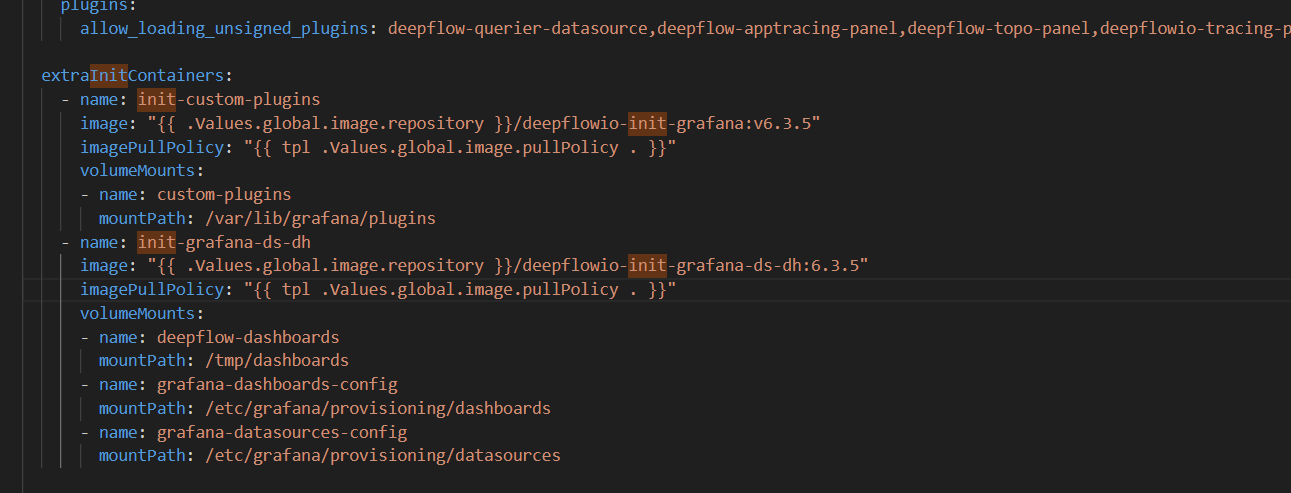

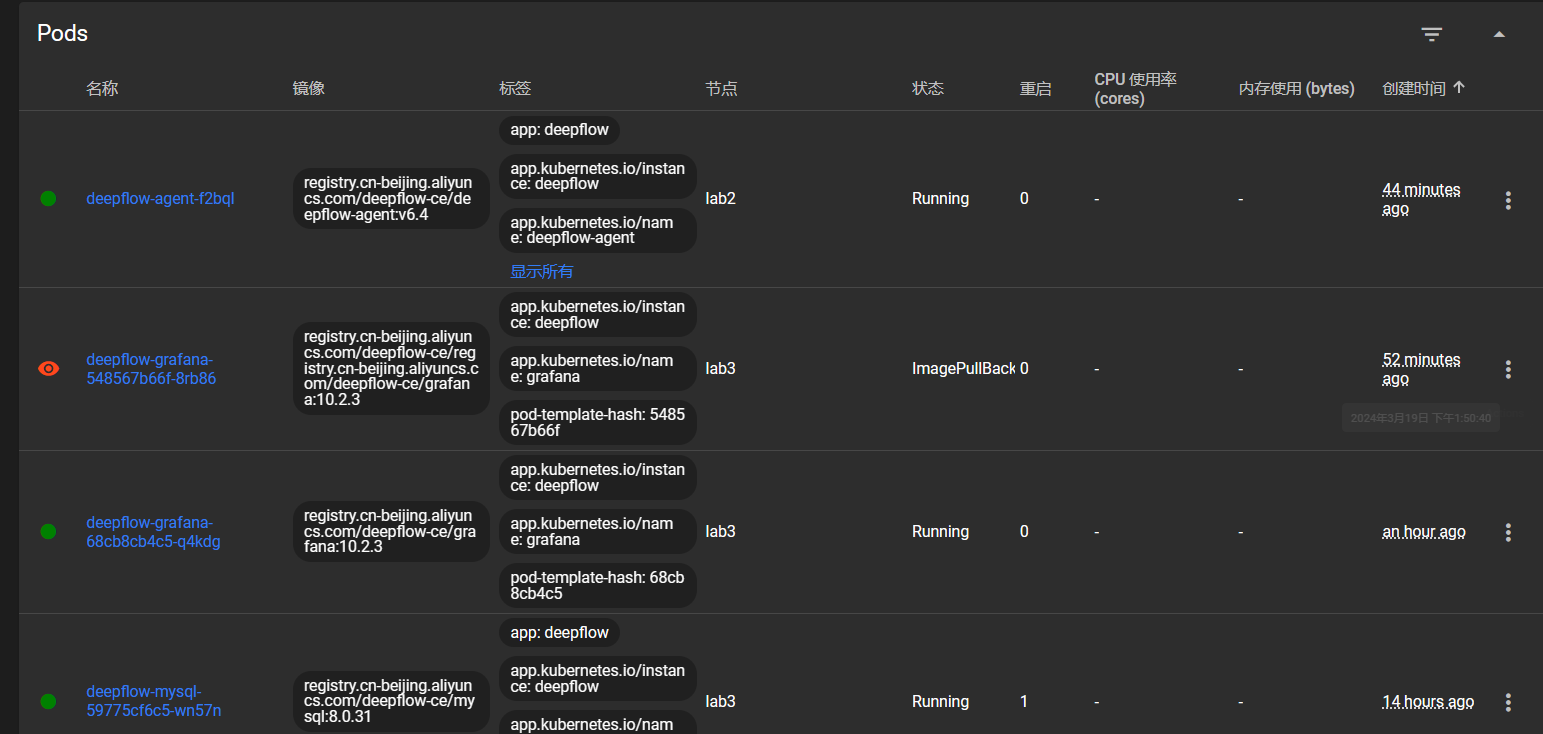

Deploying DeepFlow

Official documentation

All-in-One Quick Deployment | DeepFlow Docs

Reference

Deploying the DeepFlow community edition (ljyfree's blog, CSDN)

Add the Helm repository and write the custom values file:

    helm repo add deepflow https://deepflowio.github.io/deepflow
    cat << EOF > values-custom.yaml
    global:
      allInOneLocalStorage: true
      image:
        repository: registry.cn-beijing.aliyuncs.com/deepflow-ce
    grafana:
      image:
        repository: registry.cn-beijing.aliyuncs.com/deepflow-ce/grafana
    EOF

Adding the repository prints:

    root@lab1:/home/lab1
    "deepflow" has been added to your repositories

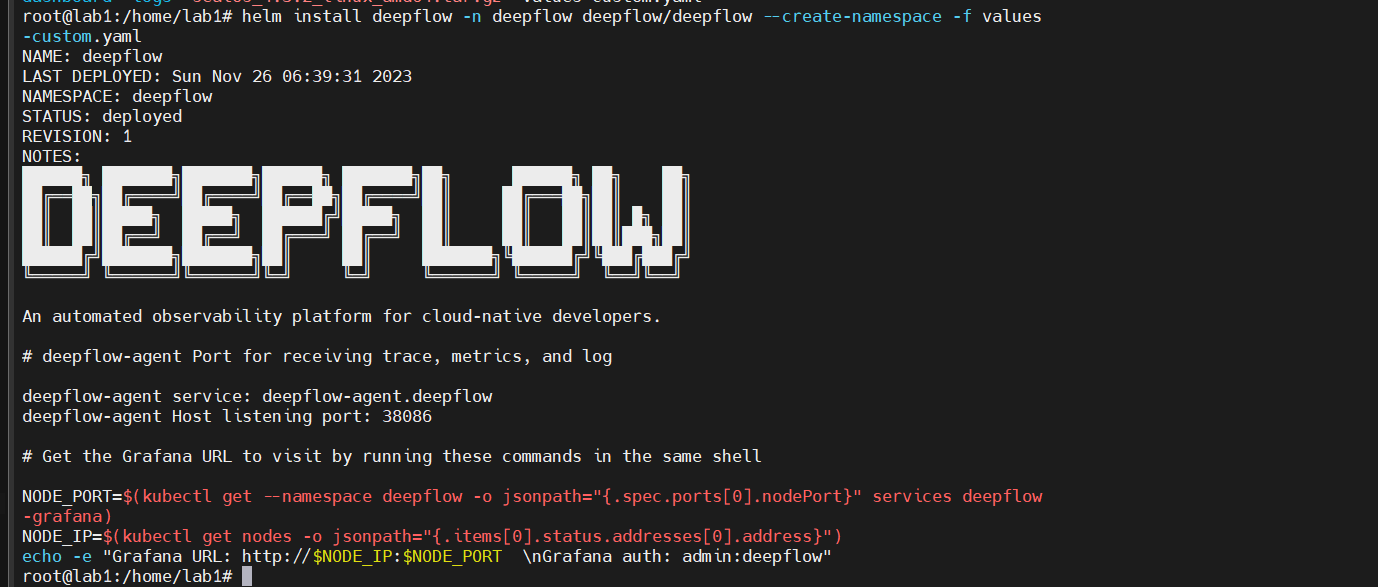

    helm install deepflow -n deepflow deepflow/deepflow --create-namespace -f values-custom.yaml

Virtual machines

    root@lab1:/home/lab1
    NAME: deepflow
    LAST DEPLOYED: Sun Nov 26 06:39:31 2023
    NAMESPACE: deepflow
    STATUS: deployed
    REVISION: 1
    NOTES:
    ██████╗ ███████╗███████╗██████╗ ███████╗██╗      ██████╗ ██╗    ██╗
    ██╔══██╗██╔════╝██╔════╝██╔══██╗██╔════╝██║     ██╔═══██╗██║    ██║
    ██║  ██║█████╗  █████╗  ██████╔╝█████╗  ██║     ██║   ██║██║ █╗ ██║
    ██║  ██║██╔══╝  ██╔══╝  ██╔═══╝ ██╔══╝  ██║     ██║   ██║██║███╗██║
    ██████╔╝███████╗███████╗██║     ██║     ███████╗╚██████╔╝╚███╔███╔╝
    ╚═════╝ ╚══════╝╚══════╝╚═╝     ╚═╝     ╚══════╝ ╚═════╝  ╚══╝╚══╝

    An automated observability platform for cloud-native developers.

    deepflow-agent service: deepflow-agent.deepflow
    deepflow-agent Host listening port: 38086

    NODE_PORT=$(kubectl get --namespace deepflow -o jsonpath="{.spec.ports[0].nodePort}" services deepflow-grafana)
    NODE_IP=$(kubectl get nodes -o jsonpath="{.items[0].status.addresses[0].address}")
    echo -e "Grafana URL: http://$NODE_IP:$NODE_PORT \nGrafana auth: admin:deepflow"

Physical machines:

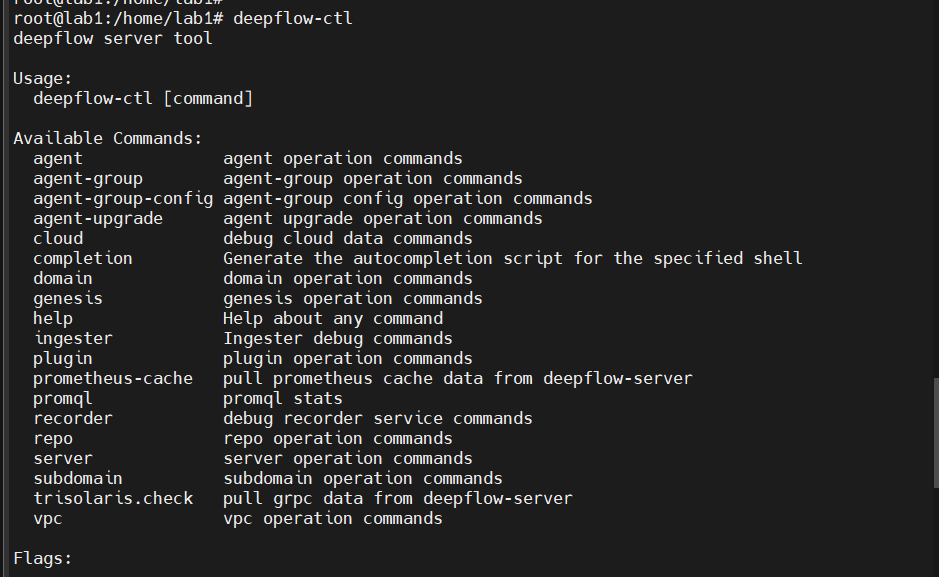

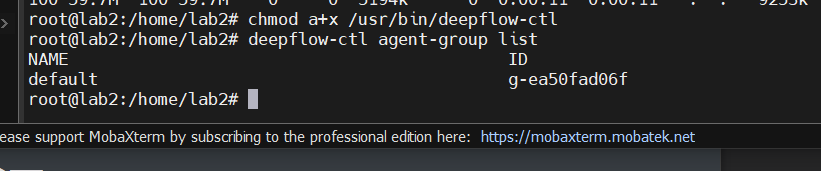

Installing deepflow-ctl

    systemctl start clash
    export https_proxy=http://127.0.0.1:7890 http_proxy=http://127.0.0.1:7890 all_proxy=socks5://127.0.0.1:7890

    curl -o /usr/bin/deepflow-ctl https://deepflow-ce.oss-cn-beijing.aliyuncs.com/bin/ctl/stable/linux/$(arch | sed 's|x86_64|amd64|' | sed 's|aarch64|arm64|')/deepflow-ctl
    chmod a+x /usr/bin/deepflow-ctl
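The two `sed` calls in the download URL map the machine name reported by `arch` to the directory names used on the download server (`x86_64` to `amd64`, `aarch64` to `arm64`); any other value passes through unchanged and produces a URL that presumably does not exist. A sketch that makes the mapping explicit and rejects anything else; `deepflow_ctl_url` is a hypothetical helper:

```shell
# Print the deepflow-ctl download URL for a machine type (default: this host).
deepflow_ctl_url() {
    local machine=${1:-$(uname -m)} arch
    case $machine in
        x86_64)  arch=amd64 ;;
        aarch64) arch=arm64 ;;
        *) echo "unsupported architecture: $machine" >&2; return 1 ;;
    esac
    printf 'https://deepflow-ce.oss-cn-beijing.aliyuncs.com/bin/ctl/stable/linux/%s/deepflow-ctl\n' "$arch"
}
```

It could be combined with curl as `curl -fL -o /usr/bin/deepflow-ctl "$(deepflow_ctl_url)"`, where `-f` makes curl fail on an HTTP error instead of saving the error page as the binary.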

Run it to check that the installation worked.

Output like this means it succeeded:

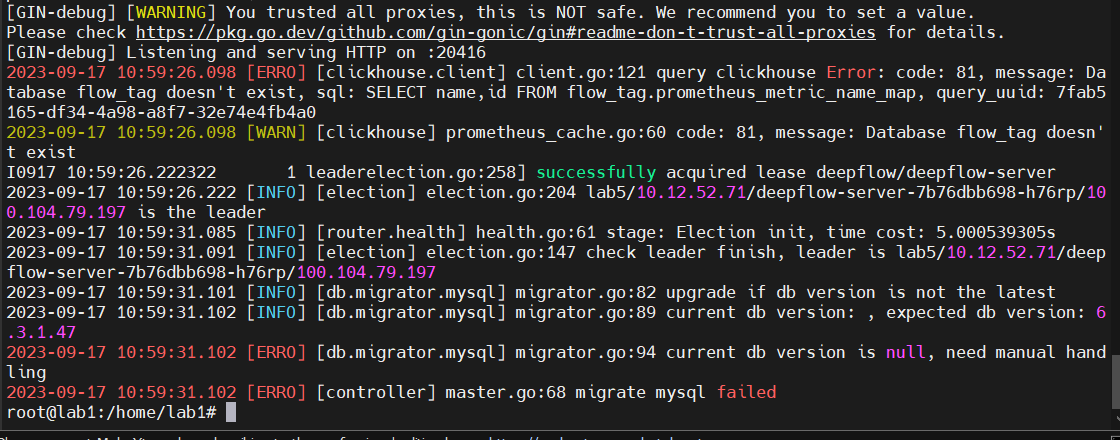

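At this point the server pod never came up. The logs pointed to a problem with the ClickHouse database.

Print the logs:

    kubectl logs deepflow-server-66666d6d7f-psvq5 -n deepflow

However, the ClickHouse pod itself was running fine.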

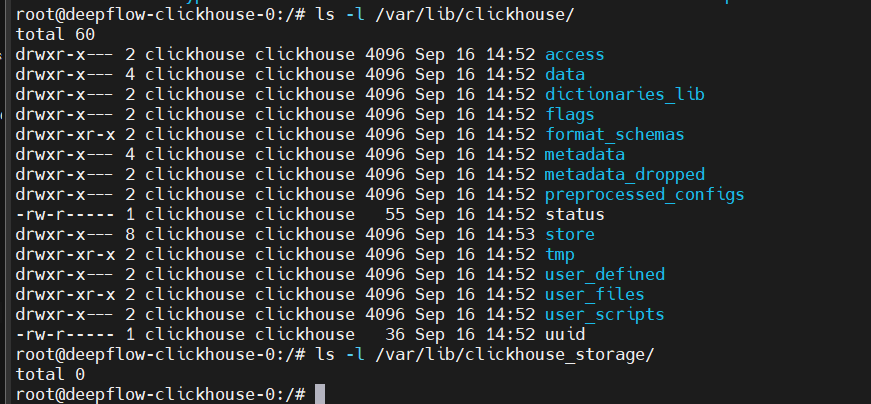

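Enter the ClickHouse container to take a look:

    kubectl exec -it deepflow-clickhouse-0 -n deepflow -- /bin/bash

Check the contents and permissions of the storage directories

Inside the ClickHouse container, check the contents and permissions of /var/lib/clickhouse/ and /var/lib/clickhouse_storage/.

    ls -l /var/lib/clickhouse/
    ls -l /var/lib/clickhouse_storage/

Make sure the files and directories are owned by the clickhouse user.

View the ClickHouse log

    cat /var/log/clickhouse-server/clickhouse-server.log

Check the ClickHouse databases

    kubectl exec -it deepflow-clickhouse-0 -n deepflow -- /bin/bash

Inside the ClickHouse container, use the ClickHouse CLI (`clickhouse-client`) to query the databases.

I have no screenshot of this step. The database list did not include flow_tag, so I created it:

    CREATE DATABASE flow_tag;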

Next I wanted to fix the MySQL problem. Searching for the error message turned up an issue describing exactly the same symptom:

[BUG] pod deepflow-server-XXX CrashLoopBackOff · Issue #3584 · deepflowio/deepflow (github.com)

Generally, mysql initialization fails due to poor disk performance or deepflow database table initialization fails. Try to log in mysql with root:deepflow to see if it can log in successfully. And add the following fields in the values - the custom files, update deepflow - server, if not successful landing, the empty mysql data directory/opt/deepflow/data/deepflow - mysql, Then add the following field to values-custom and update

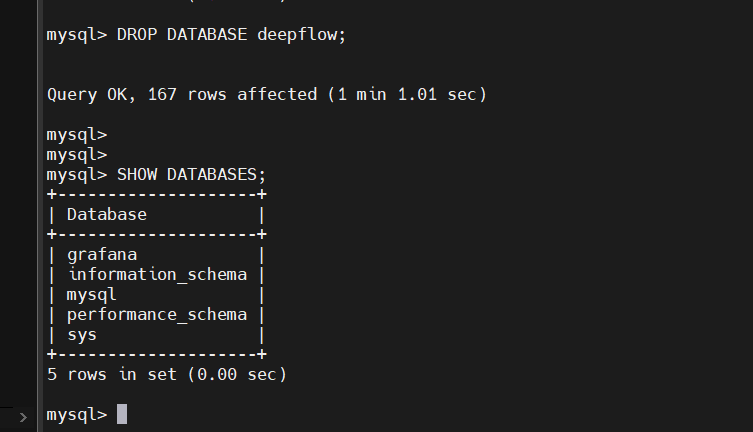

it.root:deepflow 尝试登陆 MySQL,看看是否能登陆成功,如果能登陆成功,则drop掉deepflow database,并在values-custom文件中添加如下字段,更新deepflow-server,如果不能成功登陆,则清空mysql数据目录 /opt/deepflow/data/deepflow-mysql,然后在values-custom中添加如下字段,并更新

    mysql:
      livenessProbe:
        failureThreshold: 20
      readinessProbe:
        failureThreshold: 20

    server:
      livenessProbe:
        failureThreshold: 20
      readinessProbe:
        failureThreshold: 20

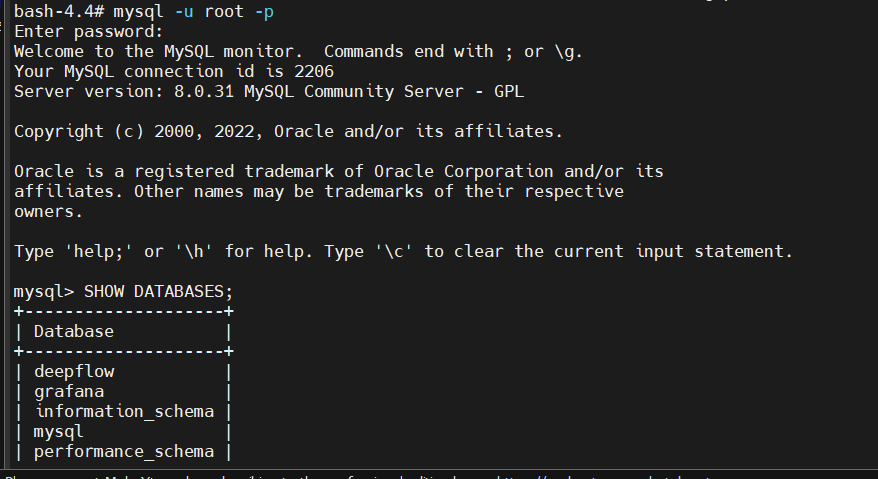

I tried this approach.

First, enter the MySQL pod:

    kubectl exec -it deepflow-mysql-6c97f94d8f-rbgnl -n deepflow -- /bin/bash

Then connect to the database with root:deepflow:

    mysql -u root -pdeepflow

Then drop the deepflow database (`DROP DATABASE deepflow;`).

Then exit and add the following fields to the values-custom file:

    mysql:
      livenessProbe:
        failureThreshold: 20
      readinessProbe:
        failureThreshold: 20

    server:
      livenessProbe:
        failureThreshold: 20
      readinessProbe:
        failureThreshold: 20

Then upgrade the release, which restarts the server:

    helm upgrade deepflow -f values-custom.yaml -n deepflow deepflow/deepflow

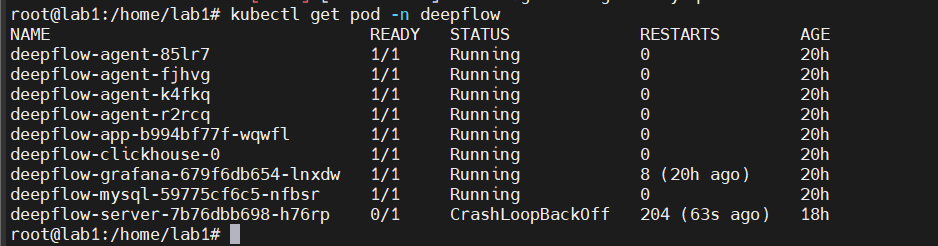

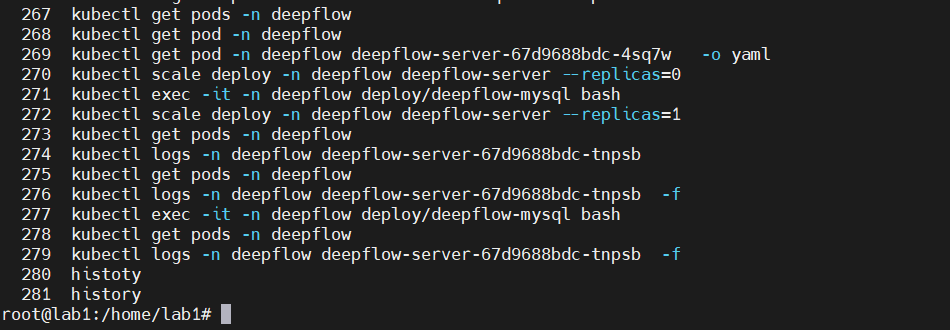

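I tried this many times and it failed every time, so I asked one of the developers to debug it remotely. He said he uses the same method, but the way we restarted the server differed slightly, which may have been the cause. The image below shows his commands.

I reproduce them here.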

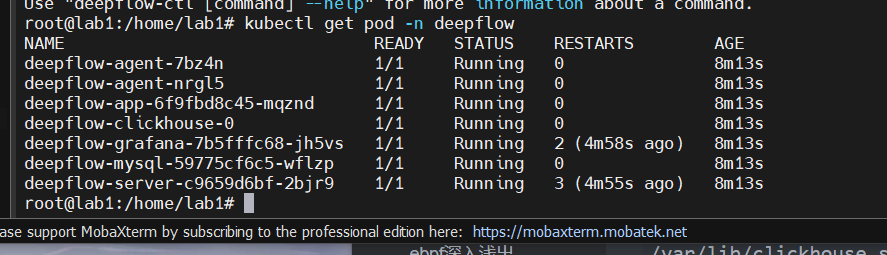

First, look at the pods:

    kubectl get pods -n deepflow

Next, inspect the server pod, printed as YAML:

    kubectl get pod deepflow-server-67d9688bdc-tnpsb -n deepflow -o yaml

This showed the same problem, so first stop the server pod by scaling the deployment to zero:

    kubectl scale deploy -n deepflow deepflow-server --replicas=0

Then enter the MySQL pod:

    kubectl exec -it deepflow-mysql-6c97f94d8f-rbgnl -n deepflow -- /bin/bash

Again, drop the deepflow database.

Then exit and start the server again:

    kubectl scale deploy -n deepflow deepflow-server --replicas=1

Then comes a long wait:

    kubectl logs -n deepflow deepflow-server-67d9688bdc-tnpsb -f

After that it worked.
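The sequence that finally worked is: stop the server, drop the database, start the server, wait. A rough sketch of that sequence as a script, with a dry-run mode so the commands can be reviewed before anything is touched. The `run` and `reset_deepflow_db` names are hypothetical, and the sketch assumes the MySQL deployment is called `deepflow-mysql` and still uses the root:deepflow credentials mentioned above:

```shell
# Print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# Stop deepflow-server, drop the deepflow database, start the server again and wait for it.
reset_deepflow_db() {
    local ns=${1:-deepflow}
    run kubectl scale deploy -n "$ns" deepflow-server --replicas=0
    run kubectl exec -n "$ns" deploy/deepflow-mysql -- mysql -uroot -pdeepflow -e 'DROP DATABASE IF EXISTS deepflow'
    run kubectl scale deploy -n "$ns" deepflow-server --replicas=1
    run kubectl rollout status -n "$ns" deploy/deepflow-server
}
```

`DRY_RUN=1 reset_deepflow_db` only prints the four commands; dropping the database is destructive, so the real run belongs on a cluster whose data can be thrown away.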

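Removing DeepFlow

Use `helm list` to see the current Helm releases.

Confirm that a release named deepflow appears in the deepflow namespace.

Use `helm uninstall` to delete the release:

    helm uninstall deepflow -n deepflow

Delete the deepflow namespace:

    kubectl delete namespace deepflow

List all resources in the namespace:

The following command lists every resource left in the namespace:

    kubectl api-resources --verbs=list --namespaced -o name | xargs -n 1 kubectl get --show-kind --ignore-not-found -n deepflow

Delete leftover resources by hand:

Based on the output of the command above, delete whatever is left in the namespace. For example, if a ConfigMap was not removed, delete it with:

    kubectl delete configmap <configmap-name> -n <your-namespace-name>

Remove the namespace finalizers:

If the namespace still is not deleted after all resources are cleaned up, a finalizer on the namespace object is probably blocking it. To get rid of it, clear the namespace's finalizers.

The following command clears them through the finalize endpoint of the API:

    kubectl get namespace deepflow -o json | jq '.spec.finalizers=[]' | kubectl replace --raw "/api/v1/namespaces/deepflow/finalize" -f -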

Force-delete pods stuck in the Terminating state:

    kubectl delete pod -n deepflow --force --grace-period=0 deepflow-agent-w6nzw
    kubectl delete pod -n deepflow --force --grace-period=0 deepflow-app-7f69b47dd6-nk928
    kubectl delete pod -n deepflow --force --grace-period=0 deepflow-clickhouse-0
    kubectl delete pod -n deepflow --force --grace-period=0 deepflow-grafana-84cdcdf594-8f7sg
    kubectl delete pod -n deepflow --force --grace-period=0 deepflow-mysql-6fc8c8cf85-f78sp
    kubectl delete pod -n deepflow --force --grace-period=0 deepflow-server-bb9699c94-tcgcx
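Pod names carry random suffixes, so the list above has to be retyped for every cluster. A small sketch that picks the stuck pods out of the regular `kubectl get pods` table instead; `terminating_pods` is a hypothetical helper that relies on the default column order (NAME, READY, STATUS, ...):

```shell
# Read `kubectl get pods` output on stdin and print the names of pods whose STATUS is Terminating.
terminating_pods() {
    awk 'NR > 1 && $3 == "Terminating" { print $1 }'
}
```

A possible use, assuming GNU xargs for the `-r` flag: `kubectl get pods -n deepflow | terminating_pods | xargs -r kubectl delete pod -n deepflow --force --grace-period=0`.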

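Learning resources

eBPF official site: eBPF Applications Landscape

Hands-on introduction to eBPF: https://www.zadmei.com/ehxjsysz.html

Highly automated observability in practice, based on eBPF: DeepFlow

DeepFlow advanced configuration

Server: Server Advanced Configuration | DeepFlow Docs

Agent: Agent Advanced Configuration | DeepFlow Docs

Linux performance tuning in practice: study notes (reposted table of contents), fiab13, cnblogs.com

Learning eBPF

eBPF in depth: [BPF Primer Series 3] Setting up a BPF environment | 深入浅出 eBPF

eBPF core technology and practice: https://www.zadmei.com/ehxjsysz.html

Installing libbpf on Ubuntu 20.04 (mfc42d, ChinaUnix blog)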

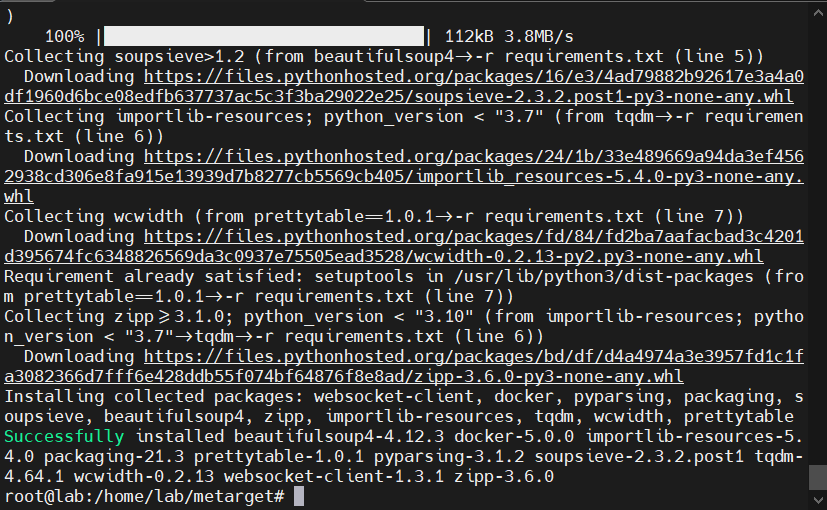

Setting up an eBPF development environment (not actually needed inside the Docker container he provided)

Note: everything from here down to the divider can be ignored.

References:

Installing BCC on Ubuntu (骇人的籽, cnblogs.com)

Tutorial: installing libbpf on Ubuntu (Chientol's blog, CSDN)

Installing bcc on Ubuntu 20.04 (JD怕秃头's blog, CSDN)

I mainly followed the first link.

In it, the LLVM 3.7 packages could not be found on this system, so I installed the unversioned packages instead:

    root@mobb-iMac:/home/mobb/bcc/build
    > libllvm3.7 llvm-3.7-dev libclang-3.7-dev python zlib1g-dev libelf-dev
    Reading package lists... Done
    Building dependency tree
    Reading state information... Done
    Note, selecting 'python-is-python2' instead of 'python'
    E: Unable to locate package libllvm3.7
    E: Couldn't find any package by glob 'libllvm3.7'
    E: Couldn't find any package by regex 'libllvm3.7'
    E: Unable to locate package llvm-3.7-dev
    E: Couldn't find any package by glob 'llvm-3.7-dev'
    E: Couldn't find any package by regex 'llvm-3.7-dev'
    E: Unable to locate package libclang-3.7-dev
    E: Couldn't find any package by glob 'libclang-3.7-dev'
    E: Couldn't find any package by regex 'libclang-3.7-dev'

    sudo apt-get -y install bison build-essential cmake flex git libedit-dev \
      llvm-dev libclang-dev python zlib1g-dev libelf-dev

2023.10.04: I followed another blog post. I had run into many problems while building and running from source, and somehow ended up here:

[Building bcc from source on Ubuntu 18.04 LTS (CSDN blog)](https://blog.csdn.net/weixin_44395686/article/details/106712543)

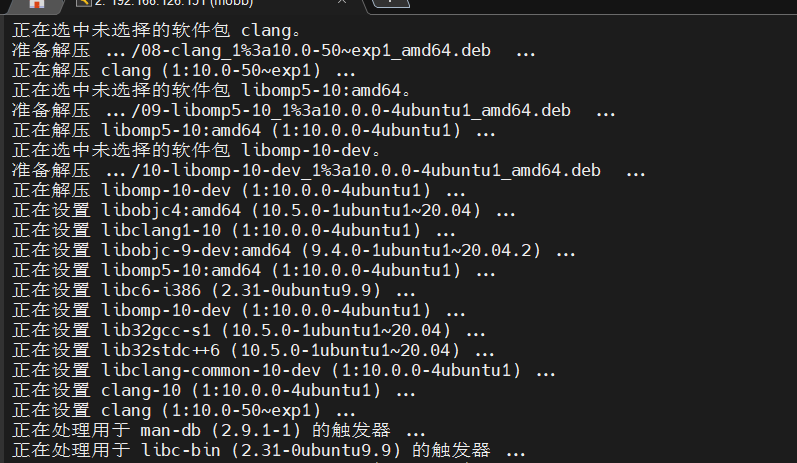

While following the second blog post, installing clang failed with this error:

Some packages could not be installed. If you are using the unstable distribution, this may be ...

After a long search I finally found the fix here:

https://blog.csdn.net/qq_33406883/article/details/100971183

Remember to reboot afterwards.

Then run it again:

    root@k8smaster:/home/mobb/eBPF_test
    clang version 10.0.0-4ubuntu1
    Target: x86_64-pc-linux-gnu
    Thread model: posix
    InstalledDir: /usr/bin
    Found candidate GCC installation: /usr/bin/../lib/gcc/x86_64-linux-gnu/9
    Found candidate GCC installation: /usr/lib/gcc/x86_64-linux-gnu/9
    Selected GCC installation: /usr/bin/../lib/gcc/x86_64-linux-gnu/9
    Candidate multilib: .;@m64
    Candidate multilib: 32;@m32
    Candidate multilib: x32;@mx32
    Selected multilib: .;@m64

Install the following dependencies one by one:

    sudo apt install llvm
    sudo apt install pkg-config
    sudo apt install m4
    sudo apt install libelf-dev
    sudo apt install libpcap-dev

Once they are all installed, download the libbpf release archive and unpack it.

Then enter the unpacked directory and build:

    root@k8smaster:/home/mobb/libbpf-1.2.2/src
      MKDIR    staticobjs
      CC       staticobjs/bpf.o
      CC       staticobjs/btf.o
      CC       staticobjs/libbpf.o
      CC       staticobjs/libbpf_errno.o
      CC       staticobjs/netlink.o
      CC       staticobjs/nlattr.o
      CC       staticobjs/str_error.o
      CC       staticobjs/libbpf_probes.o
      CC       staticobjs/bpf_prog_linfo.o
      CC       staticobjs/btf_dump.o
      CC       staticobjs/hashmap.o
      CC       staticobjs/ringbuf.o
      CC       staticobjs/strset.o
      CC       staticobjs/linker.o
      CC       staticobjs/gen_loader.o
      CC       staticobjs/relo_core.o
      CC       staticobjs/usdt.o
      CC       staticobjs/zip.o
      AR       libbpf.a
      MKDIR    sharedobjs
      CC       sharedobjs/bpf.o
      CC       sharedobjs/btf.o
      CC       sharedobjs/libbpf.o
      CC       sharedobjs/libbpf_errno.o
      CC       sharedobjs/netlink.o
      CC       sharedobjs/nlattr.o
      CC       sharedobjs/str_error.o
      CC       sharedobjs/libbpf_probes.o
      CC       sharedobjs/bpf_prog_linfo.o
      CC       sharedobjs/btf_dump.o
      CC       sharedobjs/hashmap.o
      CC       sharedobjs/ringbuf.o
      CC       sharedobjs/strset.o
      CC       sharedobjs/linker.o
      CC       sharedobjs/gen_loader.o
      CC       sharedobjs/relo_core.o
      CC       sharedobjs/usdt.o
      CC       sharedobjs/zip.o
      CC       libbpf.so.1.2.2

Although the build succeeded here, running the code still failed, so I tried this command once more:

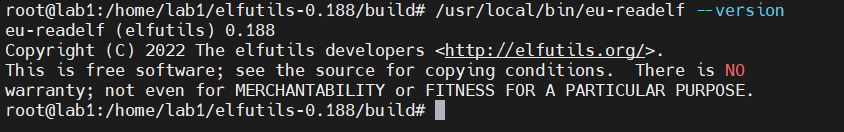

    root@k8smaster:/home/mobb
    Reading package lists... Done
    Building dependency tree
    Reading state information... Done
    make is already the newest version (4.2.1-1.2).
    make set to manually installed.
    linux-headers-5.15.0-79-generic is already the newest version (5.15.0-79.86~20.04.2).
    linux-headers-5.15.0-79-generic set to manually installed.
    The following packages were automatically installed and are no longer required:
      libcbor0.6 libfido2-1
    Use 'sudo apt autoremove' to remove them.
    The following additional packages will be installed:
      binfmt-support clang-10 ieee-data lib32gcc-s1 lib32stdc++6 libbpf0 libbpfcc libc6-i386 libclang-common-10-dev libclang-cpp10 libclang1-10 libdw1 libelf1 libelf1:i386 libllvm10 libncurses-dev libobjc-9-dev libobjc4 libomp-10-dev libomp5-10 libpfm4 libtinfo-dev libz3-4 libz3-dev
      linux-hwe-5.15-tools-5.15.0-79 linux-tools-common llvm-10 llvm-10-dev llvm-10-runtime llvm-10-tools llvm-runtime python3-bpfcc python3-netaddr python3-pygments
    Suggested packages:
      clang-10-doc ncurses-doc libomp-10-doc llvm-10-doc ipython3 python-netaddr-docs python-pygments-doc ttf-bitstream-vera
    The following NEW packages will be installed:
      binfmt-support bpfcc-tools clang clang-10 ieee-data lib32gcc-s1 lib32stdc++6 libbpf-dev libbpf0 libbpfcc libbpfcc-dev libc6-i386 libclang-common-10-dev libclang-cpp10 libclang1-10 libelf-dev libllvm10 libncurses-dev libobjc-9-dev libobjc4 libomp-10-dev
      libomp5-10 libpfm4 libtinfo-dev libz3-4 libz3-dev linux-hwe-5.15-tools-5.15.0-79 linux-tools-5.15.0-79-generic linux-tools-common llvm llvm-10 llvm-10-dev llvm-10-runtime llvm-10-tools llvm-runtime python3-bpfcc python3-netaddr python3-pygments
    The following packages will be upgraded:
      libdw1 libelf1 libelf1:i386
    3 upgraded, 38 newly installed, 0 to remove and 156 not upgraded.
    Need to get 107 MB/107 MB of archives.
    After this operation, 602 MB of additional disk space will be used.
    Get:1 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/universe amd64 binfmt-support amd64 2.2.0-2 [58.2 kB]
    Get:2 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/universe amd64 libbpfcc amd64 0.12.0-2 [14.9 MB]
    ...
    Get:38 http://security.ubuntu.com/ubuntu focal-security/main amd64 python3-pygments all 2.3.1+dfsg-1ubuntu2.2 [579 kB]
    Fetched 107 MB in 14s (7,800 kB/s)
    ...
    Setting up bpfcc-tools (0.12.0-2) ...
    Setting up libbpfcc-dev (0.12.0-2) ...
    Setting up llvm (1:10.0-50~exp1) ...
    Setting up clang-10 (1:10.0.0-4ubuntu1) ...
    Setting up clang (1:10.0-50~exp1) ...
    Processing triggers for systemd (245.4-4ubuntu3.20) ...
    Processing triggers for man-db (2.9.1-1) ...
    Processing triggers for libc-bin (2.31-0ubuntu9.9) ...

Check whether BPF support is enabled in the kernel configuration:

    cat /boot/config-5.15.0-79-generic | grep BPF
    CONFIG_BPF=y
    CONFIG_HAVE_EBPF_JIT=y
    CONFIG_ARCH_WANT_DEFAULT_BPF_JIT=y
    CONFIG_BPF_SYSCALL=y
    CONFIG_BPF_JIT=y
    CONFIG_BPF_JIT_ALWAYS_ON=y
    CONFIG_BPF_JIT_DEFAULT_ON=y
    CONFIG_BPF_UNPRIV_DEFAULT_OFF=y
    CONFIG_BPF_LSM=y
    CONFIG_CGROUP_BPF=y
    CONFIG_IPV6_SEG6_BPF=y
    CONFIG_NETFILTER_XT_MATCH_BPF=m
    CONFIG_BPFILTER=y
    CONFIG_BPFILTER_UMH=m
    CONFIG_NET_CLS_BPF=m
    CONFIG_NET_ACT_BPF=m
    CONFIG_BPF_STREAM_PARSER=y
    CONFIG_LWTUNNEL_BPF=y
    CONFIG_BPF_EVENTS=y
    CONFIG_BPF_KPROBE_OVERRIDE=y
    CONFIG_TEST_BPF=m
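The same check can be scripted so that it prints only the options that are missing. This is a minimal sketch under my own assumptions: the function name `missing_bpf_options` is made up, and the four options it looks for are an illustrative selection, not an authoritative list of what bcc requires.

```bash
# Hypothetical helper: print each option from a short, illustrative list
# that is NOT enabled (=y or =m) in the given kernel config file.
missing_bpf_options() {
    config_file=$1
    for opt in CONFIG_BPF CONFIG_BPF_SYSCALL CONFIG_BPF_JIT CONFIG_BPF_EVENTS; do
        # -E: extended regex, -q: quiet; the whole line must be OPTION=y or OPTION=m
        if ! grep -Eq "^${opt}=(y|m)$" "$config_file"; then
            echo "$opt"
        fi
    done
}
```

Running `missing_bpf_options "/boot/config-$(uname -r)"` prints nothing when all four options are enabled.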

The options all show up here, but for some reason the program still would not run and reported this error:

ImportError: cannot import name BPF · Issue #2278 · iovisor/bcc (github.com)

In the end I installed everything again:

    root@k8smaster:/home/mobb
    Reading package lists... Done
    Building dependency tree
    Reading state information... Done
    make is already the newest version (4.2.1-1.2).
    make set to manually installed.
    linux-headers-5.15.0-79-generic is already the newest version (5.15.0-79.86~20.04.2).
    linux-headers-5.15.0-79-generic set to manually installed.
    The following packages were automatically installed and are no longer required:
      libcbor0.6 libfido2-1
    Use 'sudo apt autoremove' to remove them.
    The following additional packages will be installed:
      binfmt-support clang-10 ieee-data lib32gcc-s1 lib32stdc++6 libbpf0 libbpfcc libc6-i386
      libclang-common-10-dev libclang-cpp10 libclang1-10 libdw1 libelf1 libelf1:i386 libllvm10
      libncurses-dev libobjc-9-dev libobjc4 libomp-10-dev libomp5-10 libpfm4 libtinfo-dev libz3-4
      libz3-dev linux-hwe-5.15-tools-5.15.0-79 linux-tools-common llvm-10 llvm-10-dev
      llvm-10-runtime llvm-10-tools llvm-runtime python3-bpfcc python3-netaddr python3-pygments
    Suggested packages:
      clang-10-doc ncurses-doc libomp-10-doc llvm-10-doc ipython3 python-netaddr-docs
      python-pygments-doc ttf-bitstream-vera
    The following NEW packages will be installed:
      binfmt-support bpfcc-tools clang clang-10 ieee-data lib32gcc-s1 lib32stdc++6 libbpf-dev
      libbpf0 libbpfcc libbpfcc-dev libc6-i386 libclang-common-10-dev libclang-cpp10 libclang1-10
      libelf-dev libllvm10 libncurses-dev libobjc-9-dev libobjc4 libomp-10-dev libomp5-10 libpfm4
      libtinfo-dev libz3-4 libz3-dev linux-hwe-5.15-tools-5.15.0-79 linux-tools-5.15.0-79-generic
      linux-tools-common llvm llvm-10 llvm-10-dev llvm-10-runtime llvm-10-tools llvm-runtime
      python3-bpfcc python3-netaddr python3-pygments
    The following packages will be upgraded:
      libdw1 libelf1 libelf1:i386
    3 upgraded, 38 newly installed, 0 to remove and 156 not upgraded.
    Need to get 107 MB/107 MB of archives.
    After this operation, 602 MB of additional disk space will be used.
    [...]
    Processing triggers for systemd (245.4-4ubuntu3.20) ...
    Processing triggers for man-db (2.9.1-1) ...
    Processing triggers for libc-bin (2.31-0ubuntu9.9) ...
    root@k8smaster:/home/mobb
    root@k8smaster:/home/mobb
    root@k8smaster:/home/mobb/eBPF_test
    root@k8smaster:/home/mobb/eBPF_test
    Traceback (most recent call last):
      File "hello.py", line 11, in <module>
        b = BPF(src_file="hello.c")
      File "/usr/lib/python3/dist-packages/bcc/__init__.py", line 317, in __init__
        src_file = BPF._find_file(src_file)
      File "/usr/lib/python3/dist-packages/bcc/__init__.py", line 246, in _find_file
        raise Exception("Could not find file %s" % filename)
    Exception: Could not find file b'hello.c'
    root@k8smaster:/home/mobb/eBPF_test
    CONFIG_BPF=y
    CONFIG_HAVE_EBPF_JIT=y
    CONFIG_ARCH_WANT_DEFAULT_BPF_JIT=y
    CONFIG_BPF_SYSCALL=y
    CONFIG_BPF_JIT=y
    CONFIG_BPF_JIT_ALWAYS_ON=y
    CONFIG_BPF_JIT_DEFAULT_ON=y
    CONFIG_BPF_UNPRIV_DEFAULT_OFF=y
    CONFIG_BPF_LSM=y
    CONFIG_CGROUP_BPF=y
    CONFIG_IPV6_SEG6_BPF=y
    CONFIG_NETFILTER_XT_MATCH_BPF=m
    CONFIG_BPFILTER=y
    CONFIG_BPFILTER_UMH=m
    CONFIG_NET_CLS_BPF=m
    CONFIG_NET_ACT_BPF=m
    CONFIG_BPF_STREAM_PARSER=y
    CONFIG_LWTUNNEL_BPF=y
    CONFIG_BPF_EVENTS=y
    CONFIG_BPF_KPROBE_OVERRIDE=y
    CONFIG_TEST_BPF=m
    root@k8smaster:/home/mobb/eBPF_test
    Reading package lists... Done
    Building dependency tree
    Reading state information... Done
    python3-bpfcc is already the newest version (0.12.0-2).
    python3-bpfcc set to manually installed.
    The following packages were automatically installed and are no longer required:
      libcbor0.6 libfido2-1
    Use 'apt autoremove' to remove them.
    0 upgraded, 0 newly installed, 0 to remove and 156 not upgraded.
    root@k8smaster:/home/mobb/eBPF_test
    Traceback (most recent call last):
      File "hello.py", line 11, in <module>
        b = BPF(src_file="hello.c")
      File "/usr/lib/python3/dist-packages/bcc/__init__.py", line 317, in __init__
        src_file = BPF._find_file(src_file)
      File "/usr/lib/python3/dist-packages/bcc/__init__.py", line 246, in _find_file
        raise Exception("Could not find file %s" % filename)
    Exception: Could not find file b'hello.c'
    root@k8smaster:/home/mobb/eBPF_test
    root@k8smaster:/home/mobb/eBPF_test
    Traceback (most recent call last):
      File "hello.py", line 11, in <module>
        b = BPF(src_file="hello.c")
      File "/usr/lib/python3/dist-packages/bcc/__init__.py", line 317, in __init__
        src_file = BPF._find_file(src_file)
      File "/usr/lib/python3/dist-packages/bcc/__init__.py", line 246, in _find_file
        raise Exception("Could not find file %s" % filename)
    Exception: Could not find file b'hello.c'
    root@k8smaster:/home/mobb/eBPF_test
    root@k8smaster:/home/mobb
    Cloning into 'libbpf'...
    remote: Enumerating objects: 11028, done.
    remote: Counting objects: 100% (2406/2406), done.
    remote: Compressing objects: 100% (539/539), done.
    remote: Total 11028 (delta 1858), reused 1918 (delta 1836), pack-reused 8622
    Receiving objects: 100% (11028/11028), 8.16 MiB | 4.65 MiB/s, done.
    Resolving deltas: 100% (7421/7421), done.
    root@k8smaster:/home/mobb
    公共的  音乐             dashboard-svc.yaml          pwndbg
    模板    桌面             deepflow_pv.yaml            recommended.yaml
    视频    components.yaml    eBPF_test                   sealos_4.3.2_linux_amd64.tar.gz
    图片    components.yaml.1  ingress-controller          sealos_4.3.2_linux_amd64.tar.gz.1
    文档    config.yaml        libbpf
    下载    dashboard-svc-account.yaml  metrics-server
    root@k8smaster:/home/mobb
    root@k8smaster:/home/mobb/libbpf
    assets                 ci    include               LICENSE.LGPL-2.1  src
    BPF-CHECKPOINT-COMMIT  docs  LICENSE               README.md         SYNC.md
    CHECKPOINT-COMMIT      fuzz  LICENSE.BSD-2-Clause  scripts
    root@k8smaster:/home/mobb/libbpf
    root@k8smaster:/home/mobb/libbpf/src
    bpf.c               btf.c            libbpf_errno.c      Makefile         str_error.h
    bpf_core_read.h     btf_dump.c       libbpf.h            netlink.c        strset.c
    bpf_endian.h        btf.h            libbpf_internal.h   nlattr.c         strset.h
    bpf_gen_internal.h  elf.c            libbpf_legacy.h     nlattr.h         usdt.bpf.h
    bpf.h               gen_loader.c     libbpf.map          relo_core.c      usdt.c
    bpf_helper_defs.h   hashmap.c        libbpf.pc.template  relo_core.h      zip.c
    bpf_helpers.h       hashmap.h        libbpf_probes.c     ringbuf.c        zip.h
    bpf_prog_linfo.c    libbpf.c         libbpf_version.h    skel_internal.h
    bpf_tracing.h       libbpf_common.h  linker.c            str_error.c
    root@k8smaster:/home/mobb/libbpf/src
      MKDIR    staticobjs
      CC       staticobjs/bpf.o
      CC       staticobjs/btf.o
      CC       staticobjs/libbpf.o
      CC       staticobjs/libbpf_errno.o
      CC       staticobjs/netlink.o
      CC       staticobjs/nlattr.o
      CC       staticobjs/str_error.o
      CC       staticobjs/libbpf_probes.o
      CC       staticobjs/bpf_prog_linfo.o
      CC       staticobjs/btf_dump.o
      CC       staticobjs/hashmap.o
      CC       staticobjs/ringbuf.o
      CC       staticobjs/strset.o
      CC       staticobjs/linker.o
      CC       staticobjs/gen_loader.o
      CC       staticobjs/relo_core.o
      CC       staticobjs/usdt.o
      CC       staticobjs/zip.o
      CC       staticobjs/elf.o
      AR       libbpf.a
      MKDIR    sharedobjs
      CC       sharedobjs/bpf.o
      CC       sharedobjs/btf.o
      CC       sharedobjs/libbpf.o
      CC       sharedobjs/libbpf_errno.o
      CC       sharedobjs/netlink.o
      CC       sharedobjs/nlattr.o
      CC       sharedobjs/str_error.o
      CC       sharedobjs/libbpf_probes.o
      CC       sharedobjs/bpf_prog_linfo.o
      CC       sharedobjs/btf_dump.o
      CC       sharedobjs/hashmap.o
      CC       sharedobjs/ringbuf.o
      CC       sharedobjs/strset.o
      CC       sharedobjs/linker.o
      CC       sharedobjs/gen_loader.o
      CC       sharedobjs/relo_core.o
      CC       sharedobjs/usdt.o
      CC       sharedobjs/zip.o
      CC       sharedobjs/elf.o
      CC       libbpf.so.1.3.0

Then compile the sample code from this article:

初窥门径:开发并运行你的第一个 eBPF 程序-火焰兔 (zadmei.com)

That should do it.

Setting up the real eBPF development environment

After a long stretch of trial and error, I found what had been going wrong. The cmake step after fetching the source was fine, but make always failed at around 38%. Looking at CMakeCache.txt revealed the problem: every build was picking up LLVM 10, and the error output looked like this:

    In file included from /usr/lib/llvm-10/include/clang/AST/RecursiveASTVisitor.h:16,
                     from /home/mobb/bcc/src/cc/frontends/clang/b_frontend_action.h:23,
                     from /home/mobb/bcc/src/cc/frontends/clang/b_frontend_action.cc:31:
    /usr/lib/llvm-10/include/clang/AST/Attr.h: In static member function ‘static clang::ParamIdx clang::ParamIdx::deserialize(clang::ParamIdx::SerialType)’:
    /usr/lib/llvm-10/include/clang/AST/Attr.h:261:17: warning: dereferencing type-punned pointer will break strict-aliasing rules [-Wstrict-aliasing]
      261 |     ParamIdx P(*reinterpret_cast<ParamIdx *>(&S));
          |                 ^~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~
    /usr/lib/llvm-10/include/clang/AST/Attr.h:261:17: warning: dereferencing type-punned pointer will break strict-aliasing rules [-Wstrict-aliasing]
    /usr/bin/ld: /usr/lib/llvm-10/lib/libclangCodeGen.a(BackendUtil.cpp.o): in function `(anonymous namespace)::EmitAssemblyHelper::EmitAssemblyWithNewPassManager(clang::BackendAction, std::unique_ptr<llvm::raw_pwrite_stream, std::default_delete<llvm::raw_pwrite_stream> >)':
    (.text._ZN12_GLOBAL__N_118EmitAssemblyHelper30EmitAssemblyWithNewPassManagerEN5clang13BackendActionESt10unique_ptrIN4llvm17raw_pwrite_streamESt14default_deleteIS5_EE+0x1f15): undefined reference to `getPollyPluginInfo()'
    collect2: error: ld returned 1 exit status
    make[2]: *** [examples/cpp/CMakeFiles/CGroupTest.dir/build.make:158:examples/cpp/CGroupTest] Error 1
    make[1]: *** [CMakeFiles/Makefile2:1146:examples/cpp/CMakeFiles/CGroupTest.dir/all] Error 2
    make: *** [Makefile:141:all] Error 2

It always comes down to the same thing: the linker cannot find getPollyPluginInfo() in LLVM. I first assumed the LLVM 12 I had installed was at fault, but after reading up on it, LLVM 12 does provide that function. The real cause was that LLVM 10 had never been uninstalled, and LLVM 12 also still had to be added to PATH.
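To confirm which LLVM a build directory is bound to without reading CMakeCache.txt by eye, the cached entry can be pulled out directly. This is a sketch that assumes the cache records LLVM's location in an `LLVM_DIR` entry, which is where CMake's `find_package` normally stores it; the function name `cached_llvm_dir` is my own.

```bash
# Print the value of the LLVM_DIR entry in a CMakeCache.txt, without the
# "LLVM_DIR:<TYPE>=" prefix. Prints nothing if the entry is absent.
cached_llvm_dir() {
    sed -n 's/^LLVM_DIR:[A-Z]*=//p' "$1"
}
```

For example, `cached_llvm_dir /home/mobb/bcc/build/CMakeCache.txt`. If it still points into `/usr/lib/llvm-10` after LLVM 12 is on PATH, the old cache is probably being reused, and removing CMakeCache.txt (or the whole build directory) before running cmake again should make it pick up the new version.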

Now for the actual installation.

Reference:

ubuntu20.04安装bcc_JD怕秃头的博客-CSDN博客

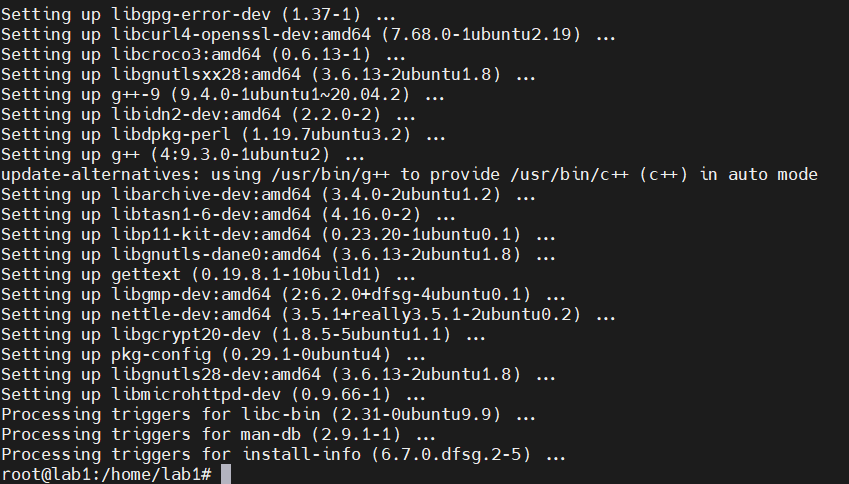

1 2 sudo apt install -y g++ libmicrohttpd-dev libsqlite3-dev libarchive-dev libcurl4-openssl-dev gettext libzstd-dev pkg-config sudo apt install make

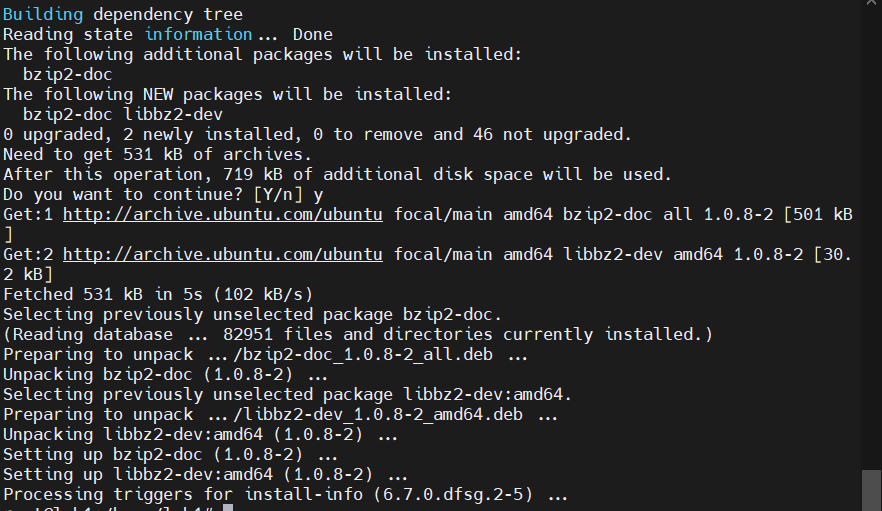

1 2 sudo apt install zlib1g-dev sudo apt-get install libbz2-dev

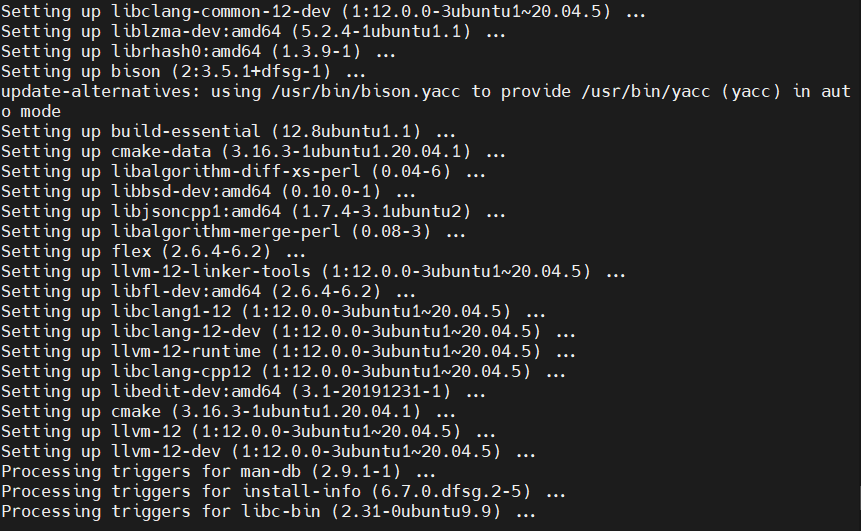

1 2 sudo apt install -y bison build-essential cmake flex git libedit-dev liblzma-dev \ libllvm12 llvm-12-dev libclang-12-dev zlib1g-dev libelf-dev libfl-dev python3-distutils

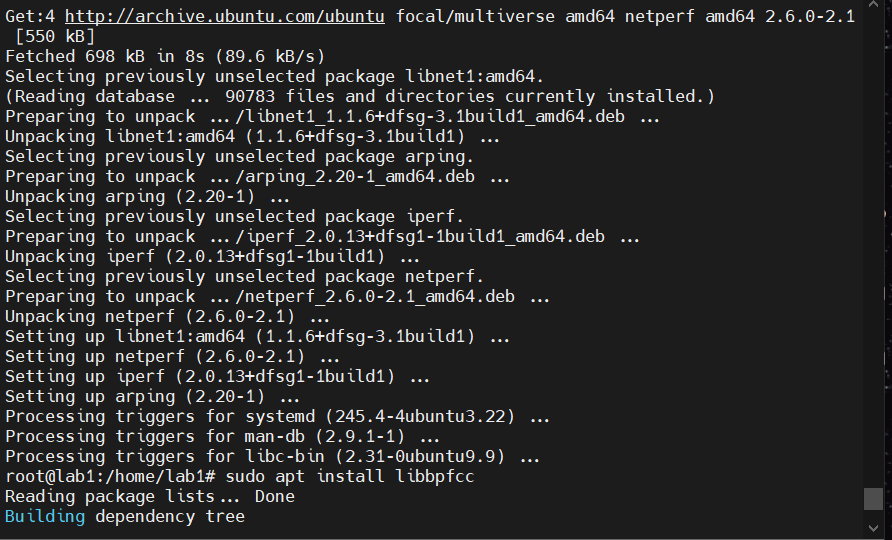

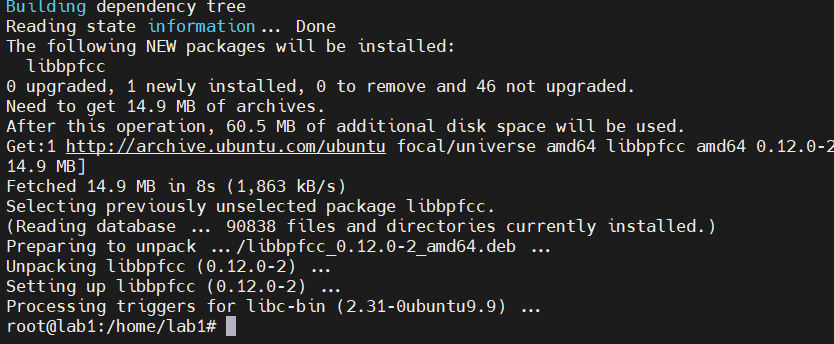

1 2 3 sudo apt install arping netperf iperf sudo apt install libbpfcc sudo apt install python3-bpfcc

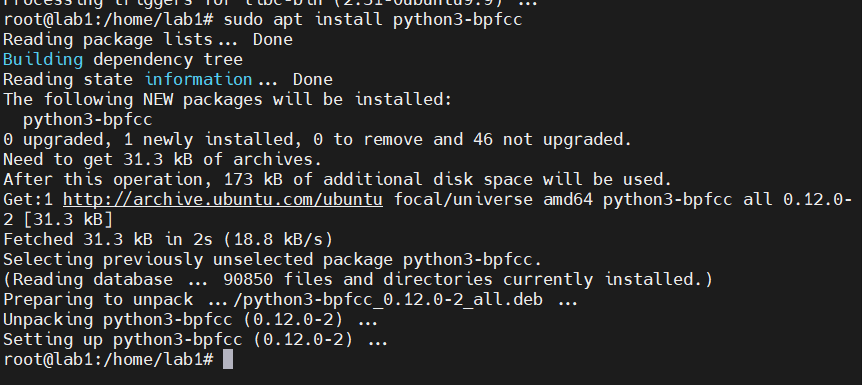

安装elfutils

1 2 3 4 5 wget https://sourceware.org/elfutils/ftp/0.188/elfutils-0.188.tar.bz2 tar xvf elfutils-0.188.tar.bz2 mkdir elfutils-0.188/build cd elfutils-0.188/build/ ../configure

记得要让这里的should all be yes全都是yes

这里出了点错误,因为我在检验我是否安装成功的时候看到了报错

1 2 root@lab1:/home/lab1/elfutils-0.188/build /usr/local /bin/eu-readelf: /lib/x86_64-linux-gnu/libdw.so.1: version `ELFUTILS_0.177' not found (required by /usr/local/bin/eu-readelf)

The eu-readelf binary and the libdw library it loads do not match: eu-readelf needs a libdw that provides the ELFUTILS_0.177 symbol version, but the libdw it found at /lib/x86_64-linux-gnu/libdw.so.1 does not.

This is probably because several versions of libdw exist on the system at the same time.

So look for the libdw libraries on the system:

    root@lab1:/home/lab1/elfutils-0.188/build
    /home/lab1/elfutils-0.188/build/libdw/libdw.so.1
    /home/lab1/elfutils-0.188/build/libdw/libdw.so
    /var/lib/docker/overlay2/6310b69a4afce7b65b94f0a38b6aab25057152455ddd5f1134537a7bd75474b9/diff/usr/lib64/libdw.so.1
    /usr/local/lib/libdw.so.1
    /usr/local/lib/libdw.so
    /usr/lib/x86_64-linux-gnu/libdw.so.1
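Finding the files only shows that several copies exist. To see which copy the dynamic loader actually picks for eu-readelf, the output of `ldd` can be filtered. A small sketch, assuming the usual `name => path (address)` layout of `ldd` output; the function name `resolved_library` is hypothetical.

```bash
# Read `ldd` output on stdin and print the path the named library resolves to,
# or "not found" if the loader could not resolve it.
resolved_library() {
    awk -v lib="$1" '
        $1 == lib && $2 == "=>" {
            if ($3 == "not") print "not found"; else print $3
        }'
}
```

Usage: `ldd /usr/local/bin/eu-readelf | resolved_library libdw.so.1`. In the failing case above this would be expected to print the `/lib/x86_64-linux-gnu` copy rather than the one under `/usr/local/lib`.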

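Remove the old versions of libdw at the following paths:

/usr/lib/x86_64-linux-gnu/libdw.so.1

/var/lib/docker/overlay2/6310b69a4afce7b65b94f0a38b6aab25057152455ddd5f1134537a7bd75474b9/diff/usr/lib64/libdw.so.1

Then reinstall, starting again from the extraction step. After reinstalling I still ran into a problem:

    root@lab1:/home/lab1/elfutils-0.188/build
    /usr/local/bin/eu-readelf: error while loading shared libraries: libdw.so.1: cannot open shared object file: No such file or directory

This error means that eu-readelf cannot find the shared library libdw.so.1 at run time, usually because the shared library search path is not configured correctly.

Add the shared library search path:

Open /etc/ld.so.conf to edit the shared library search path. Edit the file as root with:

    sudo vim /etc/ld.so.conf

Add a line pointing to the elfutils installation directory, usually /usr/local/lib, then save and quit the editor.

Update the shared library cache:

Run the following command to update the shared library cache: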

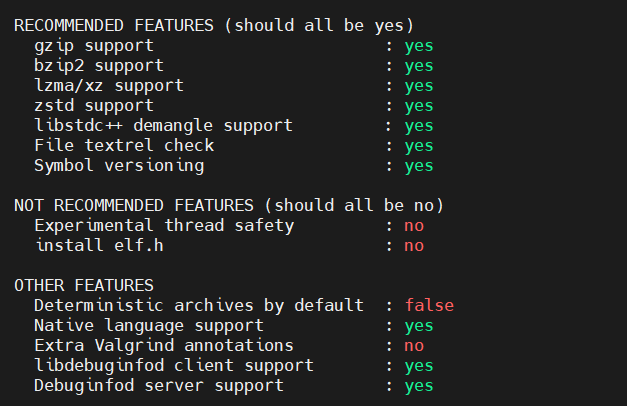
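The edit to /etc/ld.so.conf can also be done without opening an editor. A minimal sketch, assuming /usr/local/lib is where elfutils was installed; the function name `add_library_path` is my own, and it only appends the line when it is not already there, so it is safe to run more than once.

```bash
# Append a directory to a loader configuration file unless it is already listed.
add_library_path() {
    dir=$1
    conf=$2
    # -x: match the whole line, -F: treat the pattern as a fixed string
    grep -qxF "$dir" "$conf" 2>/dev/null || echo "$dir" >> "$conf"
}
```

Run as root, `add_library_path /usr/local/lib /etc/ld.so.conf` followed by `ldconfig` rebuilds the cache, and `ldconfig -p | grep libdw` then shows which libdw entries the loader knows about.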

1 /usr/local /bin/eu-readelf --version

只要这样就是成功了

接下来安装bcc

1 2 git clone https://github.com/iovisor/bcc.git mkdir bcc/build; cd bcc/build

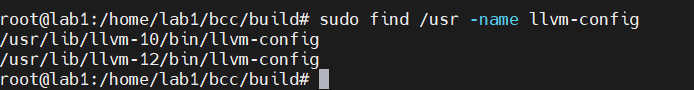

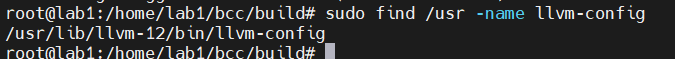

这里要先看看有没有安装的llvm

这里没有输出不代表就没有安装

1 sudo find /usr -name llvm-config

这里要删掉llvm-10

1 sudo apt-get remove llvm-10

删除之后还需要吧llvm12添加到path中

添加这个到文件最后一行

1 export PATH=/usr/lib/llvm-12/bin:$PATH

重新加载 shell 配置文件以使更改生效

这样就行了
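Appending the export line every time the file is edited tends to leave duplicate PATH entries behind. Here is a small sketch of a guard against that; the helper name `path_with_front` is hypothetical, and it leaves PATH untouched when the directory is already one of its entries (even if it is not the first one).

```bash
# Print a PATH value with the given directory in front, or the value
# unchanged if the directory is already one of its entries.
path_with_front() {
    dir=$1
    current=$2
    case ":$current:" in
        *":$dir:"*) echo "$current" ;;
        *)          echo "$dir:$current" ;;
    esac
}
```

In a shell configuration file this would be used as `export PATH="$(path_with_front /usr/lib/llvm-12/bin "$PATH")"`. Afterwards `llvm-config --version` should report a 12.x release if LLVM 12 is the one being found.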

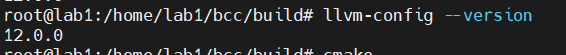

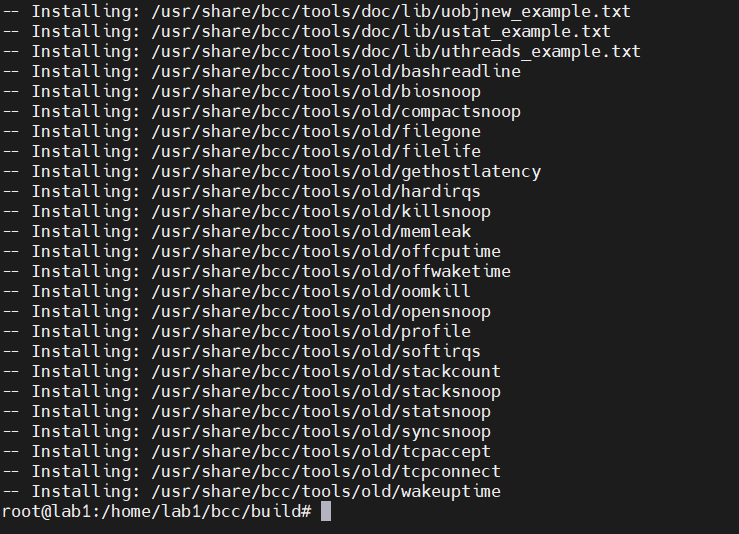

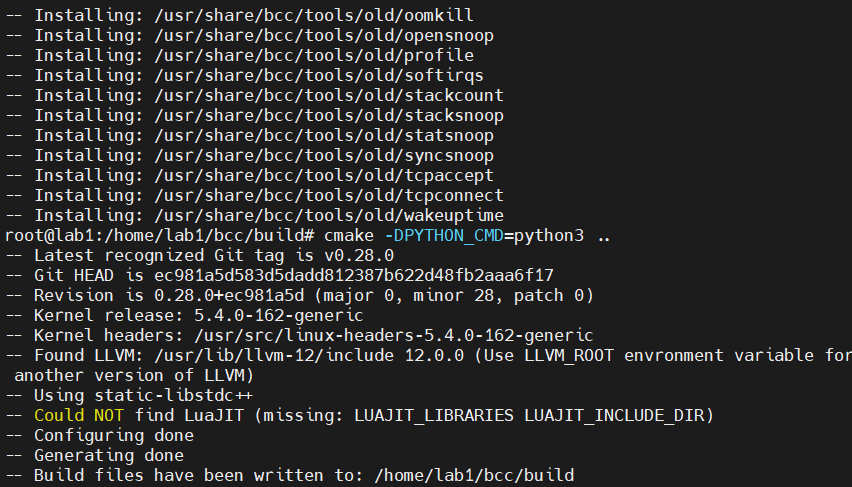

接下来在bcc/build目录下进行

1 cmake -DPYTHON_CMD=python3 ..

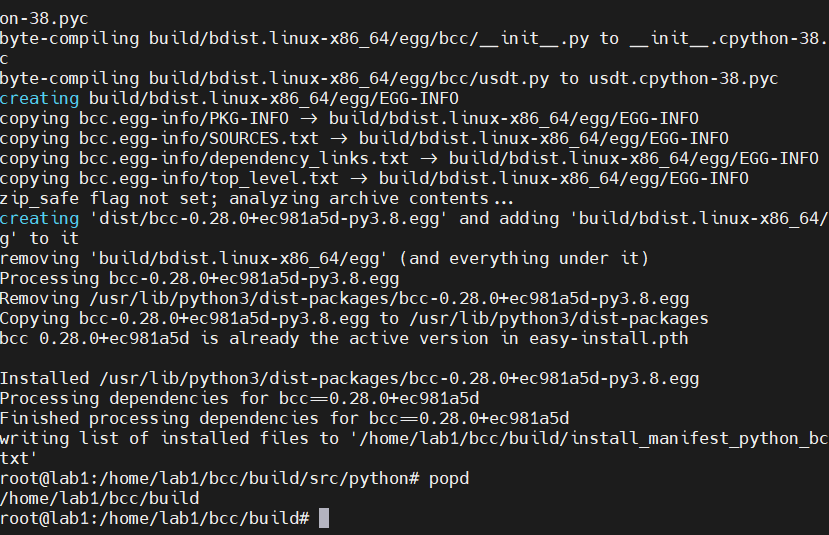

1 2 3 4 pushd src/python/make sudo make install popd

进入/usr/share/bcc/tools中进行测试

然后就一直报这样的错,明明在安装的时候是没有任何错误的。

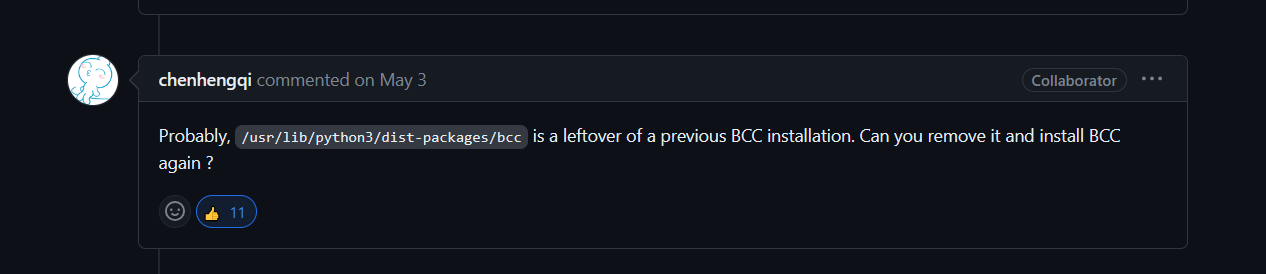

搜寻了好久,最后直接进官网的issues上找到了相同的问题

AttributeError: /lib/x86_64-linux-gnu/libbcc.so.0: undefined symbol: bpf_module_create_b · Issue #4583 · iovisor/bcc (github.com)

感谢这位大哥的建议,很可能之前有冗杂的bcc安装。

所以需要删掉/usr/lib/python3/dist-packages/bcc这个目录

1 sudo apt-get remove --purge bpfcc-tools python3-bpfcc

接着删除

1 sudo rm -rf /usr/lib/python3/dist-packages/bcc

然后重新安装一次,就可以了
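Before deleting anything, it can help to see where copies of the bcc Python package actually live. This is only a sketch: the function name `find_bcc_installs` is hypothetical, and which directories to pass in depends on the Python setup of the machine.

```bash
# Print every "bcc" package directory found directly under the given
# site-packages / dist-packages directories.
find_bcc_installs() {
    for dir in "$@"; do
        if [ -f "$dir/bcc/__init__.py" ]; then
            echo "$dir/bcc"
        fi
    done
}
```

For example, `find_bcc_installs /usr/lib/python3/dist-packages /usr/local/lib/python3/dist-packages`. Independently of this helper, `python3 -c 'import bcc; print(bcc.__file__)'` prints the copy that Python really imports.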

Using bpftool produced an error:

    root@k8smaster:/home/mobb/eBPF_test
    WARNING: bpftool not found for kernel 5.15.0-84

      You may need to install the following packages for this specific kernel:
        linux-tools-5.15.0-84-generic
        linux-cloud-tools-5.15.0-84-generic

      You may also want to install one of the following packages to keep up to date:
        linux-tools-generic
        linux-cloud-tools-generic

Install the packages it suggests:

    sudo apt update
    sudo apt install linux-tools-5.15.0-84-generic linux-cloud-tools-5.15.0-84-generic
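The version in these package names has to match the running kernel, so it will differ from machine to machine. A small sketch that derives the names from a kernel release string instead of hard-coding them; the function name `tools_packages_for` is my own.

```bash
# Build the kernel-specific tools package names for a given kernel release
# string (the kind of string that `uname -r` prints).
tools_packages_for() {
    release=$1
    echo "linux-tools-$release linux-cloud-tools-$release"
}
```

With this, the install becomes `sudo apt install $(tools_packages_for "$(uname -r)")`, which keeps working after a kernel upgrade.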

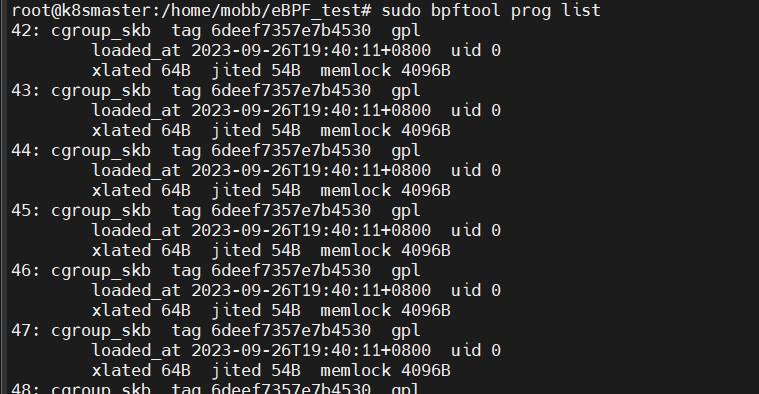

Once they are installed, bpftool works.

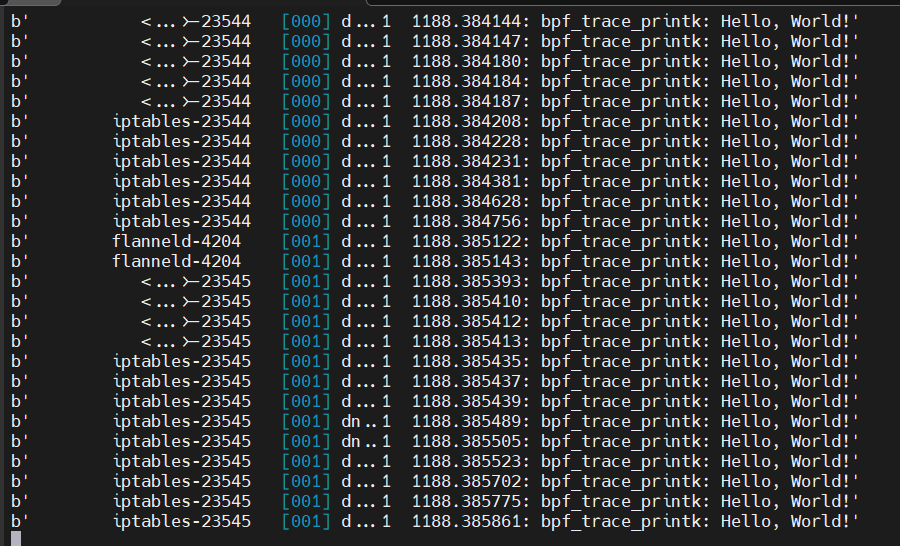

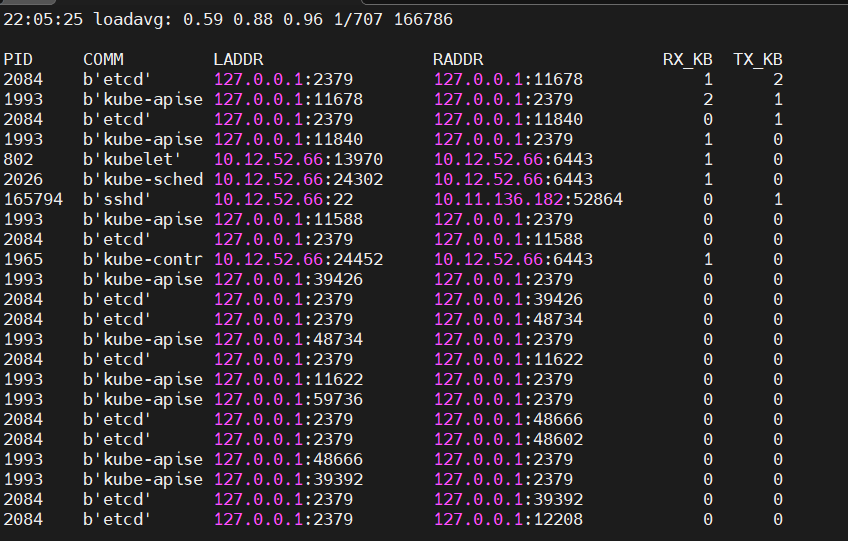

查看系统中正在运行的ebpf程序

输出中,45是这个 eBPF 程序的编号,kprobe 是程序的类型,而 memlock是程序的名字。

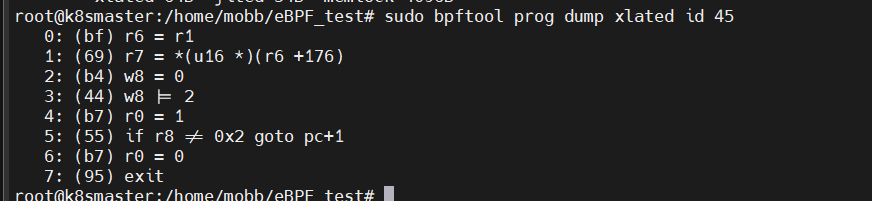

有了 eBPF 程序编号之后,执行下面的命令就可以导出这个 eBPF 程序的指令(注意把 45替换成你查询到的编号):

1 sudo bpftool prog dump xlated id 45
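Looking up the ID by hand gets tedious when a program is reloaded often, because the ID changes each time. The sketch below filters the text output of `bpftool prog show` by program name. It assumes the usual first-line layout `ID: TYPE  name NAME  tag ...`; the function name `prog_ids_by_name` and the program name `hello_world` used in the example are hypothetical.

```bash
# Read `bpftool prog show` text output on stdin and print the ID of every
# program whose name equals the argument.
prog_ids_by_name() {
    awk -v name="$1" '
        $3 == "name" && $4 == name {
            sub(/:$/, "", $1)   # strip the trailing colon from "45:"
            print $1
        }'
}
```

Usage: `sudo bpftool prog show | prog_ids_by_name hello_world`, whose result can then be passed to `bpftool prog dump xlated id`.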

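    #include <linux/bpf.h>

    int bpf(int cmd, union bpf_attr *attr, unsigned int size);

The bpf system call takes three arguments:

The first, cmd, is the operation to perform; for example, BPF_PROG_LOAD, which we saw earlier, loads an eBPF program.

The second, attr, is a pointer to the eBPF attributes, of type bpf_attr; different operations require different attributes.

The third, size, is the size of the attributes.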

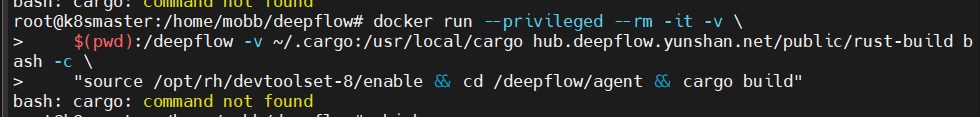

Agent debugging

An error came up while using the official build environment:

    git clone --recursive https://github.com/deepflowio/deepflow.git
    cd deepflow
    docker run --privileged --rm -it -v \
        $(pwd):/deepflow -v ~/.cargo:/usr/local/cargo hub.deepflow.yunshan.net/public/rust-build bash -c \
        "source /opt/rh/devtoolset-8/enable && cd /deepflow/agent && cargo build"

It had to do with cargo:

    [root@5e338fc61771 cargo]

Judging from the output, the cargo executable lives in /usr/local/rustup/toolchains/stable-x86_64-unknown-linux-gnu/bin/.

There are a few ways to use cargo from there:

Use the full path directly:

    /usr/local/rustup/toolchains/stable-x86_64-unknown-linux-gnu/bin/cargo --version

    docker run --privileged --rm -it -v $(pwd):/deepflow -v /usr/local/cargo hub.deepflow.yunshan.net/public/rust-build bash

Add it to the PATH environment variable:

    PATH=$PATH:/usr/local/rustup/toolchains/stable-x86_64-unknown-linux-gnu/bin/
    cargo --version

Once it is on PATH, the cargo command can be used directly in that session. If you do this often, consider putting the PATH update into the Docker image's startup script or bash configuration file so that it is set automatically every time the container starts.
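The toolchain directory name contains the target triple, so hard-coding it is brittle. A sketch that searches a rustup toolchains directory for cargo instead; the function name `cargo_bin_dirs` is hypothetical, and the toolchains location used in the usage line is the one seen in this container.

```bash
# Print the bin directory of every toolchain under the given rustup
# "toolchains" directory that contains an executable cargo.
cargo_bin_dirs() {
    for candidate in "$1"/*/bin/cargo; do
        # If the glob matches nothing it stays literal and fails the -x test.
        if [ -x "$candidate" ]; then
            dirname "$candidate"
        fi
    done
}
```

Inside the container this could be used as `PATH="$PATH:$(cargo_bin_dirs /usr/local/rustup/toolchains | head -n 1)"`.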

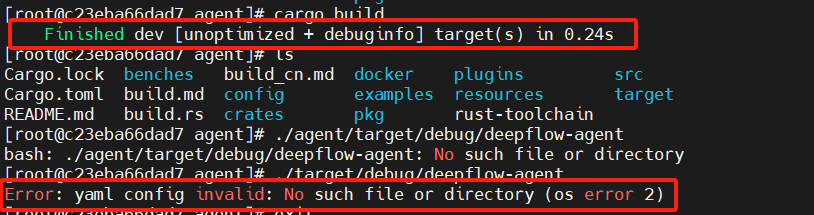

源码编译 这里虽然cargo build成功了,但是却运行不了

更新!!!此时其实已经构建好了编译环境,但是不能直接运行deepflow-agent,这需要加上一些命令选项,具体可以直接运行对应的程序,按照他那个命令来构建环境是可以的

进入容器内部

1 2 docker run --privileged --rm -it -v \ $(pwd ):/deepflow -v ~/.cargo:/usr/local /cargo hub.deepflow.yunshan.net/public/rust-build bash

命令执行

1 2 3 source /opt/rh/devtoolset-8/enable cd /deepflow/agentcargo build

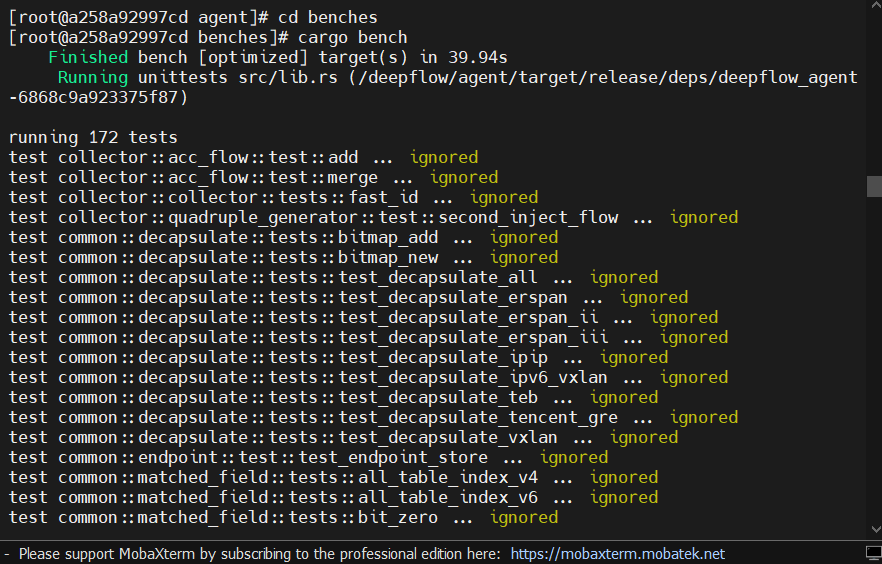

分析/agent/benches文件夹 总的来说,这个文件夹是用来进行测试代码性能的,使用的是Criterion库来进行测试。

构建好编译环境之后,可以在benches文件夹下执行

来测试代码

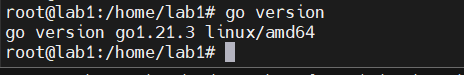

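Installing Go and TinyGo

Download the Go binary release:

All releases - The Go Programming Language (google.cn)

Then extract it:

    tar -C /usr/local -xzf go1.21.3.linux-amd64.tar.gz

The -C option extracts the archive into /usr/local. Check the contents of /usr/local/go afterwards.

Add the Go binaries to the $PATH environment variable:

Open .bashrc or .bash_profile.

Paste in the following line:

    export PATH=$PATH:/usr/local/go/bin

Save the change and close the file.

Reload .bashrc or .bash_profile.

Use the go version command to check the version.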

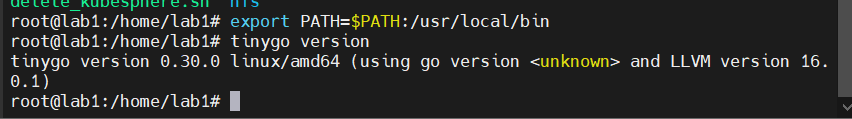

安装Tinygo

1 2 wget https://github.com/tinygo-org/tinygo/releases/download/v0.30.0/tinygo_0.30.0_amd64.deb sudo dpkg -i tinygo_0.30.0_amd64.deb

1 export PATH=$PATH :/usr/local /bin

查看是否安装成功

上传wasm插件测试

这次测试的是官网的HTTPv1协议增强,点击链接进入,然后把整个项目压缩包下下来,放入自己选定的文件夹里面。用unzip命令解压之后进入目录。

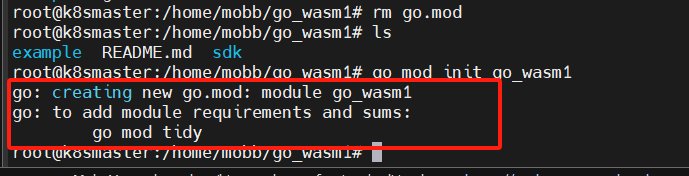

这时要先移除这里的go.mod

然后重新初始化

这时它会提示你要添加一些requirement

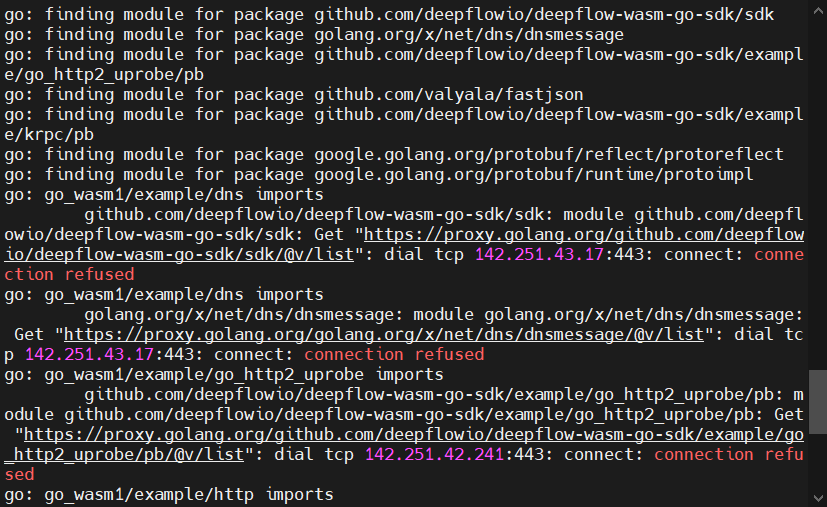

按着他给的命令执行

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 root@k8smaster:/home/mobb/go_wasm1 go: creating new go.mod: module go_wasm1 go: to add module requirements and sums: go mod tidy root@k8smaster:/home/mobb/go_wasm1 go: finding module for package github.com/deepflowio/deepflow-wasm-go-sdk/sdk go: finding module for package golang.org/x/net/dns/dnsmessage go: finding module for package github.com/deepflowio/deepflow-wasm-go-sdk/example/go_http2_uprobe/pb go: finding module for package github.com/valyala/fastjson go: finding module for package github.com/deepflowio/deepflow-wasm-go-sdk/example/krpc/pb go: finding module for package google.golang.org/protobuf/reflect/protoreflect go: finding module for package google.golang.org/protobuf/runtime/protoimpl go: go_wasm1/example/dns imports github.com/deepflowio/deepflow-wasm-go-sdk/sdk: module github.com/deepflowio/deepflow-wasm-go-sdk/sdk: Get "https://proxy.golang.org/github.com/deepflowio/deepflow-wasm-go-sdk/sdk/@v/list" : dial tcp 142.251.43.17:443: connect: connection refused go: go_wasm1/example/dns imports golang.org/x/net/dns/dnsmessage: module golang.org/x/net/dns/dnsmessage: Get "https://proxy.golang.org/golang.org/x/net/dns/dnsmessage/@v/list" : dial tcp 142.251.43.17:443: connect: connection refused go: go_wasm1/example/go_http2_uprobe imports github.com/deepflowio/deepflow-wasm-go-sdk/example/go_http2_uprobe/pb: module github.com/deepflowio/deepflow-wasm-go-sdk/example/go_http2_uprobe/pb: Get "https://proxy.golang.org/github.com/deepflowio/deepflow-wasm-go-sdk/example/go_http2_uprobe/pb/@v/list" : dial tcp 142.251.42.241:443: connect: connection refused

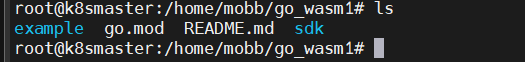

查看一下go.mod是否有生成

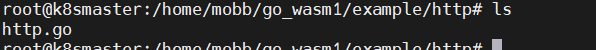

cd到example目录下的http目录

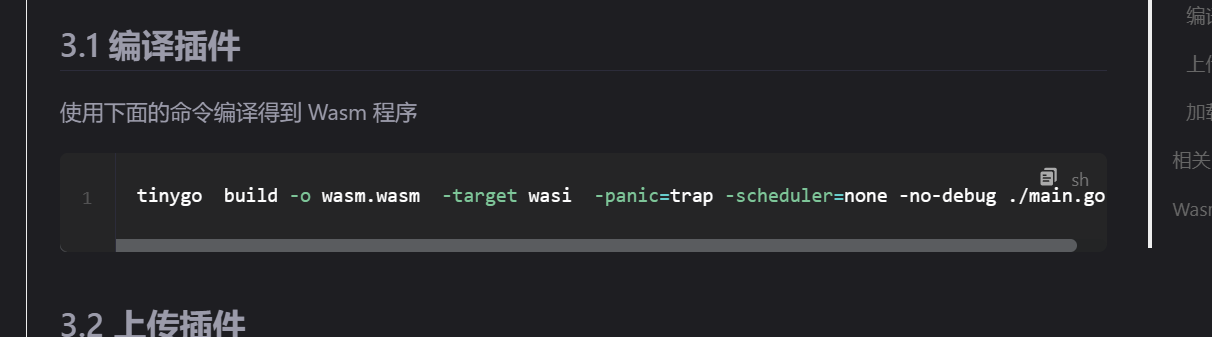

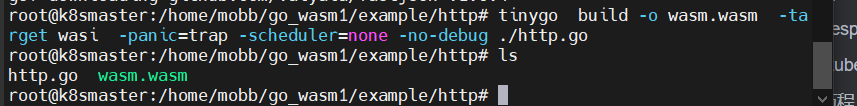

运行一下它官网给的编译命令

修改后如下:

1 tinygo build -o wasm.wasm -target wasi -panic=trap -scheduler=none -no-debug ./http.go

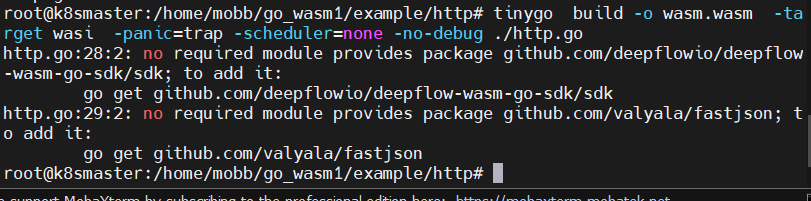

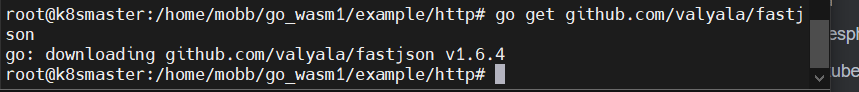

然后他又会告诉你缺少条件依赖,还是按他给的命令去执行

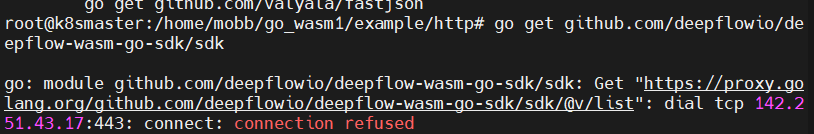

1 go get github.com/deepflowio/deepflow-wasm-go-sdk/sdk

遇到了网络问题,这里开一下梯子吧

1 2 root@k8smaster:/home/mobb/go_wasm1/example/http root@k8smaster:/home/mobb/go_wasm1/example/http
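The refused connections are to proxy.golang.org, Go's default module proxy. Besides a general-purpose proxy, another option is to point the Go toolchain at a module mirror through the `GOPROXY` environment variable. This is a sketch, not what I actually ran: the wrapper name `with_goproxy` is hypothetical, and the mirror URL is an assumption that should be swapped for one reachable from your network.

```bash
# Run a command with GOPROXY pointing at a module mirror, falling back to
# fetching directly from the source repository.
# Assumption: https://goproxy.cn is reachable; replace it if it is not.
with_goproxy() {
    env GOPROXY="https://goproxy.cn,direct" "$@"
}
```

Usage: `with_goproxy go mod tidy` or `with_goproxy go get github.com/deepflowio/deepflow-wasm-go-sdk/sdk`. The setting only applies to the wrapped command, so nothing in the global Go configuration changes.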

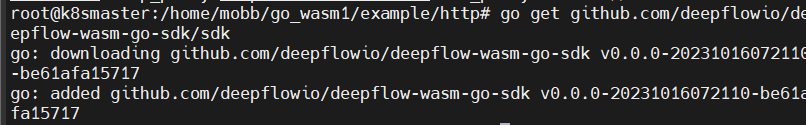

Run it again; this time it should succeed.

There is one more dependency:

    go get github.com/valyala/fastjson

Run the build command once more:

    tinygo build -o wasm.wasm -target wasi -panic=trap -scheduler=none -no-debug ./http.go
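A quick sanity check on the build result is to look at the first four bytes of the file: every WebAssembly binary starts with the magic bytes `\0asm`. A minimal sketch; the function name `is_wasm_module` is my own.

```bash
# Succeed if the file starts with the WebAssembly magic bytes 00 61 73 6d ("\0asm").
is_wasm_module() {
    # od prints the bytes as hex; tr removes the spaces and the newline.
    magic=$(head -c 4 "$1" | od -An -tx1 | tr -d ' \n')
    [ "$magic" = "0061736d" ]
}
```

Usage: `is_wasm_module wasm.wasm && echo "looks like a wasm module"`. This only checks the header, so it does not prove that the plugin is valid for DeepFlow.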

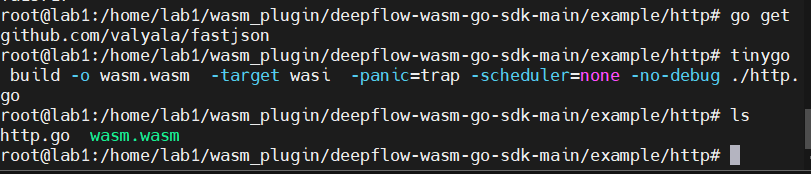

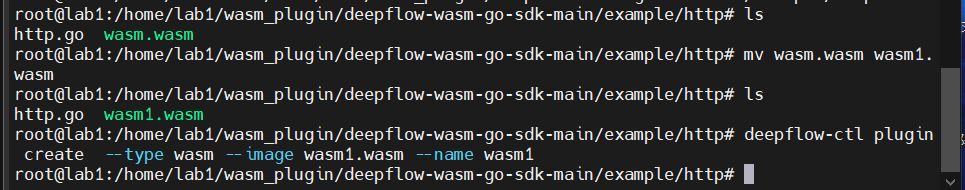

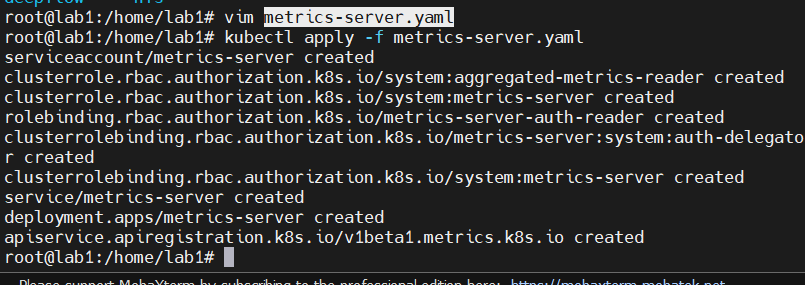

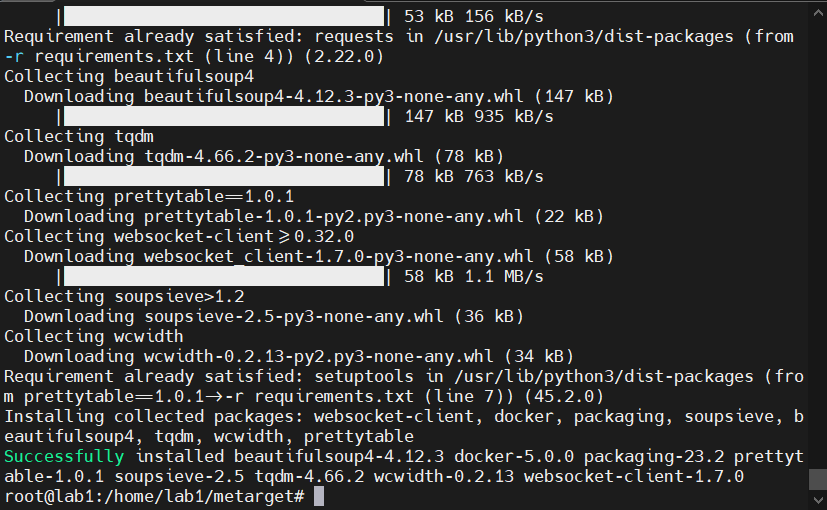

Compiling on lab1

    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example/http
    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example/http
    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example/http
    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example
    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main
    go: creating new go.mod: module deepflow-wasm-go-sdk-main
    go: to add module requirements and sums:
        go mod tidy
    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main
    go: finding module for package github.com/deepflowio/deepflow-wasm-go-sdk/sdk
    go: finding module for package github.com/deepflowio/deepflow-wasm-go-sdk/example/go_http2_uprobe/pb
    go: finding module for package golang.org/x/net/dns/dnsmessage
    go: finding module for package github.com/valyala/fastjson
    go: downloading golang.org/x/net v0.17.0
    go: downloading github.com/deepflowio/deepflow-wasm-go-sdk v0.0.0-20231016072110-be61afa15717
    go: downloading github.com/valyala/fastjson v1.6.4
    go: finding module for package github.com/deepflowio/deepflow-wasm-go-sdk/example/krpc/pb
    go: finding module for package google.golang.org/protobuf/reflect/protoreflect
    go: finding module for package google.golang.org/protobuf/runtime/protoimpl
    go: downloading google.golang.org/protobuf v1.31.0
    go: found github.com/deepflowio/deepflow-wasm-go-sdk/sdk in github.com/deepflowio/deepflow-wasm-go-sdk v0.0.0-20231016072110-be61afa15717
    go: found golang.org/x/net/dns/dnsmessage in golang.org/x/net v0.17.0
    go: found github.com/valyala/fastjson in github.com/valyala/fastjson v1.6.4
    go: found github.com/deepflowio/deepflow-wasm-go-sdk/example/krpc/pb in github.com/deepflowio/deepflow-wasm-go-sdk v0.0.0-20231016072110-be61afa15717
    go: found google.golang.org/protobuf/reflect/protoreflect in google.golang.org/protobuf v1.31.0
    go: found google.golang.org/protobuf/runtime/protoimpl in google.golang.org/protobuf v1.31.0
    go: downloading github.com/google/go-cmp v0.5.5
    go: downloading golang.org/x/xerrors v0.0.0-20191204190536-9bdfabe68543
    go: finding module for package github.com/deepflowio/deepflow-wasm-go-sdk/example/go_http2_uprobe/pb
    go: deepflow-wasm-go-sdk-main/example/go_http2_uprobe imports
        github.com/deepflowio/deepflow-wasm-go-sdk/example/go_http2_uprobe/pb: module github.com/deepflowio/deepflow-wasm-go-sdk@latest found (v0.0.0-20231016072110-be61afa15717), but does not contain package github.com/deepflowio/deepflow-wasm-go-sdk/example/go_http2_uprobe/pb

    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example/http
    http.go:28:2: no required module provides package github.com/deepflowio/deepflow-wasm-go-sdk/sdk; to add it:
        go get github.com/deepflowio/deepflow-wasm-go-sdk/sdk
    http.go:29:2: no required module provides package github.com/valyala/fastjson; to add it:
        go get github.com/valyala/fastjson
    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example/http
    go: added github.com/deepflowio/deepflow-wasm-go-sdk v0.0.0-20231016072110-be61afa15717
    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example/http

    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example/http
    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example/http
    http.go  wasm.wasm
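The commands typed at the prompts above did not survive, so the exact build invocation is unknown. As a rough sketch only: the DeepFlow Wasm SDK examples are normally built with TinyGo for the WASI target, and the flags below follow the SDK README as I remember it. The helper name `wasm_build_cmd` and its defaults are made up for illustration.

```shell
# Hypothetical helper: print the TinyGo invocation for one plugin source file.
# The flag set is an assumption based on the SDK README, not taken from this log.
wasm_build_cmd() {
  local src="${1:-http.go}" out="${2:-wasm.wasm}"
  printf 'tinygo build -o %s -target wasi -panic=trap -scheduler=none -no-debug %s\n' "$out" "$src"
}

# Show the command, then run it only if tinygo and the source file are present.
wasm_build_cmd http.go wasm.wasm
if command -v tinygo >/dev/null 2>&1 && [ -f http.go ]; then
  sh -c "$(wasm_build_cmd http.go wasm.wasm)"
fi
```

Run from `example/http`, this would be expected to leave a `wasm.wasm` next to `http.go`, which matches the directory listing at the end of the session.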

Exploring plugin upload

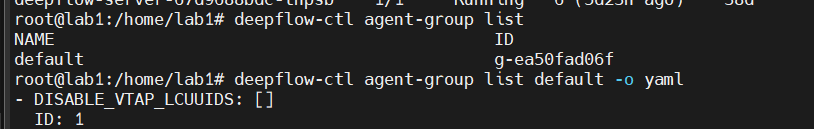

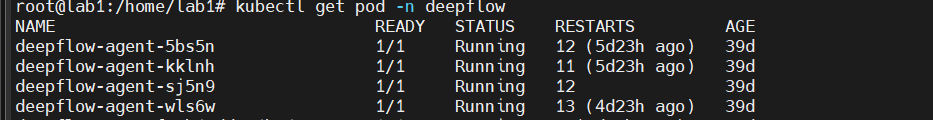

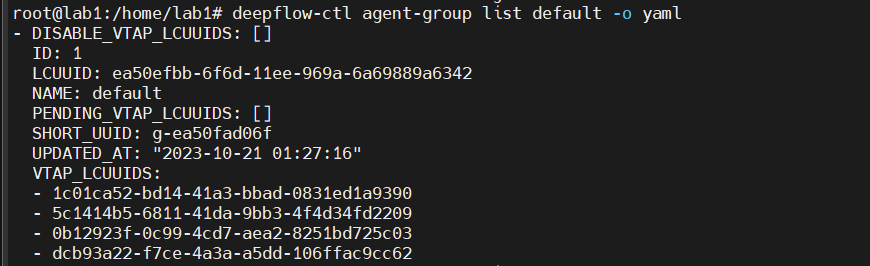

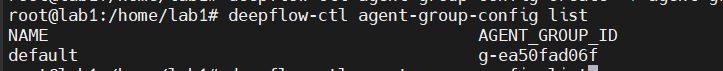

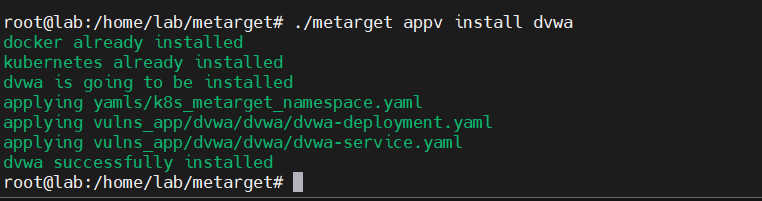

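Here you can see that lab1 and lab2 share the same agent-group ID, so I believe all four agent pods are managed by the same agent group.

The output includes a field called VTAP_LCUUIDS. The explanation I found for it is as follows:

VTAP_LCUUIDS: a list of the unique identifiers (LCUUIDs) of several virtual switch agents (VTAPs). Each VTAP has its own LCUUID that identifies it. In this particular agent group, "default", there are several VTAPs, and their LCUUIDs are listed here.

Virtual switch agents (VTAPs) are typically used for virtualized networking and security operations. In this case the agent group "default" is probably associated with a set of VTAPs that play an important role in network configuration and operation, and the field lists their identifiers so they can be configured and managed. I am not sure whether they correspond to those four pods. (In DeepFlow itself, vtap appears to be the older name for deepflow-agent, which would suggest that they do.)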

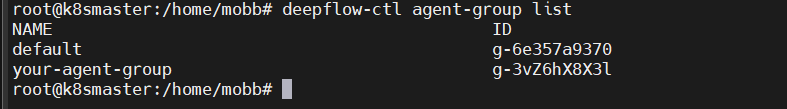

看了一下官网,应该是需要自己先创建一个配置文件,然后再通过ID关联

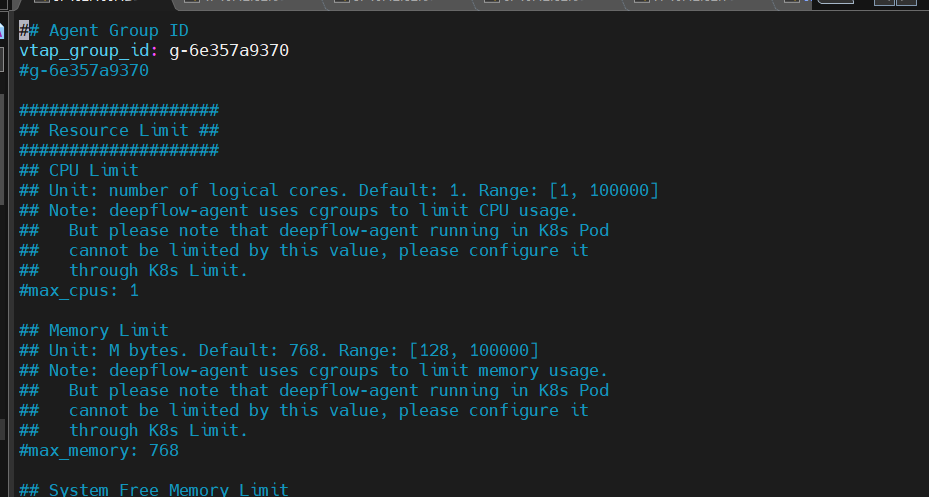

1 deepflow-ctl agent-group-config example

这个命令可以查看配置文件的默认配置有哪些

1 deepflow-ctl agent-group-config example > xxxx.yaml

直接给他导到一个yaml文件方便更改

这里我直接用default试一下吧,我现在还存在疑问,既然一个组管理了所有的agent,那么应该不需要其他的组才对。或许其他的组是需要用到多个server的情况?

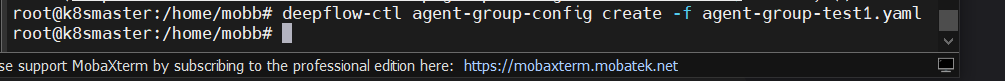

Change the ID here, then create the configuration directly:

    deepflow-ctl agent-group-config create -f agent-group-test1.yaml
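The export, edit and create steps can be strung together roughly as follows. This is a sketch under assumptions: it presumes the exported example contains a top-level `vtap_group_id:` line (possibly commented out), and `set_group_id` is a hypothetical helper, not part of deepflow-ctl. The group ID is the one that appears later in this walkthrough.

```shell
# Hypothetical helper: point an exported config file at a given agent group.
# Assumes the file has a "vtap_group_id:" line, commented out or not.
set_group_id() {
  local file="$1" group_id="$2"
  sed -i -E "s|^#?[[:space:]]*vtap_group_id:.*|vtap_group_id: ${group_id}|" "$file"
}

# Only touch the cluster when deepflow-ctl is actually installed.
if command -v deepflow-ctl >/dev/null 2>&1; then
  deepflow-ctl agent-group-config example > agent-group-test1.yaml
  set_group_id agent-group-test1.yaml g-6e357a9370
  deepflow-ctl agent-group-config create -f agent-group-test1.yaml
fi
```

Editing the ID with `sed` instead of by hand keeps the step repeatable when the same file is reused for another group.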

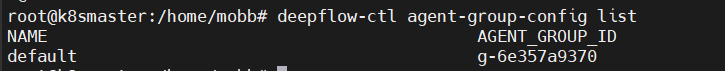

1 deepflow-ctl agent-group-config list

然后就能看到配置文件列表了

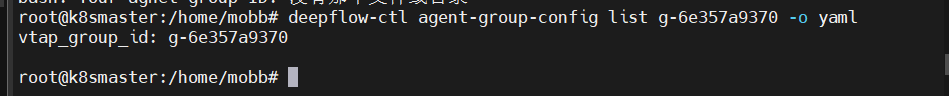

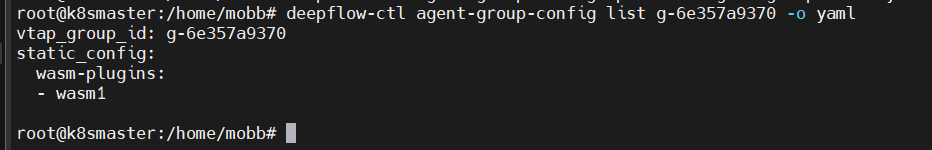

1 deepflow-ctl agent-group-config list g-6e357a9370 -o yaml

这个命令也能看到效果了

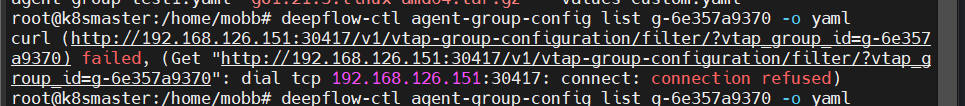

但是有时候会有这种错误

在浏览器打开grafana界面或者重启就好了,因为有时候有些pod莫名其妙就不行了,也不知道什么原因,可能是虚拟机的问题

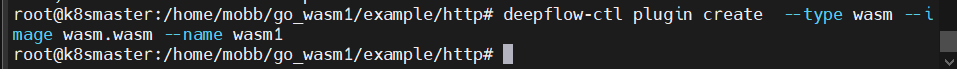

这里就直接上传插件试一下

1 deepflow-ctl plugin create --type wasm --image wasm.wasm --name wasm1

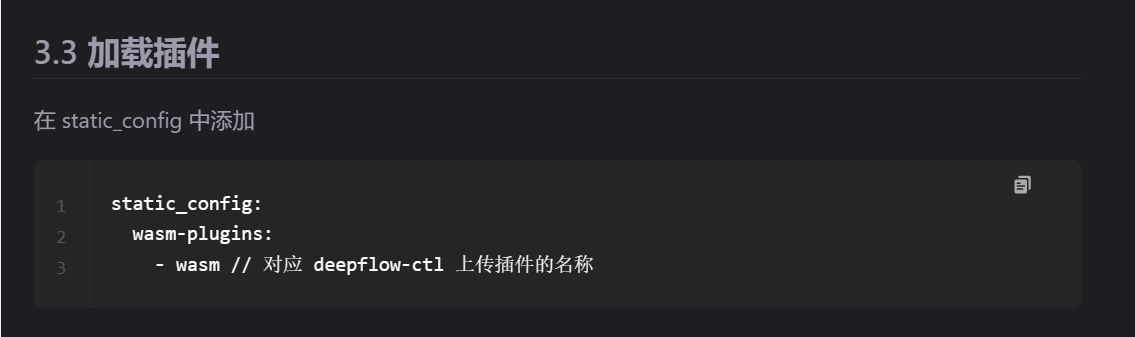

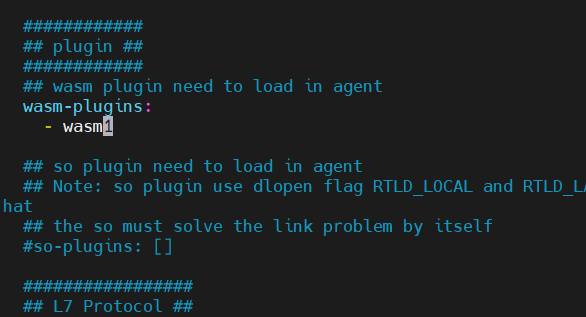

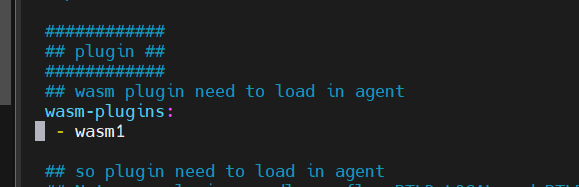

然后需要更改一下那个配置文件

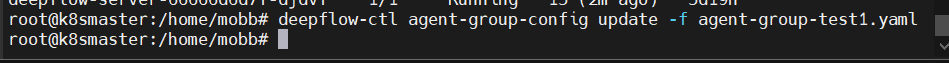

1 deepflow-ctl agent-group-config update -f your-agent-group-config.yaml

然后再查看一下配置

1 deepflow-ctl agent-group-config list g-6e357a9370 -o yaml

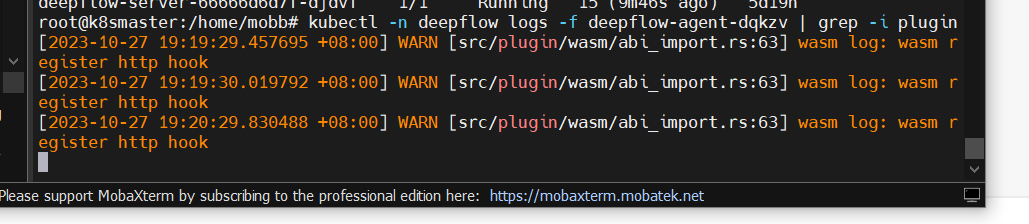

Check whether the plugin was loaded successfully:

    root@k8smaster:/home/mobb

It appears to have worked, so let's look at the web page.

Uploading the plugin on lab1

    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example/http
    NAME      ID
    default   g-ea50fad06f

    root@lab1:/home/lab1
    NAME      AGENT_GROUP_ID
    default   g-ea50fad06f

Upload the plugin

    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example/http
    http.go  wasm1.wasm
    root@lab1:/home/lab1/wasm_plugin/deepflow-wasm-go-sdk-main/example/http

Change the plugin configuration

Update the file

    deepflow-ctl agent-group-config update -f agent-group-config.yaml

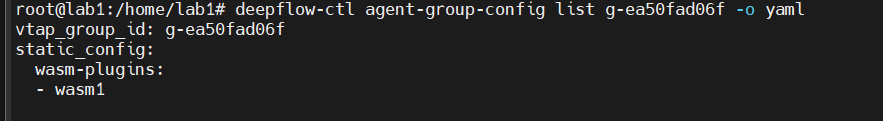

再查看一下配置

1 deepflow-ctl agent-group-config list g-ea50fad06f -o yaml

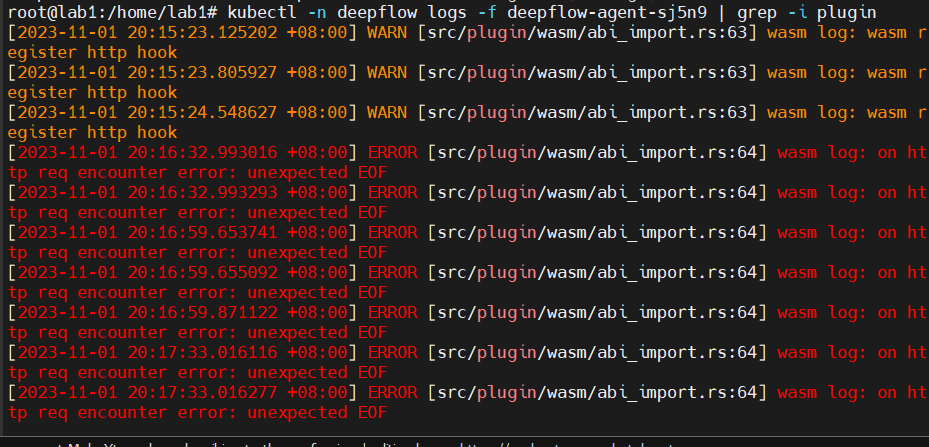

然后看一下日志,这里运行的agent pod有这几个

1 2 3 4 5 6 7 8 9 10 11 12 root@lab1:/home/lab1 NAME READY STATUS RESTARTS AGE deepflow-agent-5bs5n 1/1 Running 13 (19m ago) 45d deepflow-agent-kklnh 1/1 Running 12 (19m ago) 45d deepflow-agent-sj5n9 1/1 Running 13 (19m ago) 45d deepflow-agent-wls6w 1/1 Running 14 (19m ago) 45d deepflow-app-7f69b47dd6-4hv47 1/1 Running 2 (11d ago) 39d deepflow-clickhouse-0 1/1 Running 12 (11d ago) 45d deepflow-grafana-84cdcdf594-8n4rd 1/1 Running 14 (11d ago) 11d deepflow-mysql-6c97f94d8f-pkdsk 1/1 Running 0 11d deepflow-server-67d9688bdc-tnpsb 1/1 Running 6 (11d ago) 44d

随便挑一个都是有个,但是这里有报错

clash启动教程(已部署) 1 2 3 4 5 6 7 8 9 10 11 systemctl start clash export https_proxy=http://127.0.0.1:7890 http_proxy=http://127.0.0.1:7890 all_proxy=socks5://127.0.0.1:7890echo $http_proxy echo $https_proxy
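Since the proxy is only wanted while downloading, it can help to wrap the exports in a pair of functions. A small sketch: `proxy_on`, `proxy_off` and `PROXY_ADDR` are names invented here, and the `no_proxy` entry for the master IP is my own assumption, added so that tools such as kubectl do not send API-server traffic through clash.

```shell
# Address of the local clash listener, as used in the exports above.
PROXY_ADDR="127.0.0.1:7890"

# Turn the proxy on for the current shell.
proxy_on() {
  export http_proxy="http://${PROXY_ADDR}" https_proxy="http://${PROXY_ADDR}"
  export all_proxy="socks5://${PROXY_ADDR}"
  # Assumption: keep local and master-node traffic off the proxy.
  export no_proxy="localhost,127.0.0.1,10.12.52.66"
}

# Turn it off again so cluster tooling talks to its targets directly.
proxy_off() {
  unset http_proxy https_proxy all_proxy no_proxy
}
```

Typical use would be `proxy_on`, run the `wget` or `go get` that needs it, then `proxy_off`.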

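Microservice deployment

Deploying KubeSphere

References:

"k8s创建默认storageclass,解决pvc一直pending问题" (Creating a default StorageClass in k8s to fix PVCs stuck in Pending), 阿文_ing's blog, CSDN

The installation failed at first because there was no default StorageClass, so I installed NFS.

"部署kubesphere时需要默认 StorageClass" (A default StorageClass is required when deploying KubeSphere), CSDN blog

I followed this post, but its author uses CentOS, so the commands have to be replaced with their Ubuntu equivalents.

1. Install the NFS server

       sudo apt update
       sudo apt install nfs-kernel-server

2. Create the directory to share

3. Grant permissions on the directory

       sudo chmod 777 /home/lab1/nfs_share

4. Configure the NFS export

Add the following line to /etc/exports:

    /home/lab1/nfs_share *(rw,sync,no_root_squash,no_subtree_check)

5. Restart the NFS server

       sudo systemctl restart nfs-kernel-server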
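Steps 2 and 4 above are described without their commands, so here is one way they could be scripted. It is only a sketch: `add_nfs_export` is a hypothetical helper, and the export options are simply the ones quoted in step 4. It creates the directory, opens its permissions, and appends the export line only if it is not already there, so it is safe to run twice.

```shell
# Hypothetical helper: create the share and register it exactly once.
add_nfs_export() {
  local share_dir="$1" exports_file="$2"
  local line="${share_dir} *(rw,sync,no_root_squash,no_subtree_check)"
  mkdir -p "$share_dir"
  chmod 777 "$share_dir"
  # Append only when the exact line is not present yet.
  grep -qxF -- "$line" "$exports_file" 2>/dev/null || echo "$line" >> "$exports_file"
}

# On the NFS server, as root:
#   add_nfs_export /home/lab1/nfs_share /etc/exports
#   exportfs -ra             # re-read /etc/exports
#   showmount -e localhost   # confirm the share is exported
```

`chmod 777` mirrors the original step; on anything other than a lab machine a narrower mode and a restricted client list in the export line would be the safer choice.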

6. Create a client that connects to the NFS server

Configuration file nfs-client.yaml:

    kind: Deployment
    apiVersion: apps/v1
    metadata:
      name: nfs-client-provisioner
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: nfs-client-provisioner
      strategy:
        type: Recreate
      template:
        metadata:
          labels:
            app: nfs-client-provisioner
        spec:
          serviceAccountName: nfs-client-provisioner
          containers:
            - name: nfs-client-provisioner
              image: quay.io/external_storage/nfs-client-provisioner:latest
              volumeMounts:
                - name: nfs-client-root
                  mountPath: /persistentvolumes
              env:
                - name: PROVISIONER_NAME
                  value: fuseim.pri/ifs
                - name: NFS_SERVER
                  value: 10.12.52.66
                - name: NFS_PATH
                  value: /home/lab1/nfs_share
          volumes:
            - name: nfs-client-root
              nfs:
                server: 10.12.52.66
                path: /home/lab1/nfs_share

Remember that the other four machines need NFS installed as well. Perform the following on all four:

    sudo apt update
    sudo apt install nfs-common

Create the mount point.

Mount lab1's nfs_share on it:

    sudo mount -t nfs 10.12.52.66:/home/lab1/nfs_share /mnt/test_mount

Then, on lab1, create the resource objects:

    kubectl create -f nfs-client.yaml
    kubectl create -f nfs-client-sa.yaml
    kubectl create -f nfs-client-class.yaml

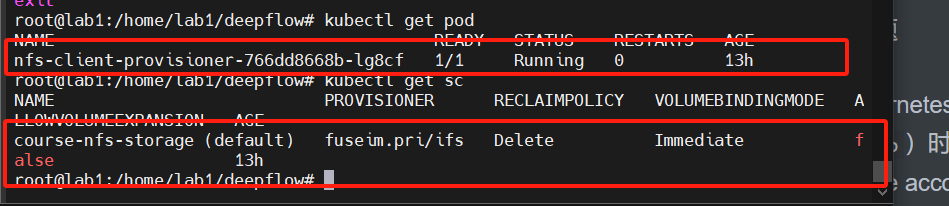

Once both the pod and the StorageClass have been created successfully, this step is done.
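The contents of nfs-client-class.yaml are not shown in these notes. A plausible minimal version, written as a sketch: the class name `course-nfs-storage` is the one patched later in this walkthrough, the provisioner string has to match `PROVISIONER_NAME` in nfs-client.yaml, and the default-class annotation is my addition (whether the original file carried it is unknown).

```shell
# Write a StorageClass manifest that points at the NFS client provisioner.
cat > nfs-client-class.yaml <<'EOF'
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: course-nfs-storage
  annotations:
    # Assumption: mark the class as default right away.
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: fuseim.pri/ifs   # must equal PROVISIONER_NAME in nfs-client.yaml
EOF

# Inspect the result only when kubectl is available.
if command -v kubectl >/dev/null 2>&1; then
  kubectl get storageclass || true
fi
```

With the annotation in place, `kubectl get storageclass` should list the class with `(default)` after its name once the manifest has been created in the cluster.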

After that I redeployed, and this time ran into a permissions problem.

Overview (kubesphere.io)

The error message says that a permissions problem occurred while applying certain Kubernetes objects (specifically globalrulegroups.alerting.kubesphere.io). More precisely, the ks-installer service account does not have enough permissions to get or modify the cluster-scoped globalrulegroups resource.

The relevant part of the error message is:

    globalrulegroups.alerting.kubesphere.io "prometheus-operator" is forbidden: User "system:serviceaccount:kubesphere-system:ks-installer" cannot get resource "globalrulegroups" in API group "alerting.kubesphere.io" at the cluster scope

This means the ks-installer service account has to be granted the required permissions, which is normally done with a ClusterRole and a ClusterRoleBinding.

Here is a simplified procedure for granting ks-installer the permissions it needs.

Create a ClusterRole that allows the required operations on the globalrulegroups resource in the alerting.kubesphere.io API group:

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: globalrulegroups-access
    rules:
      - apiGroups:
          - alerting.kubesphere.io
        resources:
          - globalrulegroups
        verbs:
          - get
          - list
          - watch
          - create
          - update
          - patch
          - delete

Create a ClusterRoleBinding that binds the ClusterRole above to the ks-installer service account:

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: ks-installer-globalrulegroups-access
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: globalrulegroups-access
    subjects:
      - kind: ServiceAccount
        name: ks-installer
        namespace: kubesphere-system

Then apply the resources:

    kubectl apply -f ClusterRole.yaml
    kubectl apply -f ClusterRoleBinding.yaml
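Before redeploying, it may be worth confirming that the binding took effect. A minimal sketch using `kubectl auth can-i` with impersonation; `sa_user` is a hypothetical helper that just builds the user name Kubernetes assigns to a service account, the same string that appears in the error message.

```shell
# Build the user name Kubernetes uses for a service account.
sa_user() {
  echo "system:serviceaccount:$1:$2"
}

# Ask the API server, verb by verb, whether ks-installer may act on the resource.
if command -v kubectl >/dev/null 2>&1; then
  for verb in get list create update patch delete; do
    printf '%-7s ' "$verb"
    # can-i exits non-zero on "no", so do not let that abort the loop.
    kubectl auth can-i "$verb" globalrulegroups.alerting.kubesphere.io \
      --as "$(sa_user kubesphere-system ks-installer)" || true
  done
fi
```

Each line should read `yes` once the ClusterRoleBinding is in place; a `no` for `get` would reproduce the situation in the error message.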

之后再次重新部署,然后查看日志

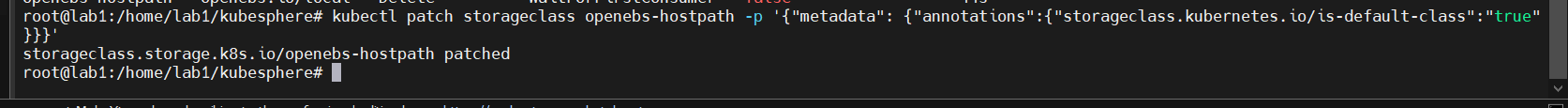

注:如果发现安装还是找不到默认的storageclass

可以尝试一下执行这个命令,把部署的nfs打上默认的标签

1 kubectl patch storageclass course-nfs-storage -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'

1 kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l 'app in (ks-install, ks-installer)' -o jsonpath='{.items[0].metadata.name}' ) -f

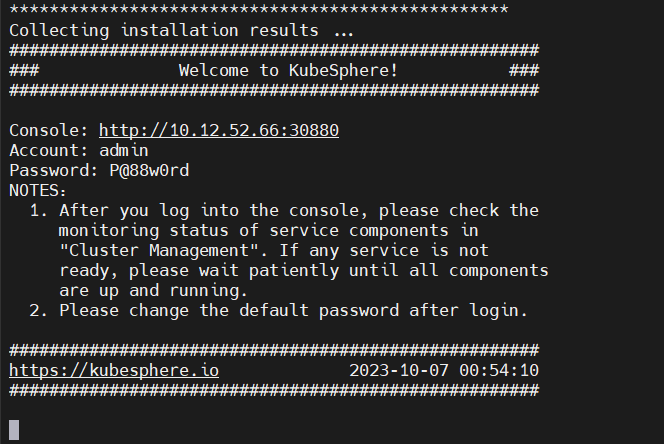

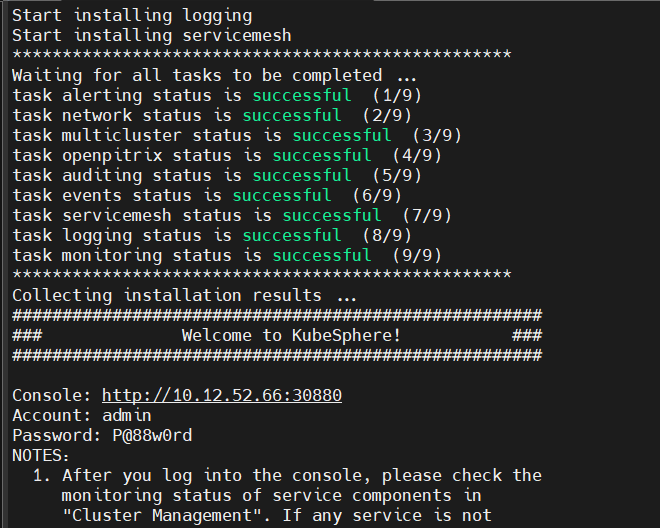

这样就是成功了,可以通过http://10.12.52.66:30880访问,现在用户名和密码是admin,123456.,Aa

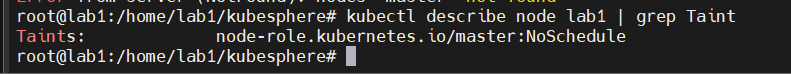

真·部署kubesphere 心路历程 因为上面的只是最小化All-in-one安装,所以并没有什么作用,本来想直接修改cluster-configuration.yaml,但是之后发生了一堆问题,于是干脆删了重装,卸载需要用到官方给的卸载脚本

ks-installer/scripts/kubesphere-delete.sh at release-3.1 · kubesphere/ks-installer (github.com)

把这个复制然后新建一个sh文件就好了,运行结束后就可以删除了,但是过程相当的漫长,之后

过了一遍安装,但是在deploying minio的时候一直卡住

1 TASK [common : KubeSphere | Checking minio] ************************************ changed: [localhost] TASK [common : KubeSphere | Deploying minio] ***********************************

在官方的issues也看到了许多有同样问题的人

Ubuntu 2204 Arm64 安装 v3.3.0 启用插件 一直卡在 Deploying minio · Issue #5273 · kubesphere/kubesphere (github.com)

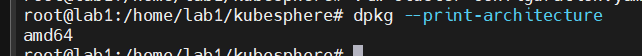

比如这个,但是看到这些全部的问题是因为官方提供的minio镜像是RELEASE.2019-08-07T01-59-21Z ,这个镜像不支持arm架构,这里我就感到奇怪了,Ubuntu20.04不是amd64架构吗(下图所示),但是实在没找到其他解决办法了,就尝试着按照他们的方法来重新搭建,果不其然,不能解决。于是弄了许久,没能解决。

KubeSphere 应用商店

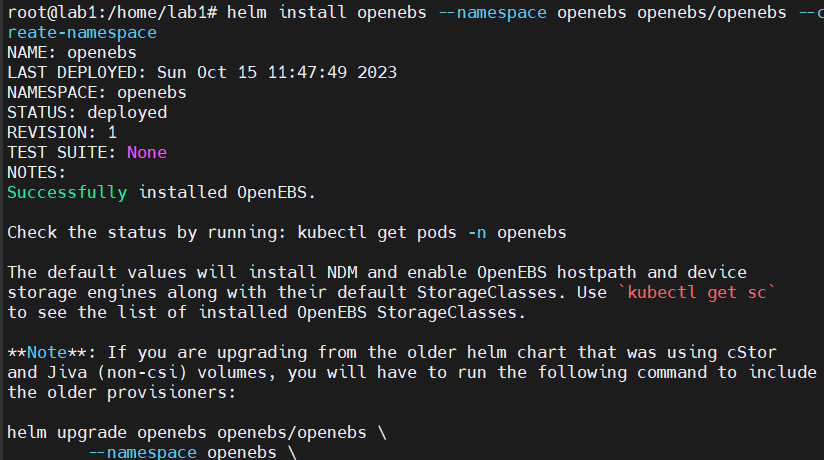

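In the end I simply ran the default installation again, this time with some pluggable components enabled. Once it was up, I went into the console to look for the cause (kubectl describe works too, but the console takes less thought). It again reported that no default PVC could be found, much the same cause as before. So I searched for what to do when the PVC cannot be found, guessing that NFS was not enough, and came across OpenEBS, which can provide a default StorageClass for k8s. With that installed, the installation did succeed, but the monitoring pods still would not start, so the service was still an empty shell. I even ran into ks-controller-manager having no endpoints (for example, calico could not be found under kube-system; perhaps I had set it to localhost again), even though I had clearly changed it to the IP of the master (lab1). This is the error:

    Internal error occurred: failed calling webhook "users.iam.kubesphere.io": failed to call webhook: Post "https://ks-controller-manager.kubesphere-system.svc:443/validate-email-iam-kubesphere-io-v1alpha2?timeout=30s": no endpoints available for service "ks-controller-manager"

So endpointIps should still be changed to 10.12.52.66. The webhook may be the cause (... yet another new term).

I read that the webhook should be deleted, so I first listed the existing webhooks. There is also this page:

访问控制和帐户管理 - 帐号无法登录 - 《KubeSphere v3.1 使用手册》 (Access Control and Account Management: account cannot log in, KubeSphere v3.1 User Manual) - 书栈网 · BookStack. The fix it points to is an issue:

not working well with Kubernetes 1.19 · Issue #2928 · kubesphere/kubesphere (github.com)

    root@lab1:/home/lab1
    NAME                                          WEBHOOKS   AGE
    istio-validator-1-11-2-istio-system           2          10h
    ks-events-admission-validate                  1          97m
    network.kubesphere.io                         1          100m
    notification-manager-validating-webhook       2          94m
    resourcesquotas.quota.kubesphere.io           1          100m
    storageclass-accessor.storage.kubesphere.io   1          100m
    users.iam.kubesphere.io                       1          100m
    validating-webhook-configuration              3          63m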

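That guide says to delete the leftover webhooks, but I would rather uninstall and reinstall the whole thing than risk deleting the wrong one. After uninstalling, only this entry was left. I have no idea what it is for, but it is probably harmless:

    root@lab1:/home/lab1/kubesphere
    NAME                                  WEBHOOKS   AGE
    istio-validator-1-11-2-istio-system   2          8h

Then I reinstalled, and this time, inexplicably, it just worked. I really do not understand what computers are up to sometimes. The webhook problem did not come back. I opened the console nervously, hoping to see data, and was disappointed. There was nothing for it but to look for what else had gone wrong. It turned out to be Prometheus, so of course there was no data, since KubeSphere relies mainly on Prometheus for monitoring. The problem was:

    MountVolume.SetUp failed for volume "secret-kube-etcd-client-certs" : secret "kube-etcd-client-certs" not found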

"kubesphere解决etcd监控证书找不到问题" (KubeSphere: fixing the missing etcd monitoring certificate), CSDN blog

"02、安装kubesphere v3.0.0" (Installing KubeSphere v3.0.0) (yuque.com)

[MountVolume.SetUp failed for volume "secret-kube-etcd-client-certs" : secret "kube-etcd-client-certs" - 知乎 (zhihu.com)](https://zhuanlan.zhihu.com/p/648148640)
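The fix these posts describe is to create the missing secret from the etcd client certificates on the master. A sketch of it follows, assuming a kubeadm-style certificate layout under /etc/kubernetes/pki/etcd. The `create_etcd_cert_secret` wrapper and its file checks are my own additions, and the `etcd-client-ca.crt` entry follows the KubeSphere documentation as I recall it rather than anything quoted in these notes.

```shell
# Hypothetical helper: create the secret that Prometheus mounts for etcd monitoring.
# Assumes kubeadm-style certificates under /etc/kubernetes/pki/etcd.
create_etcd_cert_secret() {
  local pki_dir="${1:-/etc/kubernetes/pki/etcd}"
  local f
  # Refuse to call kubectl if any certificate file is missing.
  for f in ca.crt healthcheck-client.crt healthcheck-client.key; do
    if [ ! -f "${pki_dir}/${f}" ]; then
      echo "missing ${pki_dir}/${f}" >&2
      return 1
    fi
  done
  kubectl -n kubesphere-monitoring-system create secret generic kube-etcd-client-certs \
    --from-file=etcd-client-ca.crt="${pki_dir}/ca.crt" \
    --from-file=etcd-client.crt="${pki_dir}/healthcheck-client.crt" \
    --from-file=etcd-client.key="${pki_dir}/healthcheck-client.key"
}

# On the master node, as root:
#   create_etcd_cert_secret
```

After the secret exists, the Prometheus pod should be able to mount the volume on its next restart, which fits the "wait a while" described next.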

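I used the command from the Zhihu post, waited a while, and it worked. That was not easy: the whole deployment took two days. Here are the complete deployment commands.

Fetch the configuration files

    wget https://github.com/kubesphere/ks-installer/releases/download/v3.3.1/cluster-configuration.yaml
    wget https://github.com/kubesphere/ks-installer/releases/download/v3.3.1/kubesphere-installer.yaml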