Python常见字符串/列表操作

len

find (不存在返回-1,存在返回索引值)

1 2 s='china' print(s.find('a'))

startswith,endswith 判断字符串是不是以谁谁谁开头/结尾

1 2 3 s='china' print(s.startswith('h')) print(s.endswith('a'))

count 返回str在strat和end之间出现的次数

1 2 s='aaabb' print(s.count('b'))

replace 替换字符串中指定内容,如果指定次数count,则替换不会超过count次

1 2 s='cccbb' print(s.replace('c','d'))

split 通过参数的内容切割字符串

1 2 s='1#2#3#4' print(s.split('#'))

upper lower 大小写互换

strip 去空格

1 2 s=' a ' print(len(s.split()))

join 字符串拼接

1 2 s='hello' print(s.join('a'))

append 列表添加元素

insert

extent

1 2 3 4 a1=[1,2,3] a2=[4,5,6] a1.extend(a2) #a1=[1,2,3,4,5,6]

del:根据下标进行删除

pop:删除最后一个元素

remove

Python常见元组/字典操作 1 2 3 4 5 6 7 8 person={'name' :'老马' ,'age' :18 } del person['age' ]del personperson.clear()

1 2 3 4 5 6 7 8 9 10 11 12 13 14 person={'name' :'老马' ,'age' :18 } for key in person.keys(): print (key) for value in person.values(): print (value) for key,value in person.items(): print (key,value) for item in person.items(): print (item)

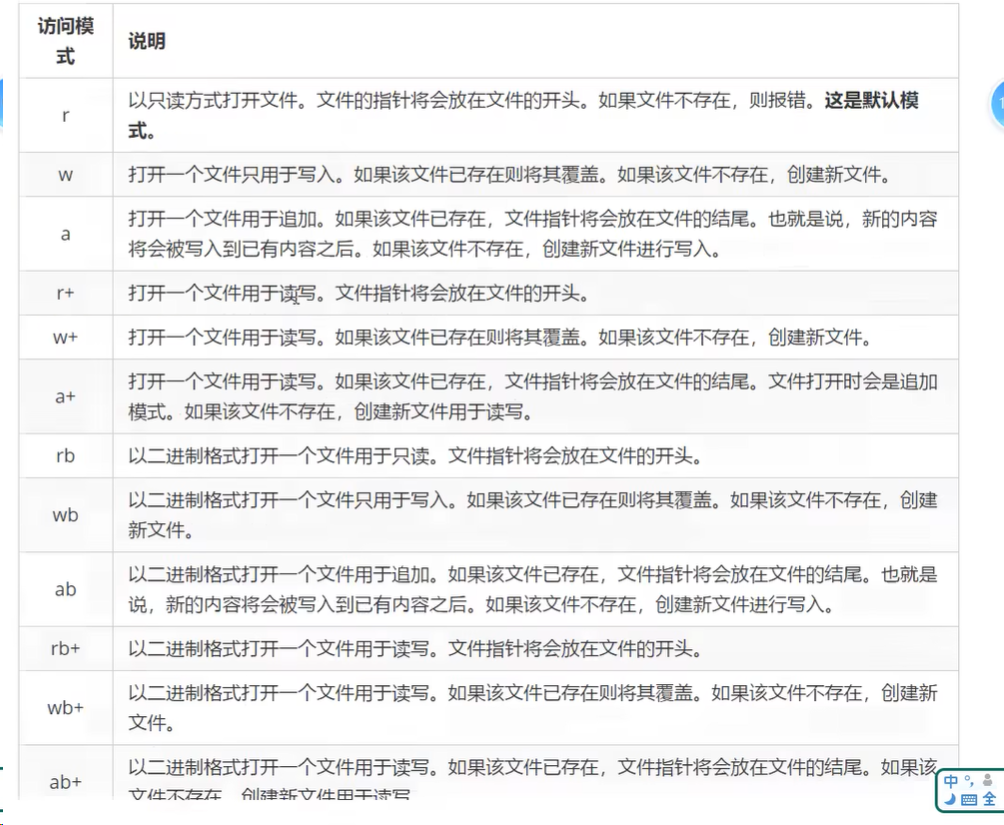

Python文件常见操作 打开文件

1 2 3 4 open (文件路径,访问权限) fp = open ('a.txt' ,'w' ) fp.write('hello' ) fp.close()

写数据

1 2 3 4 5 fp = open ('a.txt' ,'w' ) fp.write('hello\n' *5 ) fp = open ('a.txt' ,'a' ) fp.write('hello\n' *5 )

读数据

1 2 3 4 5 6 7 8 9 10 11 12 fp = open ('a.txt' ,'r' ) content=fp.read() print (content)content=fp.read() print (content)content = fp.readlines() print (content)

序列化与反序列化

通过文件操作,我们可以将字符串写入到一个本地文件。但是,如果是一个对象(例如列表、字典、元组等),就无法直接写入到一个文件里,需要对这个对象进行序列化,然后才能写入到文件里 。Python中提供了JSON这个模块用来实现数据的序列化和反序列化。JSON模块 JSON的本质是字符串 。dumps方法的作用是把对象转换成为字符串,它本身不具备将数据写入到文件的功能。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 import jsonname_list = ['zs' ,'ls' ] names = json.dumps(nume_list) fp=open ('./a.txt' ,'a' ) fp.write(names) fp.close() json.dump(name_list,fp)

1 2 3 4 5 6 7 8 9 10 11 12 13 fp = open ('text.txt' ,'r' ) content=fp.read() print (content)result = json.loads(content) print (result)fp = open ('./a.txt' ,'r' ) result = json.load(fp) print (type (result))

读取文件异常

如果不对异常进行处理,程序可能会由于异常直接中断掉

1 2 3 4 5 6 7 8 9 10 11 异常的格式 try : 可能出现异常的代码 except 异常的类型 友好的提示 try : fp=open ('text.txt' ,'r' ) fp.read() except FileNotFoundError: print ('系统正在升级,请稍后重试' )

HTML结构介绍 1 2 3 4 5 6 7 8 9 table 表格 tr 行 td 列 <table width ="200px" height ="200px" border ="1px" <tr > <td > </td > </tr > </table >

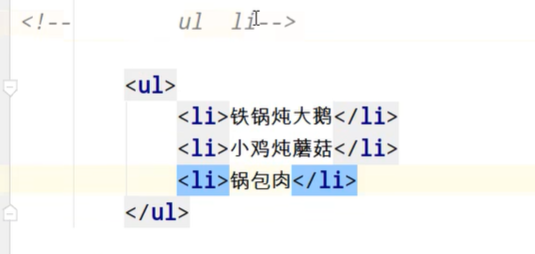

ul li是无序列表

ol和li是有序列表

爬虫分类 1 2 3 4 5 6 7 8 9 10 11 12 13 通用爬虫: 实例 百度J360、google、sougou等搜索引擎---伯乐在线 功能 访问网页->抓取数据->数据存储->数据处理->提供检索服务 robots协议 一个约定俗成的协议,添加robots.txt文件,来说明本网站哪些内容不可以被抓取,起不到限制作用自己写的爬虫无需遵守 网站排名(SEO) 1.根据pagerank算法值进行排名(参考个网站流量、点击率等指标) 2.百度竞价排名 缺点 1.抓取的数据大多是无用的 2.不能根据用户的需求来精准获取数据

1 2 3 4 5 6 7 8 9 10 聚焦爬虫 功能 根据需求,实现爬虫程序,抓取需要的数据 设计思路 1.确定要爬取的ur1 如何获取ur1 2.模拟浏览器通过http协议访问url,获取服务器返回的htm1代码 如何访问 3.解析htm1字符串(根据一定规则提取需要的数据) 如何解析

反爬手段 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 1. User-Agent: user Agent中文名为用户代理,简称 UA,它是一个特殊字符串头,使得服务器能够识别客户使用的操作系统及版本、CPU 类型、浏览器及版本、浏览器渲染引擎、浏览器语言、浏览器插件等。 2. 代理IP 西次代理 快代理 什么是高匿名、匿名和透明代理?它们有什么区别? 1. 使用透明代理,对方服务器可以知道你使用了代理,并且也知道你的真实IP. 2. 使用匿名代理,对方服务器可以知道你使用了代理,但不知道你的真实IP。 3. 使用高匿名代理,对方服务器不知道你使用了代理,更不知道你的真实IP。 3. 验证码访问 打码平台 云打码平台 超级鹰 4. 动态加载网页 网站返回的是js数据 并不是网页的真实数据 selenium驱动真实的浏览器发送请求 5. 数据加密 分析js代码

urllib库使用 总结

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 urllib.request.urlopen()模拟浏览器向服务器发送请求 response 服务器返回的数据 response的数据类型是HttpResponse 字节-->字符串 解码decode 字符串-->字节 编码encode read() 字节形式读取二进制扩展:rede(5 )返回前几个字节 readline() 读取一行 readlines()一行一行读取 直至结束 getcode() 获取状态码 geturl() 获取ur1 getheaders()获取headers urllib.request.urlretrieve() 请求网页 请求图片 请求视频

基本使用 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 url = 'http://www.baidu.com' response = urllib.request.urlopen(url) content = response.read().decode('utf-8' ) print (content)

一个类型和六个方法

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 import urllib.requesturl = 'http://www.baidu.com' response = urllib.request.urlopen(url) content = responese.read(5 ) content = response.readline() content = response.readlines() print (response.getcode())print (response.geturl())print (response.getheaders())

下载网页 1 2 3 4 5 6 7 8 9 10 11 12 url_page = 'http://www.baidu.com' urllib.request.urlretrieve('url' ,filename) url_img='url' urllib.request.urlretrieve('url' ,filename)

请求对象的定制 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 import urllib.request''' # url的组成 # https://www.baidu.com/s?wd=周杰伦 # http/https www.baidu.com 80/443 s wd=周杰伦 # 协议 主机 端口号 路径 参数 锚点 # http 80 # https 443 # mysql 3306 # oracle 1521 # redis 6379 # mongodb 27017 ''' headers = { 'User-Agent' : 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36' } url = 'http://172.22.255.18/srun_portal_pc?ac_id=1&theme=pro' request = urllib.request.Request(url=url,headers=headers) response = urllib.request.urlopen(request) content = response.read().decode('utf-8' ) print (content)

编解码 quote方法

1 2 3 4 5 6 7 8 9 10 11 import urllib.requestimport urllib.parsename = urllib.parse.quote('周杰伦' ) print (name)url = 'https://www.baidu.com/s?wd=' url = url+name

urlencode方法

1 2 3 4 5 6 7 8 9 import urllib.parsedata = { 'wd' :'周杰伦' , 'sex' :'男' , 'location' :'中国台湾省' } a = urllibparse.urlencode(data)

Post请求 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 import urllib.parseimport urllib.requestimport jsonurl = 'https://fanyi.baidu.com/sug' headers = { 'user-agent' : 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36' } data = { 'kw' : spider } data = urllib.parse.urlencode(data).encode('utf-8' ) request = urllib.request.Request(url=url,data=data,headers=headers) response urllib.request.urlopen(request) content = response.read().decode('utf-8' ) obj = json.loads(content) print (obj)

请求百度翻译详细翻译

注:与视频不同的是找不到他的v2api,这里爬取不到数据,可能是时间戳的问题。

补充:搜索后发现

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 import urllib.requestimport jsonimport timeurl = 'https://fanyi.baidu.com/ait/text/translate' current_timestamp = str (int (time.time() * 1000 )) headers = { "accept" : "text/event-stream" , "accept-encoding" : "gzip, deflate, br, zstd" , "accept-language" : "zh-CN,zh;q=0.9" , "acs-token" : "1732356101697_1732436485681_5CjkmRFp7GcssfzhsJBNfECmy/7oqAu5eHoeQGjfduQBVahwJT+sXAelKvBO4NwxRe3sVdWRr3NT++GAEltLHY9ul/xKFFTCmmrAHQnQMwTpRBODRaPcM0ZLtja9G0qH4KNIQLxHDuza8I3exTJVmAtB/jVVjXSMbPgia2rt+Ft0awa/7YN7DGPIlDYcYrY8XGkuKKxMvQ/yBVR3RUhDz8Yb4I4z/gfnZqo1e4BK15Kd60PINwsLu20nZbjz1TSIVDJlPqLC+cSukwE5KB4CuDx+IPNeH666foeZNbD0e6RO0vSV+I6WuevCFrGfzRT41/hwa7YiTpn7CZFIHnp5sGpPxQ9xe0IbGYXBitKyBQjPwvIWLYkK1OEeu3vDSTKxHn+41hhupyk6+pGSXMH3wtatAExlTjNWE5EOPZIu94O7DfQS4H/bUmOScJpdk1vQJnwVwBGo9xHHRO8tUZFk7m0eNK+S4e5/tlKh+Xks144=" , "connection" : "keep-alive" , "content-length" : "137" , "content-type" : "application/json" , "cookie" : "BIDUPSID=10161F3D56254D029FE060EC8EE55440; PSTM=1712035906; BAIDUID=8552D5E5687DC8B0A76CD324C49A0F93:FG=1; BAIDUID_BFESS=8552D5E5687DC8B0A76CD324C49A0F93:FG=1; ZFY=E5zTdRUPRMNuKoaOGA2mchAl6DrUkrPaMuPxGeQ5HbI:C; BAIDU_WISE_UID=wapp_1722399185495_611; H_PS_PSSID=60360_60678_60682_60728_60749; ab_sr=1.0.1_Y2QyMmM2ODZmNGRkNGQ1ZjFmMTFkYmUzMTYwMGFlOTIxOTk2ZjQwNjkyZTcwZGU5NjUyM2U5MzkyZjljOTE0MjM3YWY0ZDlkZDU0NDc3MDU4MmIzMWM3MmQxMmMwZDY4ODJmYmMxMTUyZDBlOTNhNjIxNWJkZDdlODk5NjIxZGU5ZDIzN2RlZjE0MjQ3NDI2ZjYxODU0NzllZGIwNzFmOQ==; RT=\"z=1&dm=baidu.com&si=7cc9449d-84ee-487d-98f6-d46e371f725a&ss=m3vbcyx1&sl=4&tt=7tm&bcn=https%3A%2F%2Ffclog.baidu.com%2Flog%2Fweirwood%3Ftype%3Dperf&ld=l95v\"" , "host" : "fanyi.baidu.com" , "origin" : "https://fanyi.baidu.com" , "referer" : "https://fanyi.baidu.com/mtpe-individual/multimodal?query=love&lang=en2zh" , "sec-ch-ua" : "\"Google Chrome\";v=\"131\", \"Chromium\";v=\"131\", \"Not_A Brand\";v=\"24\"" , "sec-ch-ua-mobile" : "?0" , "sec-ch-ua-platform" : "\"Windows\"" , "sec-fetch-dest" : "empty" , "sec-fetch-mode" : "cors" , "sec-fetch-site" : "same-origin" , "user-agent" : "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36" } data = { "query" : "love" , "from" : "en" , "to" : "zh" , "reference" : "" , "corpusIds" : [], "needPhonetic" : 'true' , "domain" : "common" , "milliTimestamp" : current_timestamp } json_data = json.dumps(data).encode('utf-8' ) req = urllib.request.Request(url, data=json_data, headers=headers) try : response = urllib.request.urlopen(req) content = response.read().decode('utf-8' ) print (content) except Exception as e: print (f"请求失败: {e} " )

ajax的get请求 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 import urllib.requestimport jsonimport timeurl = 'https://movie.douban.com/j/chart/top_list?type=5&interval_id=100%3A90&action=&start=0&limit=20' headers = { "user-agent" : "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36" } data = { } json_data = json.dumps(data).encode('utf-8' ) req = urllib.request.Request(url=url, headers=headers) try : response = urllib.request.urlopen(req) content = response.read().decode('utf-8' ) obj = json.loads(content) with open ('./douban250.json' , 'w' , encoding='utf-8' ) as fp: fp.write(content) except Exception as e: print (f"请求失败: {e} " )

爬取豆瓣多个页面

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 import urllib.requestimport urllib.parseimport jsonfrom flask import requestdef create_url (page ): base_url = 'https://movie.douban.com/j/chart/top_list?type=5&interval_id=100%3A90&action=&' headers = { "user-agent" : "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36" } data = { 'start' : (page - 1 ) * 20 , 'limit' : 20 } data = urllib.parse.urlencode(data) url = base_url + data request = urllib.request.Request(url, headers=headers) return request if __name__ == '__main__' : start = int (input ('请输入起始页面:' )) end = int (input ('请输入结束页面:' )) for page in range (start, end + 1 ): request = create_url(page) try : response = urllib.request.urlopen(request) obj = json.loads(response.read().decode('utf-8' )) with open ('douban250_' +str (page)+'.json' , 'a' , encoding='utf-8' ) as fp: fp.write(json.dumps(obj, ensure_ascii=False , indent=4 ,)) print (f'第 {page} 页生成成功' ) except Exception as e: print (f'请求错误,第 {page} 页生成失败,错误信息: {e} ' )

ajax的post请求 爬取肯德基城市店铺信息

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 import urllib.requestimport urllib.parseimport jsondef create_request (page ): base_url = 'https://www.kfc.com.cn/kfccda/ashx/GetStoreList.ashx?op=cname' headers = { "user-agent" : "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36" } data = { 'cname' : '北京' , 'pid' : '' , 'pageIndex' : page, 'pageSize' : 10 } data = urllib.parse.urlencode(data).encode('utf-8' ) request = urllib.request.Request(url = base_url, data = data, headers = headers) return request def get_content (request ): response = urllib.request.urlopen(request) content = response.read().decode('utf-8' ) return content def get_down (content,page ): try : obj = json.loads(content) with open ('KFC_' + str (page) + '.json' , 'a' , encoding='utf-8' ) as fp: fp.write(json.dumps(obj, ensure_ascii=False , indent=4 )) print (f'第 {page} 页生成成功' ) except Exception as e: print (f'请求错误,第 {page} 页生成失败,错误信息: {e} ' ) if __name__ == '__main__' : start = int (input ('请输入起始页面:' )) end = int (input ('请输入结束页面:' )) for page in range (start, end + 1 ): request = create_request(page) content = get_content(request) down_load = get_down(content,page)

URLError\HTTPError 其实一个Exception就可以包括所有异常

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 import urllib.requestimport urllib.errorurl = 'https://blog.csdn.net/dQCFKyQDXYm3F8rB0/article/details/14399987?spm=1001.2014.3001.5502' headers = { "user-agent" : "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36" } request = urllib.request.Request(url, headers=headers) try : response = urllib.request.urlopen(request) response = response.read().decode('utf-8' ) print (response) except urllib.urlerror.URLError as e: print (e)

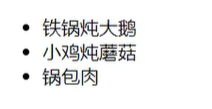

微博绕过登录获取个人信息(展示cookie作用) 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 import urllib.requestimport urllib.parseurl ='https://weibo.cn/6327481426/info' headers = { "user-agent" : "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36" } request = urllib.request.Request(url=url,headers=headers) response = urllib.request.urlopen(request) content = response.read().decode('utf-8' ) print (content)

添加上cookie

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 import urllib.requestimport urllib.parseurl = 'https://weibo.cn/6327481426/info' headers = { "user-agent" : "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36" , 'cookie' : '_T_WM=f39be0bd871fec6d17b7771930f8fa7f; SCF=AjAOyF0gSCGvVUYEzHMSL9buJcJKPwNtgKaoQGFwIkUObXfT_WARC7fXL1_sd9zxNV5MXDOfaIZFdAtojBOmdWY.; SUB=_2A25KR3QRDeRhGeBN6VUV-C_IyTqIHXVpPYnZrDV6PUJbktANLVT2kW1NRJ12p2JlahbvKFXJIgdR37UGEmncLrS8; SUBP=0033WrSXqPxfM725Ws9jqgMF55529P9D9W5ZUmT08bZnfCU6nRPwVTdH5NHD95Qce0zNShnpShzcWs4DqcjMi--NiK.Xi-2Ri--ciKnRi-zNSoeES0BReKBESntt; ALF=1735037249' } request = urllib.request.Request(url=url, headers=headers) response = urllib.request.urlopen(request) content = response.read().decode('utf-8' ) print (content)

referer的作用:

Handler处理器 定制跟高级的请求头(动态的cookie和代理不能使用请求对象的定制)

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 import urllib.requestimport urllib.parseurl = 'https://www.baidu.com' headers = { "user-agent" : "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36" , } request = urllib.request.Request(url=url, headers=headers) handler = urllib.request.HTTPHandler() opener = urllib.request.build_opener((handler)) responese = opener.open (request) content = responese.read().decode('utf-8' ) print (content)

handler使用代理ip访问 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 import urllib.requestimport urllib.parseurl = 'http://www.baidu.com/s?wd=ip' headers = { "user-agent" : "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36" , } request = urllib.request.Request(url=url, headers=headers) proxies = { 'http' :'218.87.205.154' } handler = urllib.request.ProxyHandler(proxies=proxies) opener = urllib.request.build_opener((handler)) responese = opener.open (request) content = responese.read().decode('utf-8' ) with open ('daili.html' ,'w' ,encoding='utf-8' ) as fp: fp.write(content)

代理池 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 import urllib.requestimport urllib.parseimport randomurl = 'http://www.baidu.com/s?wd=ip' headers = { "user-agent" : "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36" , } request = urllib.request.Request(url=url, headers=headers) proxies_pool = { {'http' :'218.87.205.154' }, {'http' :'218.87.205.154' } } proxies = random.choice(proxies_pool) handler = urllib.request.ProxyHandler(proxies=proxies) opener = urllib.request.build_opener((handler)) responese = opener.open (request) content = responese.read().decode('utf-8' ) with open ('daili.html' ,'w' ,encoding='utf-8' ) as fp: fp.write(content)

xpath的基本使用 谷歌浏览器直接安装xpath就好

安装lxml

1 2 3 4 5 frmom lxml import etree

xpath基本语法

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 xpath基本语法: 1. 路径查询 //:查找所有子孙节点,不考虑层级关系 //:找直接子书点 2. 谓词查询 //div[@id ] //div[@id ="maincontent" ] 3. 属性查询 //@class 4.模糊查询 //div [contains (@id ,"he" )] //div [starts -with (@id , "he" )] 5.内容查询 //div /h1 /text () 6.逻辑运算 //div [@id ="head " and @class ="s_down "] //title | //price

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 import urllib.requestimport urllib.parsefrom lxml import etreetree = etree.parse('./xpath的基本使用.html' ) li_list = tree.xpath('//body/ul/li' ) li_list_id = tree.xpath('//body/ul/li[@id]' ) li_list_id_text = tree.xpath('//body/ul/li[@id="1"]/text()' ) li_list_id_class_text = tree.xpath('//body/ul/li[@id="1"]/@class' ) li_list_id_class_text_2 = tree.xpath('//body/ul/li[@id="1" and @class="c1"]/text()' ) li_list_id_l = tree.xpath('//body/ul/li[contains(@id,"l")]/text()' ) print (len (li_list))print (li_list_id_text)print (li_list_id_class_text)print (li_list_id_l)

使用xpath爬取网页图片 案例,爬取站长之家图片

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 import urllib.requestimport urllib.parseimport jsonfrom lxml import etreedef create_request (page ): base_url = 'https://sc.chinaz.com/tupian/gudianmeinvtupian.html' if page != 1 : base_url = 'https://sc.chinaz.com/tupian/gudianmeinvtupian_' + str (page) + '.html' headers = { "user-agent" : "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36" } request = urllib.request.Request(url=base_url, headers=headers) return request def get_content (request ): response = urllib.request.urlopen(request) content = response.read().decode('utf-8' ) return content def get_down (content, page ): try : tree = etree.HTML(content) name_list = tree.xpath('//div[@class="item"]//img/@alt' ) src_list = tree.xpath('//div[@class="item"]//img/@data-original' ) for name, src in zip (name_list, src_list): url = 'https:' + src urllib.request.urlretrieve(url, './img/' + name + '.jpg' ) except Exception as e: print (f'请求错误,第 {page} 页生成失败,错误信息: {e} ' ) if __name__ == '__main__' : start = int (input ('请输入起始页面:' )) end = int (input ('请输入结束页面:' )) for page in range (start, end + 1 ): request = create_request(page) content = get_content(request) down_load = get_down(content, page)

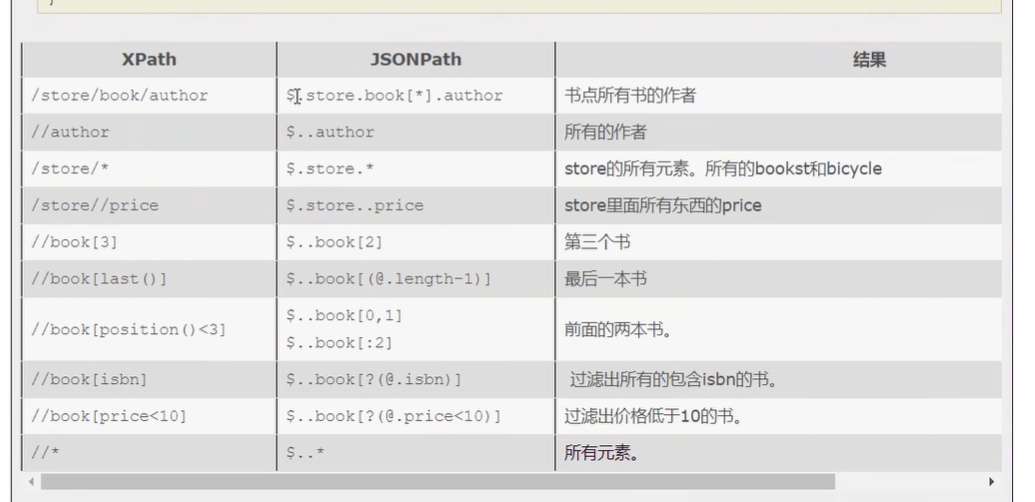

jsonpath的基本使用和案例 安装jsonpath

案例,爬取淘票票城市

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 import jsonpathimport urllib.parseimport urllib.requestimport jsonobj=json.load(open ('./test.json' ,'r' ,encoding='utf-8' )) author_list = jsaonpath.jsonpath(obj,'$.store.book[*].author' ) author_list=jsonpath.jsonpath(obj,'$..author' ) tag_list=jsonpath.jsonpath(obj,'$.store.*' ) price_list=jsonpath.jsonpath(obj,'$.store..price' ) book=jsonpath.jsonpath.jsonpath(obj,'$..book[2]' ) book=jsonpath.jsonpath(obj,'$..book[(@length-1)]' ) book_list=jsonpath.jsonpath(obj,'$..book[:2]' ) book_list=jsonpath.jsonpath(obj,'$..book[?(@.isbn)]' ) book_list=jsonpath.jsonpath(obj,'$../book[?(@.price>10)]' )

bs4的基本使用 安装bs4

bs4的基本使用

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 import requestsfrom bs4 import BeautifulSoupsoup = BeautifulSoup(open ('bs4的基本使用.html' , encoding='utf-8' ), 'lxml' ) obj = soup.select('#p1' )[0 ] print (obj.attrs)print (obj.attrs.get('class' ))

案例:抓取星巴克的菜单

1 2 3 4 5 6 7 8 9 10 11 12 13 14 import urllib.requestfrom bs4 import BeautifulSoupurl='https://www.starbucks.com.cn/menu/' responese=urllib.request.urlopen(url) content=responese.read().decode('utf-8' ) with open ('starbucks.html' ,'w' ,encoding='utf-8' ) as fp: fp.write(content) soup = BeautifulSoup(content,'lxml' ) name_list=soup.select('ul[class="grid padded-3 product] strong' )

selinum Chromedriver下载

参考的这个博客:

安装selenium和关于chrome高版本对应的driver驱动下载安装【Win/Mac 】_chrome driver125-CSDN博客

下载地址:

1.(123/125开头)https://googlechromelabs.github.io/chrome-for-testing/

2.(70~80~90~114开头)https://chromedriver.chromium.org/downloads

3.(还有一个可以看 ChromeDriver )

http://chromedriver.storage.googleapis.com/index.html

我用的是这个网页Chrome for Testing availability

选的带有ChromeDriver 的win/mac/Linux 系统。

安装完后执行下面的程序看是否成功

1 2 3 4 5 6 from time import sleepfrom selenium import webdriverdriver = webdriver.Chrome() driver.get("https://www.baidu.com" )

校园网 查看当前用户和用户组

1 chmod -R +x /home/mobb/login/chrome-linux64

由于机器不知道为什么始终没有实现X11转发弹不出来弹框,再进行了几种远程的虚拟桌面连接的尝试(xrdp、vnc),还是不能成功,大概率还是X11转发的问题,在其他机器和虚拟机是成功的。

google搜索基本符号

完全匹配搜索:“xxx”

+号指定一个一定存在的关键词:“有限公司” +百科

-号指定一个一定不存在的关键词:“有限公司” -全书

| 或:有限|责任

AND 且:有限 AND 控股

Site搜索语法(查找子域名):

inurl搜索语法(批量查找后台、批量找注入点、批量找指定漏洞目标站点等):

inurl:baidu.com (标识搜索的结果中一定有baidu.com)

inurl:1.txt

inurl:system/login.php

inurl:admin/login.asp

inurl:php?tid=5(批量找注入点)

intitle搜索语法:

intitle:后台管理(搜索标题为指定内容的结果)

intitle:管理员登录(搜索思路多样化,可以使用不同的关键词,且达到一样的效果)

intitle:登录 inurl:admin

Cache缓存搜索(类似于百度的快照功能)

拓展:

管理员用户名:

www.71tv.net.cn

比如这个网站中可以找到”发布人“的信息,那么就可以用site+“发布人”来查找信息

找目标脚本语言

www.csc.com.tw

//很多伪静态网站,我们可以通过google 找到真实的脚本语言

site:csc.com asp

inurl:ewebeditor/admin_login.asp

inurl:phpmtadmin/index.php

找c段主机

site:49.122.21.*

先找到目标域名,然后用爱站找ip,然后用site找ip

1 dirsearch -u qtxrmyy.com -e .php,.html,.txt --full-url

奇台:

奇台人

新疆第一窖古城酒业有限公司

新疆美景天成环保科技有限公司

奇台信息网

奇台 县狼度科技有限公司奇台 圈儿奇台县人民医院

新疆天山东部现代农产品物流园有限公司

奇台县党建网

qthysc.com

qitai123.net

https://www.qtsx.cn

xjtswl.com

09946830999.com

cms.hrbesd.com

和硕县:

博州: